Posts by Dustin Franklin

Edge Computing

Oct 19, 2023

Bringing Generative AI to Life with NVIDIA Jetson

Recently, NVIDIA unveiled Jetson Generative AI Lab, which empowers developers to explore the limitless possibilities of generative AI in a real-world setting...

11 MIN READ

Robotics

Oct 05, 2020

Introducing the Ultimate Starter AI Computer, the NVIDIA Jetson Nano 2GB Developer Kit

Today, NVIDIA announced the Jetson Nano 2GB Developer Kit, the ideal hands-on platform for teaching, learning, and developing AI and robotics applications. The...

7 MIN READ

Robotics

Aug 19, 2020

Announcing ONNX Runtime Availability in the NVIDIA Jetson Zoo for High Performance Inferencing

Microsoft and NVIDIA have collaborated to build, validate and publish the ONNX Runtime Python package and Docker container for the NVIDIA Jetson platform,...

6 MIN READ

Robotics

May 14, 2020

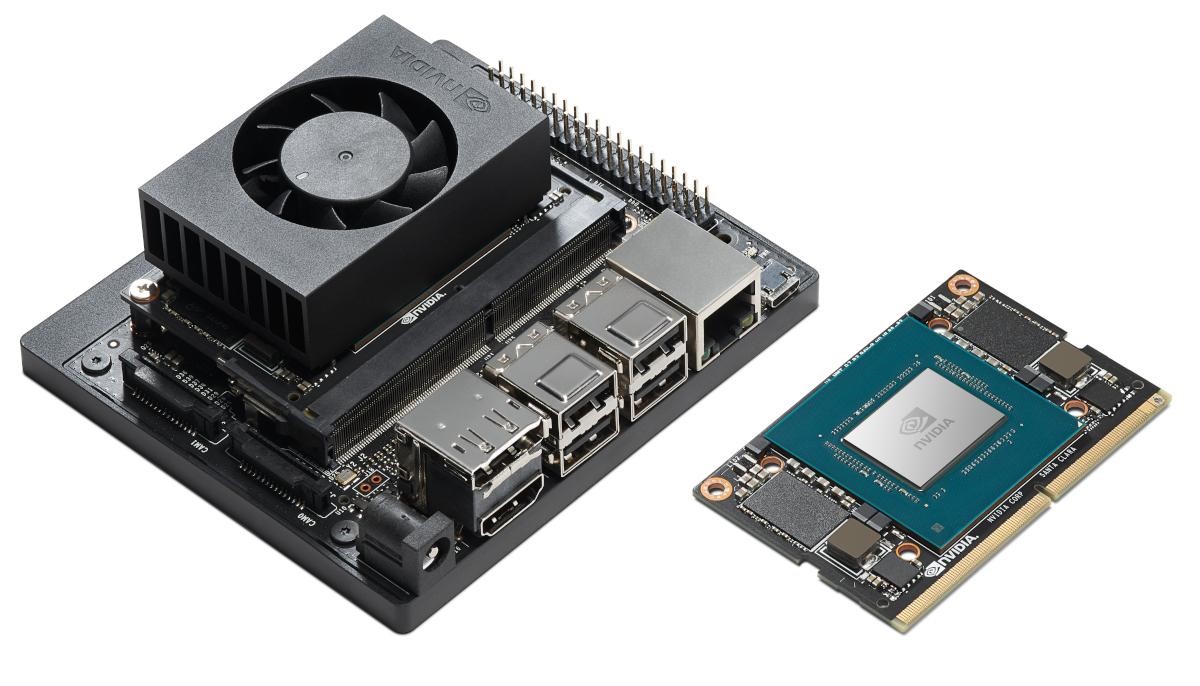

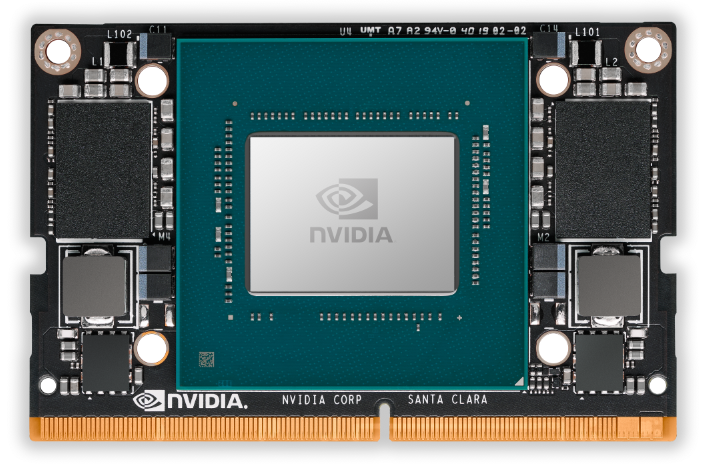

Bringing Cloud-Native Agility to Edge AI Devices with the NVIDIA Jetson Xavier NX Developer Kit

Figure 1. NVIDIA Jetson Xavier NX Developer Kit and production compute module. Today, NVIDIA announced the NVIDIA Jetson Xavier NX Developer Kit, which is...

11 MIN READ

Robotics

Nov 06, 2019

Introducing Jetson Xavier NX, the World’s Smallest AI Supercomputer

Today NVIDIA announced Jetson Xavier NX, the world’s smallest, most advanced embedded AI supercomputer for autonomous robotics and edge computing devices....

9 MIN READ

Data Science

Mar 18, 2019

Jetson Nano Brings AI Computing to Everyone

Update: Jetson Nano and JetBot webinars. We've received a high level of interest in Jetson Nano and JetBot, so we're hosting two webinars to cover these...

13 MIN READ