Posts by Jeff Larkin

Data Center / Cloud

Mar 06, 2024

How to Accelerate Quantitative Finance with ISO C++ Standard Parallelism

Quantitative finance libraries are software packages that consist of mathematical, statistical, and, more recently, machine learning models designed for use in...

10 MIN READ

Simulation / Modeling / Design

Nov 13, 2023

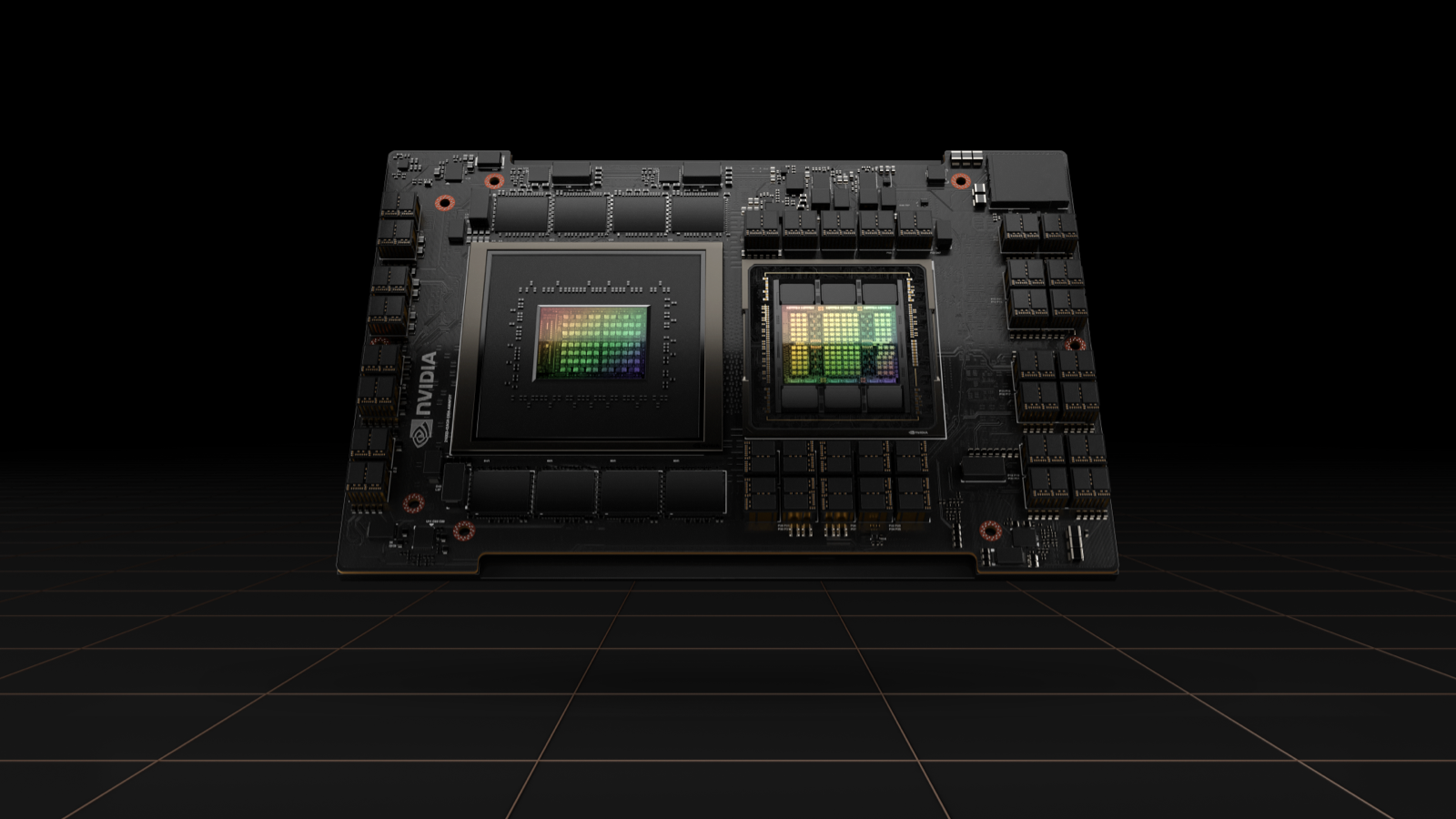

Simplifying GPU Programming for HPC with NVIDIA Grace Hopper Superchip

The new hardware developments in NVIDIA Grace Hopper Superchip systems enable some dramatic changes to the way developers approach GPU programming. Most...

17 MIN READ

Simulation / Modeling / Design

Jun 12, 2022

Using Fortran Standard Parallel Programming for GPU Acceleration

Standard languages have begun adding features that compilers can use for accelerated GPU and CPU parallel programming, for instance, do concurrent loops and...

12 MIN READ

Simulation / Modeling / Design

Apr 18, 2022

Multi-GPU Programming with Standard Parallel C++, Part 2

It may seem natural to expect that the performance of your CPU-to-GPU port will range below that of a dedicated HPC code. After all, you are limited by the...

13 MIN READ

Simulation / Modeling / Design

Apr 18, 2022

Multi-GPU Programming with Standard Parallel C++, Part 1

The difficulty of porting an application to GPUs varies from one case to another. In the best-case scenario, you can accelerate critical code sections by...

17 MIN READ

Data Center / Cloud

Jan 12, 2022

Developing Accelerated Code with Standard Language Parallelism

The NVIDIA platform is the most mature and complete platform for accelerated computing. In this post, I address the simplest, most productive, and most portable...

12 MIN READ