This week’s Spotlight is on Dr. Debbie Bard, a cosmologist at the Kavli Institute for Particle Astrophysics and Cosmology (KIPAC).

This week’s Spotlight is on Dr. Debbie Bard, a cosmologist at the Kavli Institute for Particle Astrophysics and Cosmology (KIPAC).

KIPAC members work in the Physics and Applied Physics Departments at Stanford University and at the SLAC National Accelerator Laboratory.

To handle the massive amounts of data involved in cosmological measurements, Debbie and her colleagues Matt Bellis (now an assistant professor at Siena College) and Mark Allen (now a data scientist at Chegg) teamed up to explore the potential of GPU computing and CUDA.

They concluded that “GPUs are a useful tool for cosmological calculations, allowing calculations to be made one or two orders of magnitude faster.” Their results were presented in a paper titled Cosmological Calculations on the GPU, which appeared earlier this year in Astronomy and Computing.

NVIDIA: Debbie, tell us a bit about yourself.

Debbie: I used to work in particle physics, but for the past three and a half years I’ve been studying cosmology. On the surface, the two fields are very different – one studies subatomic particles, the other the structure of the entire universe – but they’re linked by the very profound questions they ask about the nature of our universe. I’m interested in understanding the structure and evolution of the universe to learn something about Dark Energy, the mysterious force that’s driving the accelerated expansion of the universe.

NVIDIA: What are you primarily focused on now?

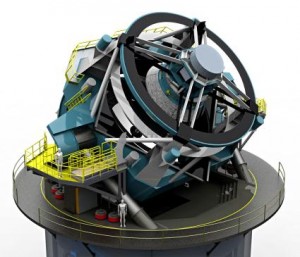

Debbie: I work on the Large Synoptic Survey Telescope (LSST). This is a telescope that will image the entire sky every three nights, and over the length of the ten year survey will build up an unprecedented amount of data on the universe. LSST is not yet built, but is scheduled to start collecting data in eight years or so.

NVIDIA: What are some of the challenges?

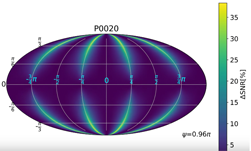

Debbie: LSST will image tens of billions of galaxies, which is great for people like me who want to exploit that dataset, but it also poses a real challenge. The statistics we use to describe structure of matter in the universe tend to be correlation functions, which are very inefficient to calculate (the calculation time scales with the number of data points squared!).

Approximation functions such as tree codes can be very useful, but they inevitably introduce uncertainties and potential systematic errors which ultimately limits the accuracy of our measurements. We will have such large volumes of data (and therefore such great statistical accuracy) that even small sources of bias and uncertainty will make a real difference to our results. The challenge is therefore to find a way to calculate these statistics to full precision in a reasonable time frame, which is where GPUS come in handy.

LSST will produce so much data that astronomers simply will not be able to do science in the way they have done before. The algorithms and data analysis techniques that have worked so far will simply not scale to a dataset of tens of billions of astronomical objects, each observed hundreds of times over the ten year LSST survey.

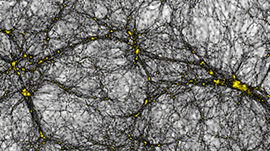

We need to start now to develop data analysis pipelines and algorithms that will work with LSST data, so that we’re ready for when the telescope starts taking data. A large part of that involves running simulations of the sky, and of the telescope, so that we have appropriate data to test our algorithms with.

NVIDIA: What role does GPU computing play in your work?

Debbie: I use GPUs to make the full and exact calculation of statistics that describe the structure of matter in the universe. We need to compare what we see in our data to theoretical predictions – if a theory matches the data well then there’s a good chance it gives an accurate description of the universe.

However, to make this comparison we need to make simulated datasets based on the theory, and compare the statistics of these simulations to our data. So we need to calculate the relevant statistics over many simulations, as well as on data. Without GPUs, I would not be able to do this within a reasonable time frame. I’ve been able to use GPUs to calculate my statistics in a couple of minutes, whereas it would take hours on the CPU.

We write a lot of histogramming in shared memory, so we use a lot of atomic addition. With large volumes of data, we take advantage of streaming functionality on the GPU by chunking our data into subsets, and streaming the data transfer and calculation of these chunks in parallel.

NVIDIA: Describe your hardware/software system.

Debbie: The GPUs are mounted in four Supermicro 6016GT-TF-TM2 1U rack mount servers. Each server uses Dual Intel Xeon E5540 2.5GHz 4-core CPUs, 48GB of RAM running RHEL 6.4, with one NVIDIA Tesla M2070 GPU. My laptop is a trusty old MacBook Pro – running Mac OS X v10.6.3 with GeForce 320M and 48 CUDA cores.

We are currently adding a Tesla K40 to the system. During the holidays, I’m looking forward to designing a new algorithm that will take advantage of the kernels-launching-kernels capability (dynamic parallelism).

NVIDIA: What’s the “next big thing” in cosmology research?

Debbie: The next big question we’re trying to answer is really this mystery of the Dark Universe. We think 96% of the content of the universe is made up of Dark Matter and Dark Energy, and we don’t really know what these things are. This is both a challenge and an opportunity, and it’s the biggest question in science today.

In terms of data, the next big thing is the Dark Energy Survey (DES), which has just gotten started. The measurements DES will make of galaxies will allow us to start to understand the statistics that describe the structure of the universe. LSST will start taking data in about eight years, and that will give us another order of magnitude more data. In the next decade we’re really going to start to constrain our theories of what the universe is made of, and how it’s evolved. Provided, of course, that we can process the enormous quantity of complex data these surveys will provide.

NVIDIA: How did you become interested in cosmology?

Debbie: I’ve always been fascinated by the most fundamental questions in science – what is everything made of? Why is the universe the way it is? How did it start, how did it evolve? These question border on the philosophical, but cosmology allows us to make a scientific description. Plus, space is pretty cool!

Read more GPU Computing Spotlights.