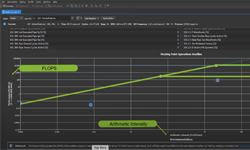

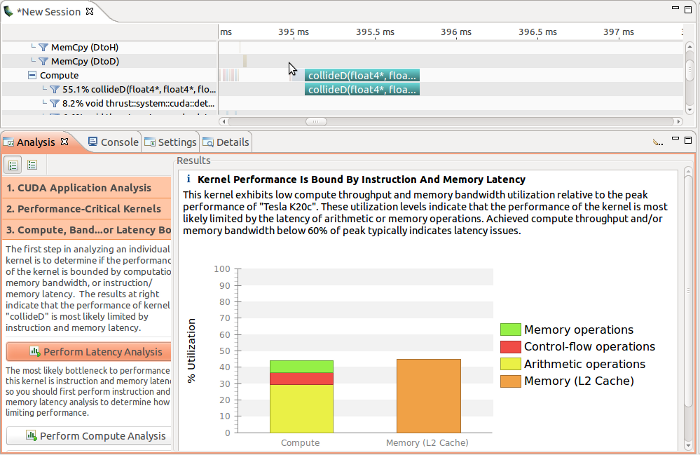

NVIDIA’s profiling and tracing tools, including the NVIDIA Visual Profiler, the Nsight Eclipse and Visual Studio editions, cuda-memcheck, and the nvprof command-line profiler, can give you deep insight into the performance and correctness of your GPU-accelerated applications. These tools gather data while your application is running and use it to create profiles, application API traces, automatic optimization guidance, and, in the case of cuda-memcheck, memory leak and race checking.

To improve tracing performance and reduce overhead in the target application, these tools buffer the data they gather internally and flush it to disk at various points, including stream synchronization, context synchronization, context destruction, and when the internal buffer is full. For technical reasons, it is not always possible to flush the data automatically on application exit. You should therefore clean up your application’s CUDA objects properly so that the profiler can store all the data it has gathered. This means not only freeing memory allocated on the GPU, but also resetting the device context.

If your application uses the CUDA Runtime API, call cudaDeviceReset() just before exiting, or when the application finishes making CUDA calls and using device data. If your application uses the CUDA Driver API, call cuProfilerStop() on each context to flush the profiling buffers before destroying the context with cuCtxDestroy().
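For the Runtime API case, the clean-up step might look like the following minimal sketch. The `scale` kernel, the `CHECK_CUDA` macro, and the buffer size are hypothetical and exist only for illustration; the one call that matters here is `cudaDeviceReset()` as the last CUDA call before the program exits.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Hypothetical helper: abort with a message if a CUDA runtime call fails.
#define CHECK_CUDA(call)                                            \
    do {                                                            \
        cudaError_t err_ = (call);                                  \
        if (err_ != cudaSuccess) {                                  \
            std::fprintf(stderr, "CUDA error at %s:%d: %s\n",       \
                         __FILE__, __LINE__,                        \
                         cudaGetErrorString(err_));                 \
            std::exit(EXIT_FAILURE);                                \
        }                                                           \
    } while (0)

// Hypothetical kernel, present only so the profiler has work to record.
__global__ void scale(float *data, float factor, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        data[i] *= factor;
    }
}

int main()
{
    const int n = 1 << 20;
    float *d_data = nullptr;

    CHECK_CUDA(cudaMalloc(&d_data, n * sizeof(float)));
    CHECK_CUDA(cudaMemset(d_data, 0, n * sizeof(float)));

    const int threads = 256;
    const int blocks = (n + threads - 1) / threads;
    scale<<<blocks, threads>>>(d_data, 2.0f, n);
    CHECK_CUDA(cudaGetLastError());       // catch launch errors
    CHECK_CUDA(cudaDeviceSynchronize());  // wait for the kernel to finish

    // Free device memory first...
    CHECK_CUDA(cudaFree(d_data));

    // ...then reset the device so the profiling tools can flush
    // their buffered data before the process exits.
    CHECK_CUDA(cudaDeviceReset());
    return 0;
}
```

Assuming the file is saved as `cleanup.cu` (a placeholder name), it can be built with `nvcc cleanup.cu -o cleanup` and profiled with `nvprof ./cleanup`. Note that the error path in this sketch exits without resetting the device, so a failed run may still produce an incomplete trace.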

Without resetting the device, applications that don’t synchronize before they exit may produce incomplete profile traces. With this simple clean-up step, you can be sure you get an accurate profile.