MATLAB

Sep 05, 2023

Webinar: Build Realistic Robot Simulations with NVIDIA Isaac Sim and MATLAB

On Sept. 12, learn about the connection between MATLAB and NVIDIA Isaac Sim through ROS.

1 MIN READ

Feb 25, 2021

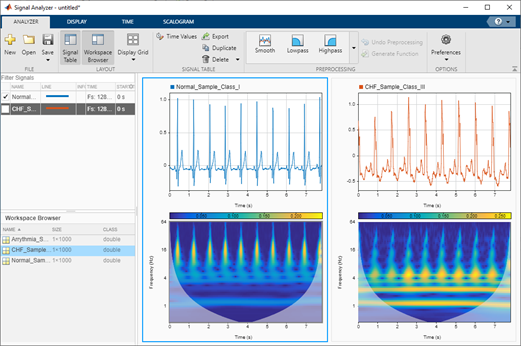

Developing AI-Powered Digital Health Applications Using NVIDIA Jetson

Traditional healthcare systems have large amounts of patient data in the form of physiological signals, medical records, provider notes, and comments. The...

17 MIN READ

Sep 30, 2019

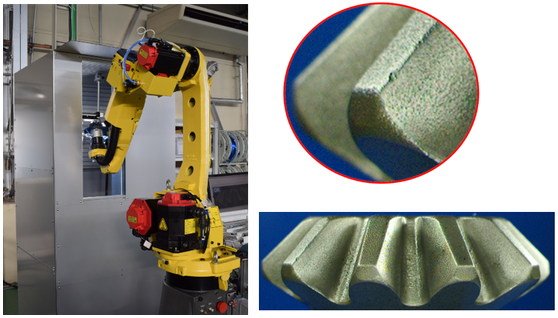

Rapid Prototyping on NVIDIA Jetson Platforms with MATLAB

This blog discusses how an application developer can prototype and deploy deep learning algorithms on hardware like the NVIDIA Jetson Nano Developer Kit with...

11 MIN READ

Mar 13, 2019

Speeding Up Semantic Segmentation Using MATLAB Container from NVIDIA NGC

Gone are the days of using a single GPU to train a deep learning model. With computationally intensive algorithms such as semantic segmentation, a single GPU...

8 MIN READ

Oct 08, 2018

Using MATLAB and TensorRT on NVIDIA GPUs

As we design deep learning networks, how can we quickly prototype the complete algorithm—including pre- and postprocessing logic around deep neural networks...

16 MIN READ

Jul 20, 2017

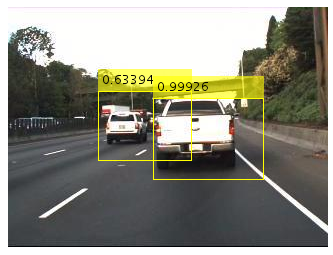

Deep Learning for Automated Driving with MATLAB

You’ve probably seen...

13 MIN READ

Oct 27, 2015

CUDA Helps See People Through Walls

Researchers at MIT's Computer Science and Artificial Intelligence Lab have developed software that uses variations in Wi-Fi signals to recognize human...

2 MIN READ

Oct 26, 2015

Deep Learning for Computer Vision with MATLAB and cuDNN

Deep learning is becoming ubiquitous. With recent advancements in deep learning algorithms and GPU technology, we are able to solve problems once considered...

12 MIN READ

Aug 17, 2015

High-Performance MATLAB with GPU Acceleration

In this post, I will discuss techniques you can use to maximize the performance of your GPU-accelerated MATLAB® code. First I explain how to write MATLAB code...

16 MIN READ

May 12, 2015

Deep Learning for Image Understanding in Planetary Science

Went from training 700 img/s in MNIST to 1500 img/s (using CUDA) to 4000 img/s (using cuDNN) that is just freaking amazing! @GPUComputing — Leon Palafox...

11 MIN READ

Jul 29, 2014

Calling CUDA-accelerated Libraries from MATLAB: A Computer Vision Example

In an earlier post we showed how MATLAB® can support CUDA kernel prototyping and development by providing an environment for quick evaluation and...

16 MIN READ

Sep 11, 2013

CUDA Spotlight: GPU-Accelerated Neuroscience

This week's Spotlight is on Dr. Adam Gazzaley of UC San Francisco, where he is the founding director of the Neuroscience Imaging Center and an Associate...

9 MIN READ

Jul 15, 2013

Prototyping Algorithms and Testing CUDA Kernels in MATLAB

This guest post by Daniel Armyr and Dan Doherty from MathWorks describes how you can use MATLAB to support your development of CUDA C and C++ kernels. You...

11 MIN READ