V100

Oct 05, 2023

Just Released: NVIDIA HPC SDK 23.9

The NVIDIA HPC SDK 23.9 release expands platform support and includes minor updates.

1 MIN READ

Oct 04, 2023

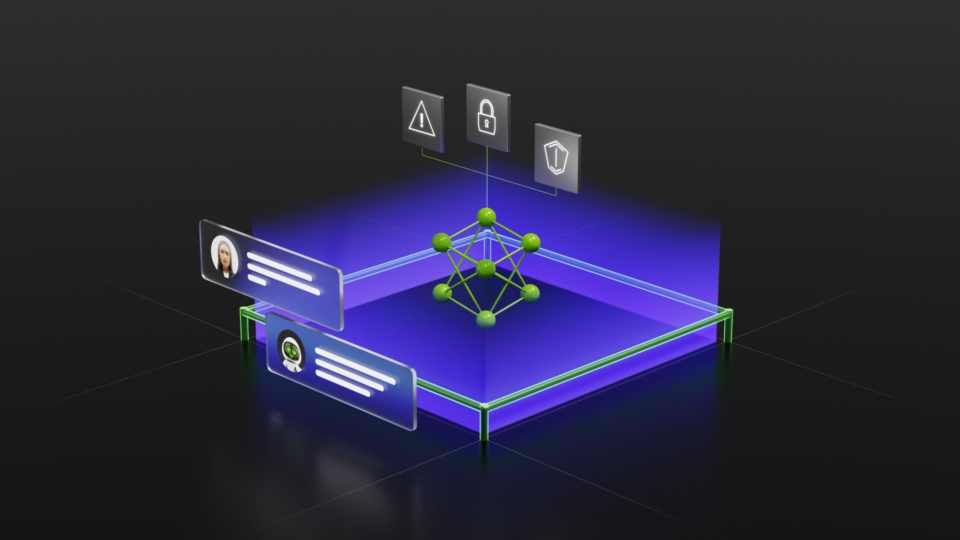

Analyzing the Security of Machine Learning Research Code

The NVIDIA AI Red Team is focused on scaling secure development practices across the data, science, and AI ecosystems. We participate in open-source security...

12 MIN READ

Sep 06, 2023

GPUs for ETL? Optimizing ETL Architecture for Apache Spark SQL Operations

Extract-transform-load (ETL) operations with GPUs using the NVIDIA RAPIDS Accelerator for Apache Spark running on large-scale data can produce both cost savings...

8 MIN READ

Aug 03, 2023

Securing LLM Systems Against Prompt Injection

Prompt injection is a new attack technique specific to large language models (LLMs) that enables attackers to manipulate the output of the LLM. This attack is...

15 MIN READ

Jun 22, 2023

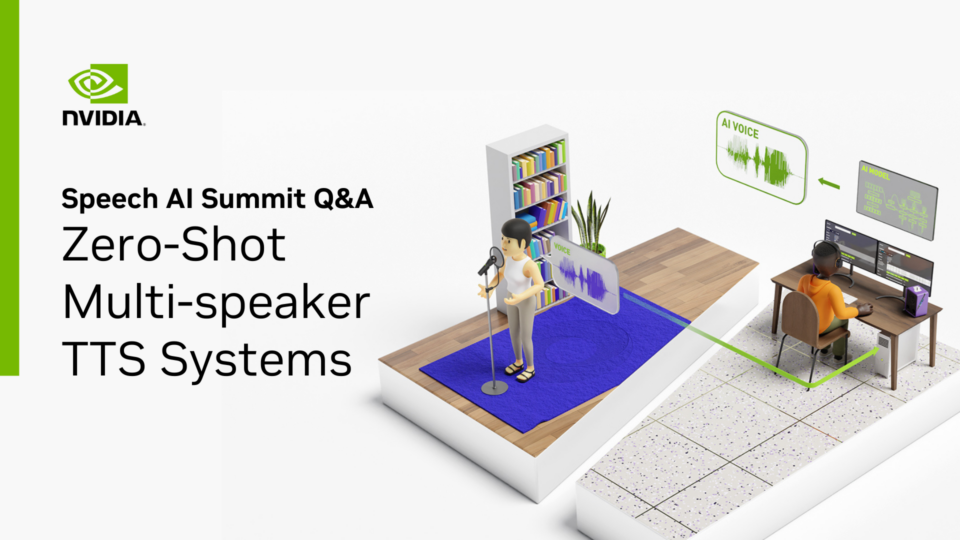

Overview of Zero-Shot Multi-Speaker TTS Systems: Top Q&As

The Speech AI Summit is an annual conference that brings together experts in the field of AI and speech technology to discuss the latest industry trends and...

6 MIN READ

Jun 07, 2023

Predicting Credit Defaults Using Time-Series Models with Recursive Neural Networks and XGBoost

Today’s machine learning (ML) solutions are complex and rarely use just a single model. Training models effectively requires large, diverse datasets that may...

12 MIN READ

May 17, 2023

Webinar: Performant Multiphase Flow Simulation at Leadership-Class Scale

On June 6, learn how researchers use OpenACC for GPU acceleration of multiphase and compressible flow solvers that obtain speedups at scale.

1 MIN READ

Apr 25, 2023

Increasing Inference Acceleration of KoGPT with NVIDIA FasterTransformer

Transformers are one of the most influential AI model architectures today and are shaping the direction of future AI R&D. First invented as a tool for...

6 MIN READ

Mar 15, 2023

How to Create a Custom Language Model

Generative AI has captured the attention and imagination of the public over the past couple of years. From a given natural language prompt, these generative...

12 MIN READ

Jun 24, 2020

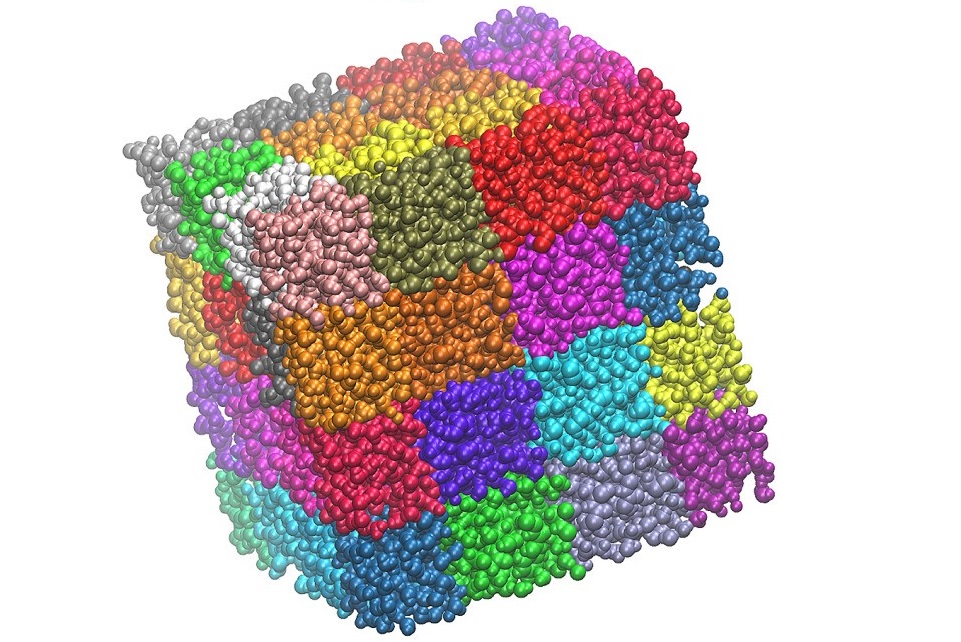

Delivering up to 9X the Throughput with NAMD v3 and NVIDIA A100 GPU

NAMD, a widely used parallel molecular dynamics simulation engine, was one of the first CUDA-accelerated applications. Throughout the early evolution of...

14 MIN READ

Feb 19, 2020

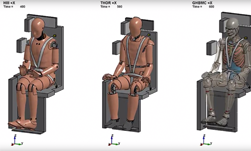

Head Injury Study Validates NASA Safety Testing

Designing safety restraints for automobiles is a challenging endeavor. Now imagine doing the same for a NASA spacecraft. Unlike a vehicle, impacts can come from...

2 MIN READ

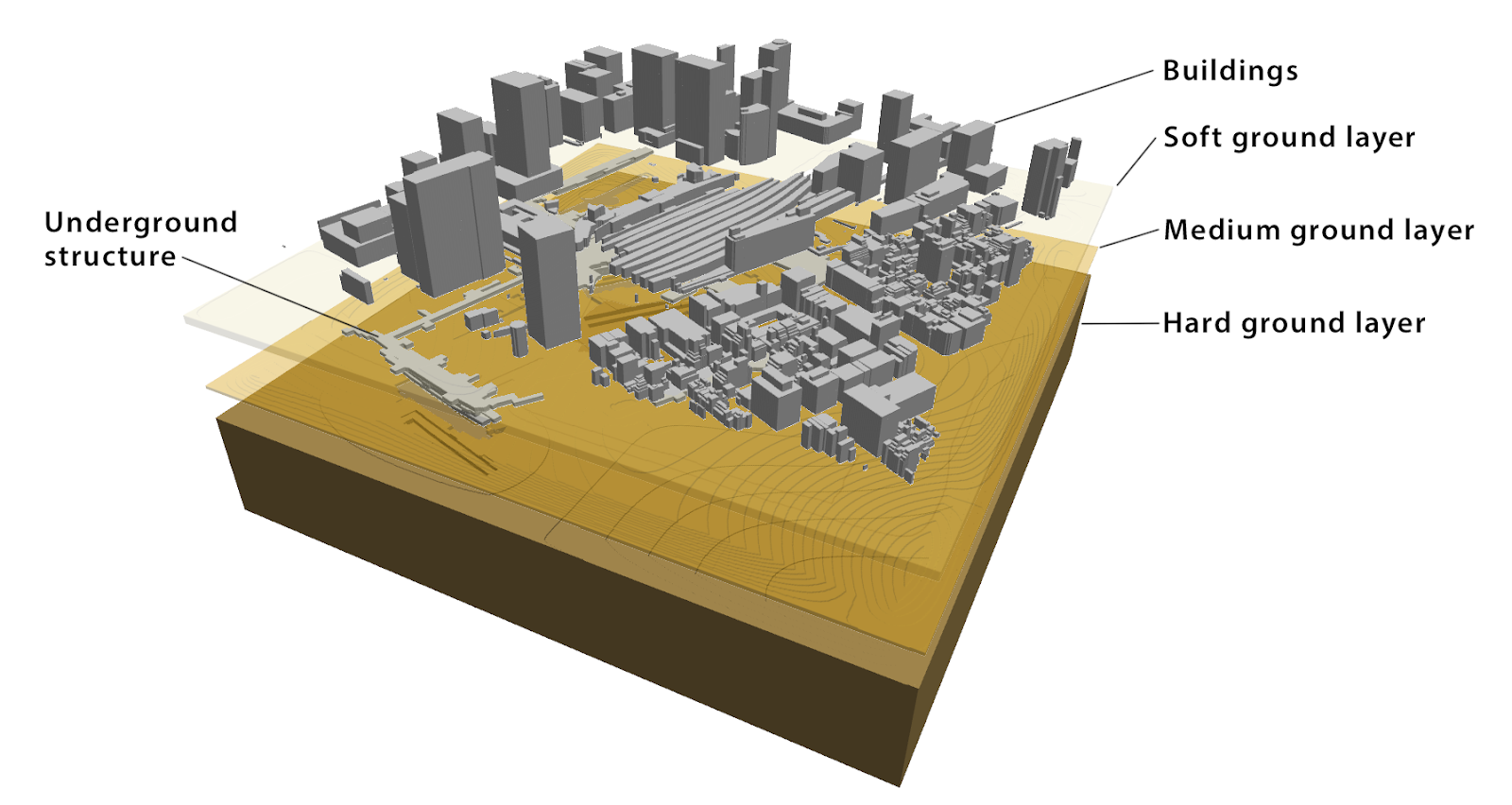

Mar 13, 2019

Speeding Up Semantic Segmentation Using MATLAB Container from NVIDIA NGC

Gone are the days of using a single GPU to train a deep learning model. With computationally intensive algorithms such as semantic segmentation, a single GPU...

8 MIN READ

Jan 23, 2019

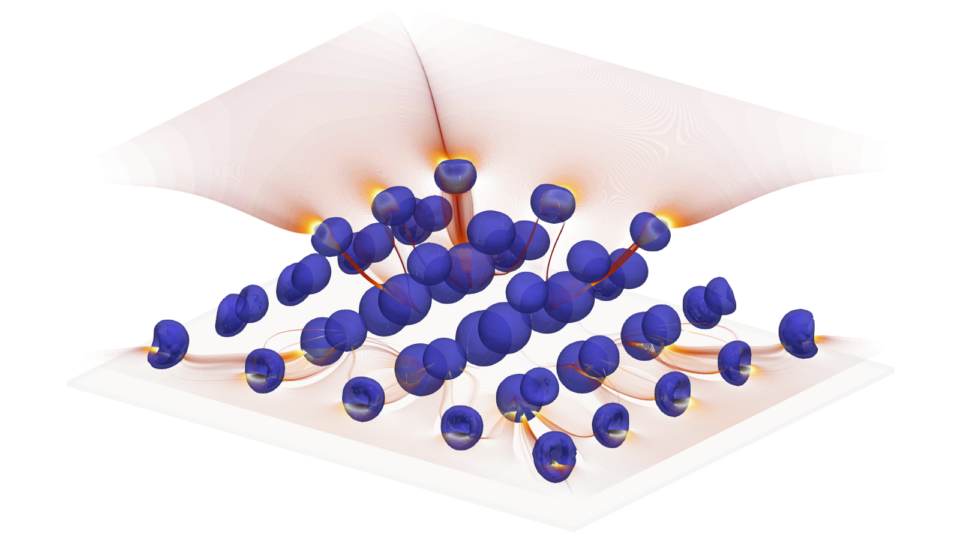

Using Tensor Cores for Mixed-Precision Scientific Computing

Double-precision floating point (FP64) has been the de facto standard for doing scientific simulation for several decades. Most numerical methods used in...

9 MIN READ

Dec 12, 2018

NVIDIA Captures Top Spots on World’s First Industry-Wide AI Benchmark

Today, the MLPerf consortium published its first results for the seven tests that currently comprise this new industry-standard benchmark for machine learning....

10 MIN READ

Jul 16, 2018

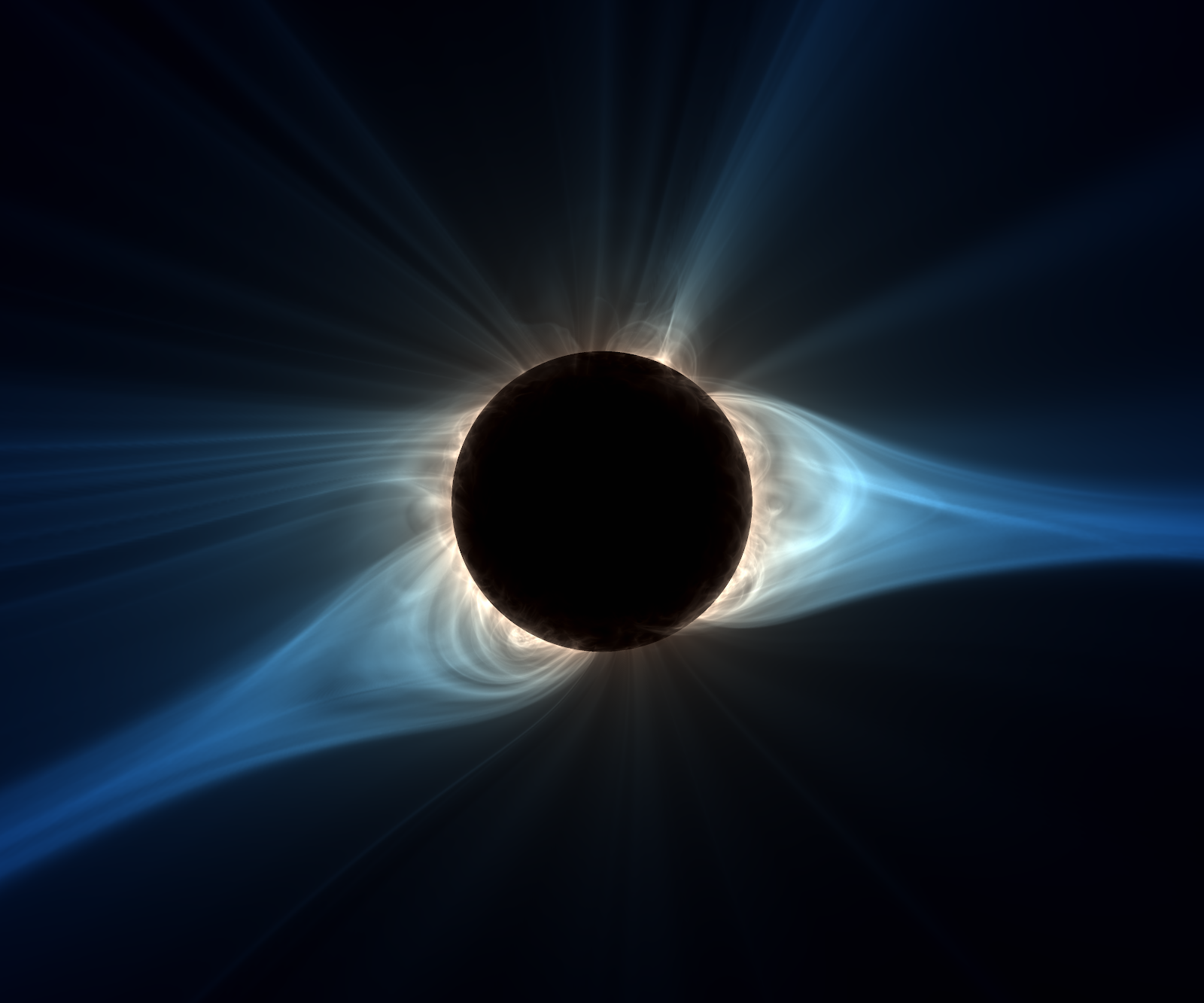

Using OpenACC to Port Solar Storm Modeling Code to GPUs

Solar storms consist of massive explosions on the Sun that can release the energy of over 2 billion megatons of TNT in the form of solar flares and Coronal Mass...

39 MIN READ

May 29, 2018

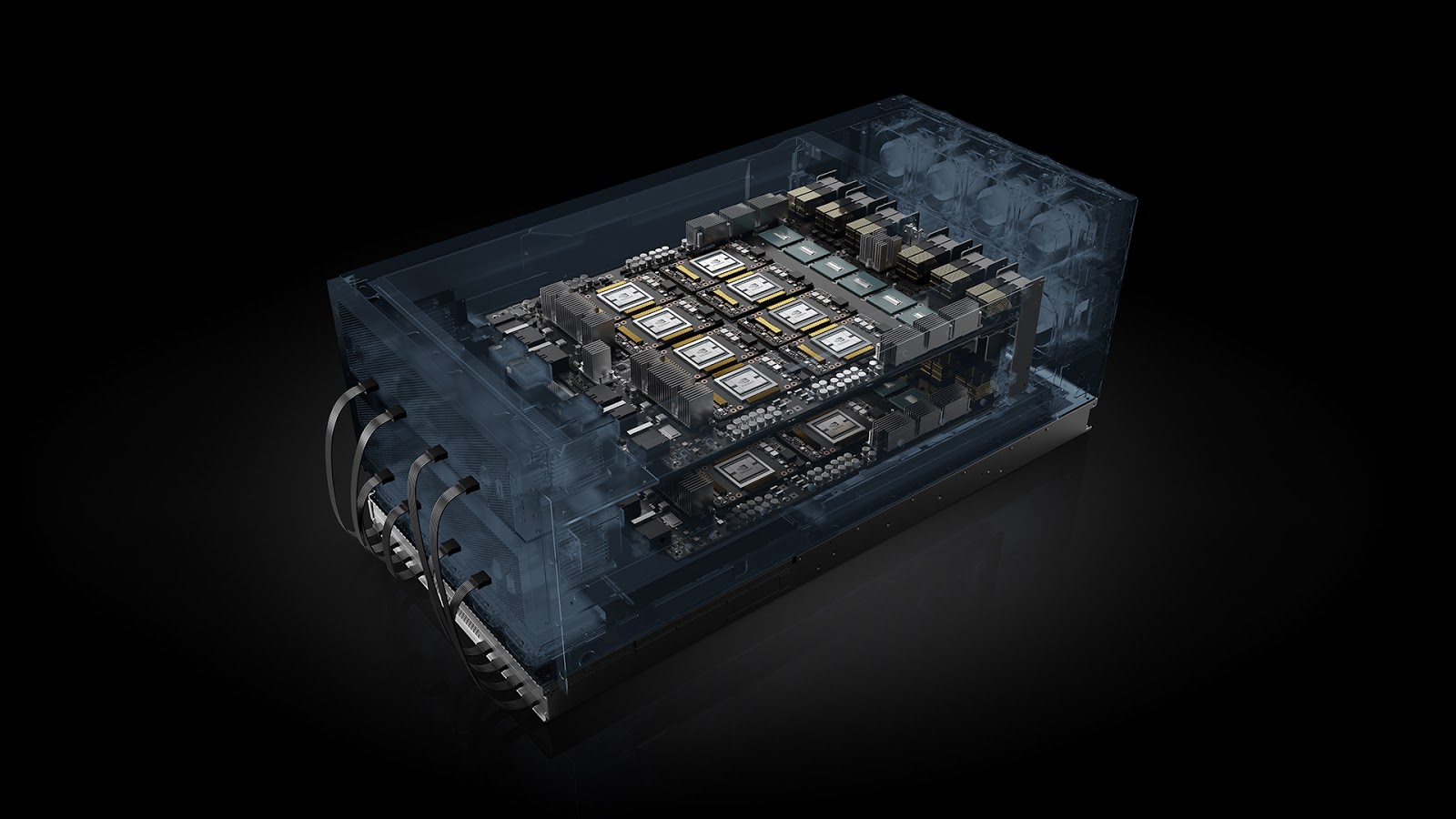

HGX-2 Fuses HPC and AI Computing Architectures

The continuing explosive growth of AI model size and complexity means the appetite for more powerful compute solutions continues to accelerate rapidly. NVIDIA...

9 MIN READ