NVIDIA Nsight Eclipse Edition is a full-featured, integrated development environment that lets you easily develop CUDA applications for either your local (x86) system or a remote (x86 or Arm) target. In this post, I will walk you through the process of remote-developing CUDA applications for the NVIDIA Jetson TX2, an Arm-based development kit. Note that this how-to also applies to Jetson TX1 and Jetson TK1 (this post is an update to an older post aimed at Jetson TK1).

Nsight Eclipse Edition supports two remote development modes: cross-compilation and “synchronize projects” mode. Cross-compiling for Arm on your x86 host system requires that all of the Arm libraries with which you will link your application be present on your host system. In synchronize-projects mode, on the other hand, your source code is synchronized between host and target systems and compiled and linked directly on the remote target, which has the advantage that all your libraries get resolved on the target system and need not be present on the host. Neither of these remote development modes requires an NVIDIA GPU to be present in your host system.

Note: CUDA cross-compilation tools for Arm are available in the Ubuntu installer packages of the CUDA Toolkit. If your host system is running a Linux distribution other than Ubuntu, I recommend the synchronize-projects remote development mode.

CUDA Toolkit Setup

The first step involved in cross-compilation is installing the CUDA Toolkit on your host system. You need to install the CUDA 8.0 Toolkit for Jetson TX2 or TX1, or the 6.5 Toolkit for Jetson TK1. You can download the CUDA Toolkit installer for Ubuntu from the CUDA Downloads page, or using Jetpack L4T.

I recommend that you use use Jetpack L4T to install the same version of the CUDA toolkit for both the host and target systems. Once the toolkits are installed on both host and target, download the required Ubuntu DEB package from the CUDA download page to do the cross-platform setup. You can find installation instructions in the CUDA Installation Guide. I will summarize them below.

CUDA Cross-Platform Environment Setup

First let’s get your host system set up for cross-platform CUDA development. The following target architectures are supported for cross compilation.

x86_64:64-bit x86 CPU architecture;armhf:32-bit Arm CPU architecture, as found on Jetson TK1;aarch64: 64-bit Arm CPU architecture, found on Jetson TX1 and TX2 and certain Android systems;ppc64le:64-bit little-endian IBM POWER8 architecture

- Enable the foreign architecture. The foreign architecture must be enabled to install the cross-platform toolkit. To enable

armhf,arm64 (aarch64), or ppc64leas a foreign architecture, execute the following commands.

# For Jetson TX1/TX2: $ sudo dpkg --add-architecture arm64 $ sudo apt-get update # For Jetson TK1: $ sudo dpkg --add-architecture arm64 $ sudo dpkg --add-architecture armhf $ sudo apt-get update

- Run dpkg to install and update the repo meta-data:

For Jetson TX1/TX2, use CUDA 8:

$ sudo dpkg -i cuda-repo-ubuntu1404-8-0-local-ga2_8.0.61-1_amd64.deb $ sudo apt-get update

For Jetson TK1, use CUDA 6.5:

$ sudo dpkg – i cuda-repo-ubuntu1404_6.5-37_amd64.deb $ sudo apt-get update

- Install cuda cross-platform packages. On supported platforms, the cuda-cross-armhf, cuda-cross-aarch64, and cuda-cross-ppc64el packages install all the packages required for cross-platform development to ARMv7, ARMv8, and POWER8, respectively. The libraries and header files of the target architecture’s display driver package are also installed to enable the cross compilation of driver applications.

$ sudo apt-get install cuda-cross-<arch>

For Jetson TK1:

$ sudo apt-get install g++-4.6-arm-linux-gnueabihf

For Jetson TX1/TX2:

$ sudo apt-get install g++-4.8-aarch64-linux-gnu

- OPTIONAL – if you also wish to do native x86 CUDA development and have an NVIDIA GPU in your host system then you can install the full toolchain and driver:

$ sudo apt-get install cuda

Reboot your system if you installed the driver so that the NVIDIA driver gets loaded. Then update paths to the toolkit install location as follows based on the CUDA Toolkit version installed:

$ export PATH=/usr/local/cuda-8.0/bin:$PATH $ export LD_LIBRARY_PATH=/usr/local/cuda-8.0/lib64:$LD_LIBRARY_PATH

At the end of these steps you should see aarch64-linux or armv7-linux-gnueabihf and the optional x86_64_linux folder under /usr/local/cuda-8.0/targets/.

For your cross-development needs, Jetson comes prepopulated with Linux for Tegra (L4T), a modified Ubuntu Linux distribution provided by NVIDIA. NVIDIA provides the board support package and a software stack that includes the CUDA Toolkit and OpenGL drivers. You can download all of these, as well as examples and documentation, from the JetPack page.

Importing a CUDA Sample into Nsight Eclipse Edition

With the CUDA Toolkit installed and the paths setup on the host system, launch Nsight by typing “nsight” (without the quotes) at the command line or by finding the Nsight icon in the Ubuntu dashboard. Once Nsight is loaded, navigate to “File->New->CUDA C/C++ Project” and import an existing CUDA sample to start the Project Creation wizard. For the project name, enter “boxfilter-arm” and select “Import CUDA Sample” in the project type and and select the supported “CUDA Toolkit” in the toolchain. Next, choose the Boxfilter sample which can be found under the Imaging category.

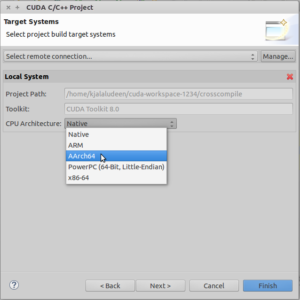

The remaining options in the wizard let you choose which GPU and CPU architectures to generate code for. First, choose the GPU code that should be generated by the nvcc compiler.

- For Jetson TK1 (NVIDIA Kepler GPU), choose 3.x GPU code and 3.x PTX code.

- For Jetson TX1 (NVIDIA Maxwell GPU), choose 5.x GPU code and 5.x PTX code.

- For Jetson TX2 (NVIDIA Pascal GPU), choose 6.x GPU code and 6.x PTX code.

The next page in the wizard lets you decide if you wish to do native x86 development or cross-compile for an Arm system. Choose “AArch64” for Jetson TX1/TX2 and “ARM” architecture for TK1 in the CPU architecture drop-down box.

Building Your Jetson Application with Nsight Eclipse Edition

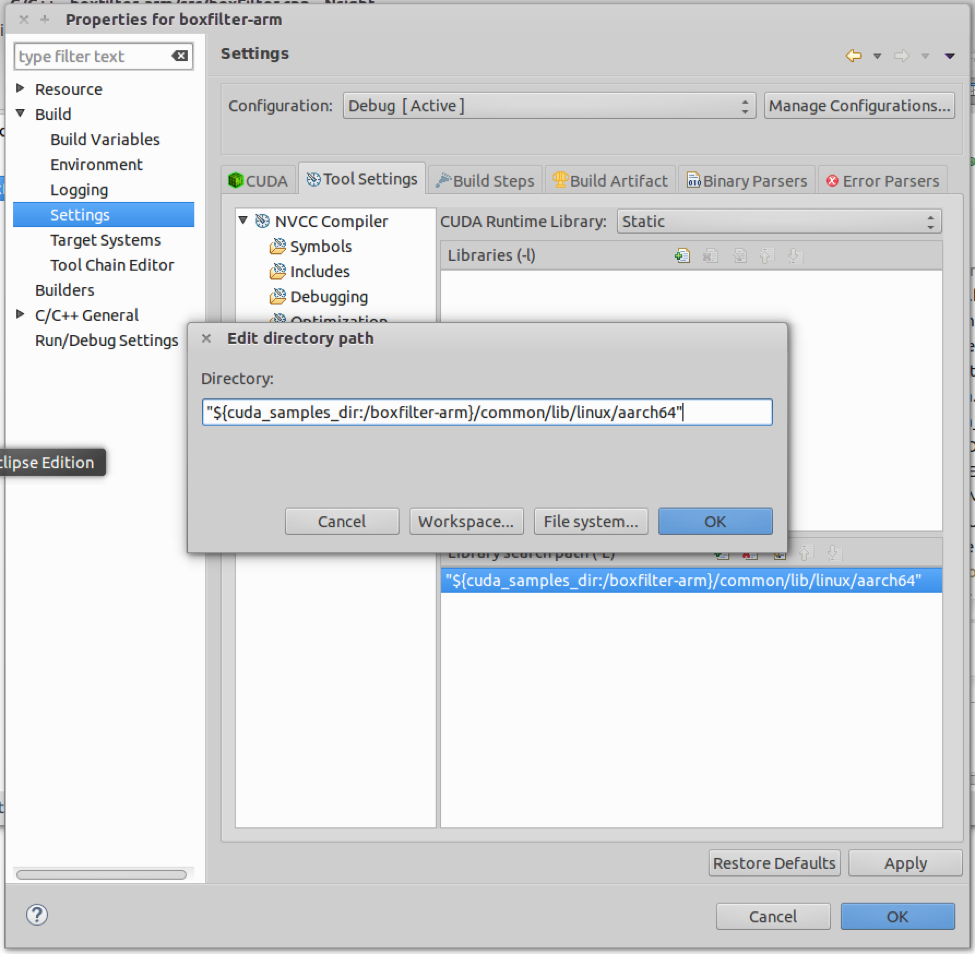

CUDA samples can be imported and run on various hardware configurations. For this cross-build exercise you need to resolve the Arm library dependencies used by this application. Here’s how.

- Right click on the project and navigate to Properties->Build->Settings->Tool Settings->NVCC Linker->Libraries and edit and enter the “Library search path(-L)” to point to

linux/aarch64(for TX1/TX2) orlinux/armv7l(for TK1) instead oflinux/x86_64. This will resolve thelibGLEWlibrary dependencies. Also remove the entry forGLUif present since that library is unused (only for Nsight v6.5).

- Click on the Miscellaneous tab and add a new

-Xlinkeroption —unresolved-symbols=ignore-in-shared-libs. Also select the option “Link with OpenGL libraries” if present. - In the terminal window use the scp utility to copy the remaining libraries.

For Jetson TK1:

$ sudo scp ubuntu@your.ip.address:/usr/lib/arm-linux-gnueabihf/libglut.so.3 /usr/arm-linux-gnueabihf/lib $ sudo scp ubuntu@your.ip.address:/usr/lib/arm-linux-gnueabihf/tegra/libGL.so.1 /usr/arm-linux/gnueabihf/lib $ sudo scp ubuntu@your.ip.address:/usr/lib/arm-linux-gnueabihf/libX11.so.6 /usr/arm-linux-gnueabihf/lib $ sudo ln -s /usr/arm-linux-gnueabihf/lib/libglut.so.3 /usr/arm-linux-gnueabihf/lib/libglut.so $ sudo ln -s /usr/arm-linux-gnueabihf/lib/libgl.so.1 /usr/arm-linux-gnueabihf/lib/libgl.so $ sudo ln -s /usr/arm-linux-gnueabihf/lib/libX11.so.6 /usr/arm-linux-gnueabihf/lib/libX11.so

For Jetson TX1/TX2:

$ sudo scp ubuntu@your.ip.address:/usr/lib/aarch64-linux-gnu/libglut.so.3 /usr/aarch64-linux-gnu/lib $ sudo scp ubuntu@your.ip.address:/usr/lib/aarch64-linux-gnu/tegra/libGL.so.1 /usr/aarch64-linux-gnu/lib $ sudo scp ubuntu@your.ip.address:/usr/lib/aarch64-linux-gnu/libX11.so.6 /usr/aarch64-linux-gnu/lib $ sudo scp ubuntu@your.ip.address:/usr/lib/aarch64-linux-gnu/libGLU.so.1 /usr/aarch64-linux-gnu/lib $ sudo ln -s /usr/aarch64-linux-gnu/lib/libglut.so.3 /usr/aarch64-linux-gnu/lib/libglut.so $ sudo ln -s /usr/aarch64-linux-gnu/lib/libGL.so.1 /usr/aarch64-linux-gnu/lib/libGL.so $ sudo ln -s /usr/aarch64-linux-gnu/lib/libX11.so.6 /usr/aarch64-linux-gnu/lib/libX11.so $ sudo ln -s /usr/aarch64-linux-gnu/lib/libGLU.so.1 /usr/aarch64-linux-gnu/lib/libGLU.so

Note: You need to copy these Arm libraries only for the first CUDA sample. You may need additional libraries for other samples.

The build process for Arm cross-development is similar to the local build process. Just click on the build “hammer” icon in the toolbar menu to build a debug Arm binary. As part of the compilation process, Nsight will launch nvcc for the GPU code and the cross-compiler for the CPU code as follows:

Building file: ../src/boxFilter_kernel.cu Invoking: NVCC Compiler /usr/local/cuda-6.0/bin/nvcc -I"/usr/local/cuda-6.0/samples/3_Imaging" -I"/usr/local/cuda-6.0/samples/common/inc" -I"/home/satish/cuda-workspace_new/boxfilter-arm" -G -g -O0 -ccbin arm-linux-gnueabihf-g++-4.6 -gencode arch=compute_30, code=sm_30 -gencode arch=compute_32,code=sm_32 --target-cpu-architecture ARM -m32 -odir "src" -M -o "src/boxFilter_kernel.d" "../src/boxFilter_kernel.cu" /usr/local/cuda-6.0/bin/nvcc --compile -G -I"/usr/local/cuda-6.0/samples/3_Imaging" -I"/usr/local/cuda-6.0/samples/common/inc" -I"/home/satish/cuda-workspace_new/boxfilter-arm" -O0 -g -gencode arch=compute_30,code=compute_30 -gencode arch=compute_32, code=sm_32 --target-cpu-architecture ARM -m32 -ccbin arm-linux-gnueabihf-g++-4.6 -x cu -o "src/boxFilter_kernel.o" "../src/boxFilter_kernel.cu" Finished building: ../src/boxFilter_kernel.cu

After the compilation steps, the linker resolves all library references, giving you a ready-to-run boxfilter-arm binary.

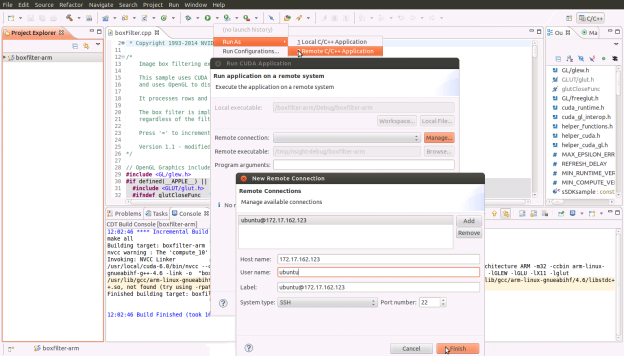

Running Your Jetson Application from Nsight Eclipse Edition

To run the code on the target Jetson system, click on Run As->Remote C/C++ Application to setup the target system user and host address.

Once you finish the remote target system configuration setup, click on the Run icon and you will see a new entry to run the boxfilter-arm binary on the Jetson.

Note: the box filter application relies on data files that reside in the data/ subfolder of the application, which you will need to copy to the target system. Use the scp utility to copy those files into the /tmp/nsight-debug/data/ folder on your Jetson.

Next, edit the boxfilter.cpp file as follows:

- To ensure that the application runs on the correct display device, add this line to the top of the main function if not already there. This is not required on CUDA toolkit 8.0 samples.

setenv(“DISPLAY”, “:0”, 0);

- Add the following lines to the top of the display function so that the app auto-terminates after a few seconds. This is required to gather deterministic execution data across multiple runs of the application, which you will need later in the profiling section:

static int icnt = 120;

while(!icnt--)

{

cudaDeviceReset();

exit(EXIT_SUCCESS);

}

3. Also comment out the call to freeTextures() in the cleanup() function since it might cause runtime error.

Click on Run to execute the modified Box Filter application on your Jetson.

Debugging Your Jetson Application in Nsight Eclipse Edition

The remote target system configuration that you set up in Nsight earlier will also be visible under the debugger icon in the toolbar.

Before you launch the debugger, note that by default Jetson doesn’t allow any application to solely occupy the GPU 100% of the time. In order to run the debugger on Jetson TK1, you need to fix this using the following command (note: this is not required on Jetson TX1/TX2).

root@tegra-ubuntu:/home/ubuntu# sudo echo N > sys/kernel/debug/gk20a.0/timeouts_enabled

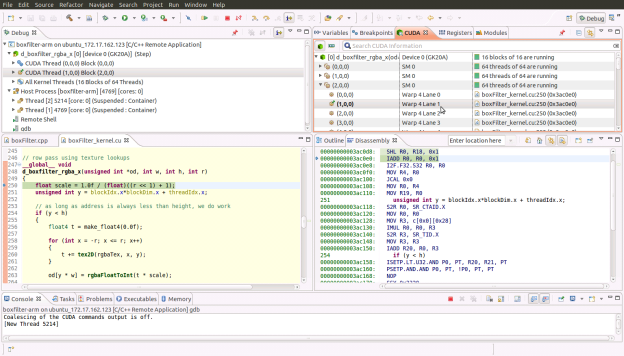

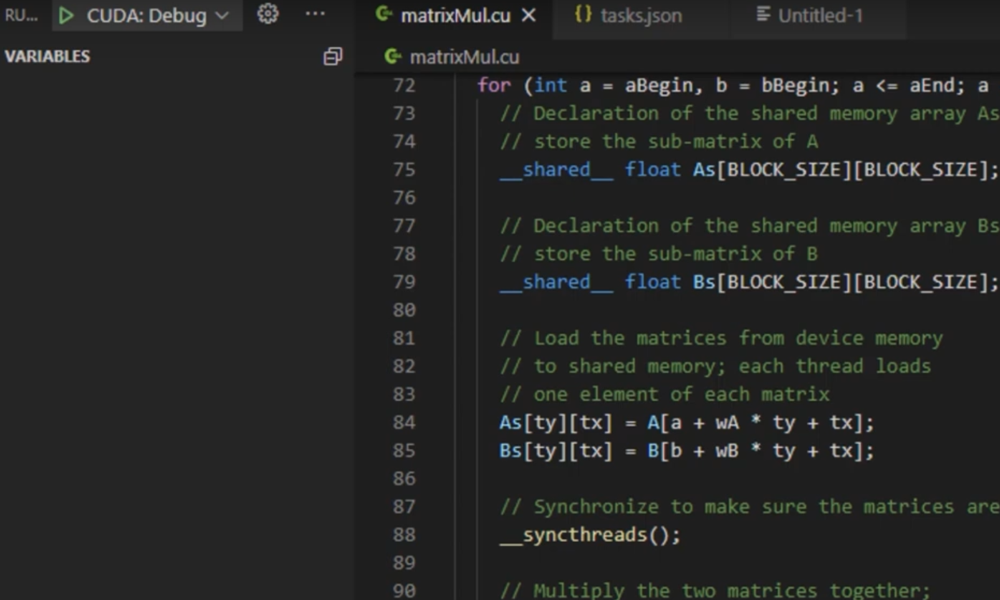

Now you can launch the debugger using the debug icon back on the host system. Nsight will switch to its debugger perspective and break on the first instruction in the CPU code. You can single-step a bit there to see the execution on the CPU and watch the variables and registers as they are updated.

To break on any CUDA kernel executing on the GPU, , go to the CUDA view in the top-right pane of Nsight and click on the cube icon dropdown. Then select the “break on application kernel launches” feature to break on the first instruction of a CUDA kernel launch. You can now resume the application, which will run until the first breakpoint is hit in the CUDA kernel. From here, you can browse the CPU and GPU call stack in the top-left pane. You can also view the variables, registers and HW state in the top-right pane. In addition, you can see that the Jetson GPU is executing 16 blocks of 64 threads each running on its Streaming Multiprocessors (SMs).

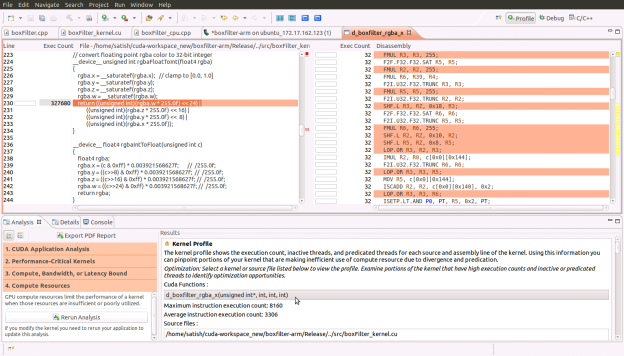

You can also switch to disassembly view and watch the register values being updated by clicking on the i-> icon in the debug view to do GPU instruction-level single-stepping.

To “pin” (focus on) specific GPU threads, double click the thread(s) of interest in the CUDA tab in the top-right pane. The pinned CUDA threads will appear in the top-left pane, allowing you to select and single-step just those threads. (Keep in mind, however, that single-stepping a given thread causes the remaining threads of the same warp to step as well, since they share a program counter.) You can experiment and watch this by pinning threads that belong to different warps.

There are more useful debug features that you will find by going into the debug configuration settings from the debug icon drop down, such as enabling cuda-memcheck and attaching to a running process (on the host system only).

To quit the application you are debugging, click the red stop button in the debugger perspective.

Profiling Your Jetson Application in Nsight Eclipse Edition

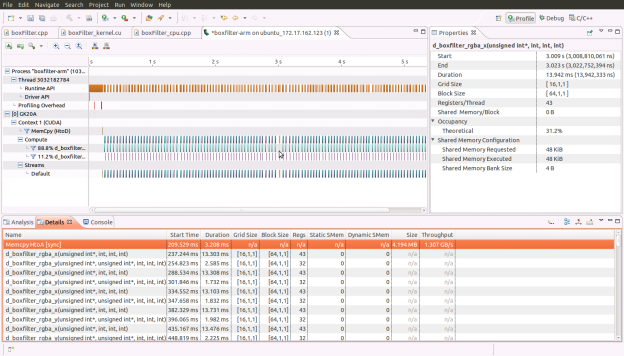

Switch back to the C++ project editor view to start the profiler run. The remote target system configuration you set up in Nsight earlier will also be visible to you under the profiler icon in the toolbar.

Before you launch the profiler, note that you need to create a release build with -lineinfo included in the compile options. This tells the compiler to generate information on source-to-instruction correlation. To do this, first go to the project settings by right-clicking on the project in the left pane. Then navigate to Properties->Build->Settings->Tool Settings->Debugging and check the box that says “Generate line-number…” and click Apply.

Back in the main window, click on the build hammer dropdown menu to create a release build. Resolve any build issues as you did during the first run above, then click on the Run As->Remote C/C++ Application to run the release build of the application. At this point Nsight will overwrite the Jetson TK1 system with the release binary you want to profile and run it once.

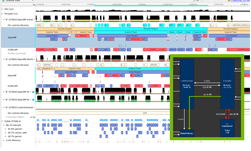

Next click on the profile icon dropdown and choose Profile Configurations where you must select “Profile Remote Application” since the binary is already on the Jetson TK1. Nsight will then switch you to the profiler perspective while it runs the application to gather an execution timeline view of all the CUDA Runtime and Driver API calls and of the kernels that executed on the GPU. The properties tab displays details of any event you select from this timeline; the details of the events can also be viewed in text form in the Details tab in the lower pane.

Below the timeline view in the lower pane, there is also an Analysis tab that is very useful for performance tuning. It guides you through a step-by-step approach on resolving performance bottlenecks in your application. You can switch between guided and unguided analysis by clicking on their icons under the Analysis tab.

You can also get a source-to-instruction correlation view, with hot spots (where the instructions-executed count was particularly high) identified in red as the figure below shows. You get this view from within the guided analysis mode by first clicking on “Examine Individual Kernels” and selecting the highest ranked (100) kernel from the list of examined kernels, then clicking “Perform Kernel Analysis” followed by “Perform Compute Analysis.” From there, clicking “Show Kernel Profile” shows the d_boxfilter_rgba_a kernel in the right pane. Double-click on the kernel name to see the source-to-instruction view. Clicking on a given line of source code highlights the corresponding GPU instructions.

As you can see, whether you are new to NVIDIA Nsight Eclipse Edition or an avid Nsight user, Nsight makes it just as easy and straightforward to create CUDA applications for the Jetson TK1 platform as for all your CUDA-enabled GPUs.

Get Started with CUDA Today

Start by downloading the CUDA Toolkit. If you are new to CUDA, check out this tutorial to get you started with CUDA programming. To learn more about Jetson TX2, NVIDIA’s latest embedded developer platform, read “NVIDIA Jetson TX2 Delivers Twice the Intelligence to the Edge“.