Went from training 700 img/s in MNIST to 1500 img/s (using CUDA) to 4000 img/s (using cuDNN) that is just freaking amazing! @GPUComputing

— Leon Palafox (@leonpalafox) March 27, 2015

I stumbled upon the above tweet by Leon Palafox, a Postdoctoral Fellow at the University of Arizona Lunar and Planetary Laboratory, and reached out to him to discuss his success with GPUs and share it with other developers interested in using deep learning for image processing.

Tell us about your research at The University of Arizona

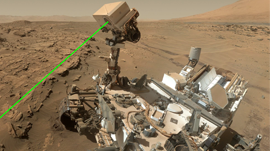

We are working on developing a tool that can automatically identify various geological processes on the surface of Mars. Examples of geological processes include impact cratering and volcanic activity; however, these processes can generate landforms that look very similar, even though they form via vastly different mechanisms. For example, small impact craters and volcanic craters can be easily confused because they can both exhibit a prominent rim surrounding a central topographic depression.

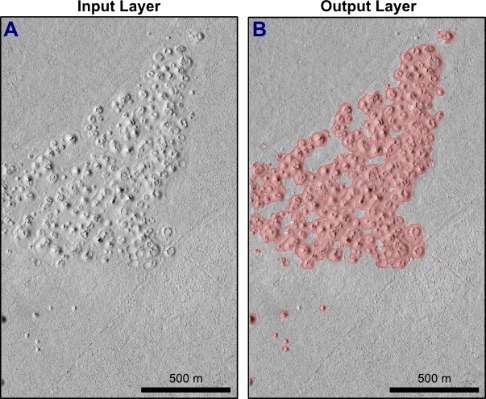

Of particular interest to our research group is the automated mapping of volcanic rootless cones as Figure 2 shows. These landforms are generated by explosive interactions between lava and ground ice, and therefore mapping the global distribution of rootless cones on Mars would contribute to a better understanding of the distribution of near-surface water on the planet. However, to do this we must first develop algorithms that can correctly distinguish between landforms of similar appearance. This is a difficult task for planetary geologists, but we are already having great success by applying state-of-the-art artificial neural networks to data acquired by the High Resolution Imaging Science Experiment (HiRISE) camera, which is onboard the Mars Reconnaissance Orbiter (MRO) satellite.

What is the status of the project?

The project is in the development phase; we expect to have it completed in one or two years, depending on the number of features that we wish to train for. Previously, most of our processing time went to running the images through the CNN, but thanks to the NVIDIA cuDNN library, we have substantially reduced that time.

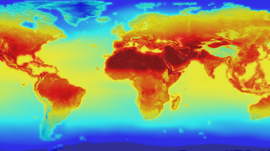

As of now, we are focusing on the identification of volcanic rootless cones and impact craters, but plan to extend our search to include other landforms like sand dunes, recurring slope lineae (thought to be formed by seasonal seeps of surface water), and cloud formations. Of particular interest are dynamic phenomena because once we have developed a robust identification algorithm we can apply it to time series satellite observations to investigate how the Martian environment changes through time. Mars provides an ideal place to develop and test such approaches, but our ultimate aim will be to apply similar techniques to study the Earth.

How are you applying the CUDA platform to this project?

We access the CUDA libraries through MATLAB, since at present most of our implementation is in MATLAB. We use the MatConvNet framework, which, like Theano and Caffe, provides a great set of tools to build and deploy your own Convolutional Neural Network (CNN) architectures. It also provides great CUDA interfaces to the cuDNN library.

We are still fine tuning some of the libraries, but in essence we use a CNN very similar to LeNet, albeit modified to work in this particular regime. We are also running five CNNs in parallel, each of which is using different pixel sizes to search for differently scaled features in the image.
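The multi-scale idea described above can be sketched in a few lines of Python with NumPy. This is only an illustration, not the project's MATLAB/MatConvNet code: the window sizes, the 28-pixel network input, the stride, and the function names are all assumptions, and "different pixel sizes" is read here as different window sizes. Each scale cuts square windows out of the image and resizes them to one common input size, so that five LeNet-style networks could each look at features of a different physical extent.

```python
import numpy as np

# Hypothetical values: the interview does not give the actual window sizes
# or the network input size.
WINDOW_SIZES = (16, 32, 64, 128, 256)
INPUT_SIZE = 28  # LeNet-style input, assumed


def extract_windows(image, window, stride):
    """Cut square windows of side `window` from a 2-D grayscale image.

    Returns the stacked patches and the (row, col) centre of each patch.
    """
    rows, cols = image.shape
    patches, centers = [], []
    for r in range(0, rows - window + 1, stride):
        for c in range(0, cols - window + 1, stride):
            patches.append(image[r:r + window, c:c + window])
            centers.append((r + window // 2, c + window // 2))
    return np.stack(patches), centers


def resize_nearest(patches, size):
    """Nearest-neighbour resize of a stack of square patches to size x size."""
    window = patches.shape[1]
    idx = np.arange(size) * window // size  # source index for each output pixel
    return patches[:, idx][:, :, idx]


def multiscale_batches(image, stride_fraction=0.5):
    """Build one batch of network-sized patches per window size."""
    batches = {}
    for window in WINDOW_SIZES:
        stride = max(1, int(window * stride_fraction))
        patches, centers = extract_windows(image, window, stride)
        batches[window] = (resize_nearest(patches, INPUT_SIZE), centers)
    return batches
```

Each entry of the returned dictionary could then be fed to the network trained for that scale; the batches are independent, which is what makes running the five networks in parallel straightforward.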

What GPUs are you using?

We have five machines and each has two NVIDIA Quadro K5000s.

Can you share some visual results?

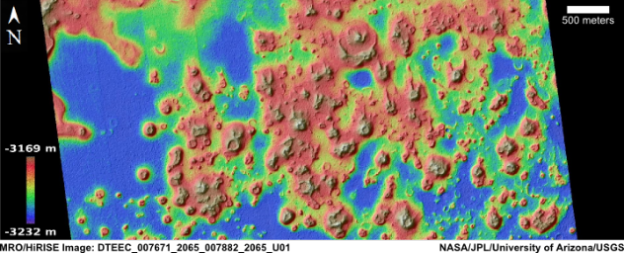

Figures 1 and 3 show the output in a region in Elysium Planitia, where we use the CNN to map the location of volcanic rootless cones. The image was processed by five CNNs looking for features at different scales, and their outputs were then pooled to generate a contour map. This process, if done on a larger scale, is an incredible tool for understanding the geologic history of Mars.
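To make the pooling step concrete, here is a minimal Python sketch of how per-scale detection scores might be combined into a single map. The interview does not say how the five outputs are pooled, so the averaging rule, the 0.5 level, and all function names below are assumptions chosen for illustration only.

```python
import numpy as np


def scores_to_map(shape, centers, scores, window):
    """Paint each window's score onto the pixels it covers, keeping the maximum."""
    score_map = np.zeros(shape, dtype=float)
    half = window // 2
    for (r, c), s in zip(centers, scores):
        r0, c0 = max(r - half, 0), max(c - half, 0)
        region = score_map[r0:r + half, c0:c + half]  # view into score_map
        np.maximum(region, s, out=region)             # in-place update
    return score_map


def pool_scales(score_maps):
    """Combine per-scale maps by averaging (an assumed pooling rule)."""
    return np.mean(np.stack(score_maps), axis=0)


def detection_mask(pooled, level=0.5):
    """Pixels at or above `level`; the mask boundary is one contour line."""
    return pooled >= level
```

The pooled array is an ordinary 2-D grid, so it could be passed to any contouring routine to draw lines at several confidence levels instead of the single threshold used here.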

What data do you use for training the CNN?

So far we have trained on 800 examples of Martian landforms selected from full-resolution HiRISE images. Each HiRISE image typically has a resolution of 0.25 m/pixel and covers a swath that is 6 km wide, resulting in file sizes up to 3.4 GB. Individual HiRISE images are spectacular, but the most impressive data products are digital terrain models generated using stereo-photogrammetry (Figure 3). This data enables us to visualize the surface of Mars in three dimensions and generate simulated illumination images that can be used to expand our natural training sets.
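As a quick back-of-the-envelope check on those numbers, a 6 km swath at 0.25 m/pixel is 24,000 pixels across. The short Python sketch below does that arithmetic and, purely as an illustration, estimates how many image rows a 3.4 GB file could hold. The one-byte-per-pixel, uncompressed, single-band layout and the decimal gigabyte are assumptions, not facts about the HiRISE file format.

```python
SWATH_WIDTH_M = 6_000    # swath width quoted in the interview
PIXEL_SCALE_M = 0.25     # metres per pixel quoted in the interview
MAX_FILE_BYTES = 3.4e9   # "3.4 GB", assuming 1 GB = 10**9 bytes


def swath_width_pixels(width_m=SWATH_WIDTH_M, scale_m=PIXEL_SCALE_M):
    """Number of pixels across the swath."""
    return round(width_m / scale_m)


def implied_rows(file_bytes, width_px, bytes_per_pixel):
    """Rows that fit in `file_bytes` for uncompressed, single-band pixels."""
    return int(file_bytes // (width_px * bytes_per_pixel))


width_px = swath_width_pixels()
# bytes_per_pixel=1 is a hypothetical storage format used only for scale.
rows = implied_rows(MAX_FILE_BYTES, width_px, bytes_per_pixel=1)
print(width_px, rows)
```

Whatever the real storage format, images this wide are far larger than a LeNet-style input, which is why they have to be cut into small windows before classification.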

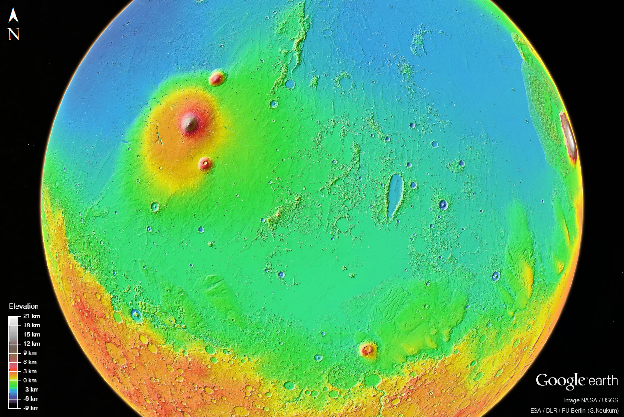

Additionally, we are using the trained Convolutional Neural Networks (CNNs) to examine thousands of HiRISE images within Elysium Planitia. This is a volcanic region that includes some of the youngest lava flows on Mars, covering a total of millions of square kilometers (Figure 1). Performing a manual search for every rootless cone in this region would be prohibitively time-consuming. Fortunately, automated approaches will enable us to map these landforms over a vast area and use the results of this systematic survey to infer the regional distribution of former ground-ice deposits in Elysium Planitia for the first time.

What has been the biggest challenge in this project?

This project has many challenges, from the algorithm implementation to the analysis of the results. I think the biggest challenge is having readily available databases to use and train over for the different features on the surface of Mars.

While there are some databases, not all of them are very consistent, unlike in the computer vision community, which has the MNIST and CIFAR standards.

This is both good and bad. It is good in the sense that it allows you to tackle a real-world problem with state-of-the-art tools, but since databases are not consistent, there is a lot of skepticism in the community about whether the approach will work with all the features on the surface. However, in planetary science, the situation is different because the data collected from instruments, like HiRISE, is made freely available to the public in a standardized form through the NASA Planetary Data System (PDS).

What is your GPU Computing experience and how have GPUs impacted your research?

I’ve used CUDA, but not intensively before now because most of my previous research has focused on processing kinds of data other than images. I had a couple of classes and projects where I used CUDA before, but this has been the first time that it really became critical to optimize the efficiency of the image analysis approach to investigate a problem on such a large scale.

In this short time, having access to a powerful GPU has greatly reduced the amount of time that I need to process each of the images that I need to analyze. These images are from the HiRISE database, which consists of 35,000 grayscale and color images with a total database size over 25 TB, and I need to apply different types of CNNs to classify them correctly and to choose the most suitable architecture for our particular problem.

Without using GPUs, it would take days to finish processing a single image, while recent results have shown that an hour or two is enough to process a single image using a GPU.

You have a diverse research background. How have you taken advantage of it?

Having access to different research groups and topics has allowed me to take the experience I have gained with Machine Learning in one area and apply it in a different way to a different area. For example, my work on Bayesian networks applied to gene networks has huge applicability to my previous work at UCLA on EEG data from Brain–Machine Interfaces.

And surprisingly enough, my work on time series analysis can also find a use in planetary sciences by using things like Hidden Markov Models to model various terrain profiles.

How has machine learning changed over the last few years?

Machine learning has gone from a niche research-oriented area in the 1990s to a boom in the industry in the past decade. Part of it has been the explosion of readily available data that we have due to the information revolution of the last several years.

Many companies are investing a large amount of resources in their own data science divisions; Facebook, for example, created its own machine learning laboratory a year ago. This has gotten people more excited about using machine learning tools, since it increases their value in the job market.

This is not without its downside, since many people will apply Machine Learning software without knowing the nuts and bolts of the process, which could result in disappointment for companies in the future when they realize their classifiers and tools are not tuned to their particular datasets. I’ve seen my fair share of implementations where there was no preanalysis of the data whatsoever, and the developers just used the same tuning parameters as the textbook example.

I think that in the next few years more sophisticated Machine Learning tools will become available, and most of the work will be oriented toward large datasets. More effort has to be put into teaching people how the algorithms work rather than just how to use them.

In terms of technology advances, what are you looking forward to in the next five years?

I think a more pervasive use of unmanned aerial vehicles (UAVs) would be amazing. Having them readily available running algorithms like CNNs to do feature recognition would allow us to have real-time information about natural disasters, riots and local events.

I can imagine how having a UAV in a large setting like the Coachella Valley Music and Arts Festival or a football stadium would allow the organizers to better control the flow of people in real time to prevent accidents.

Having a UAV using a CNN to track wildfires would allow us to have information on how they spread, and in some cases, how to stop or prevent them.

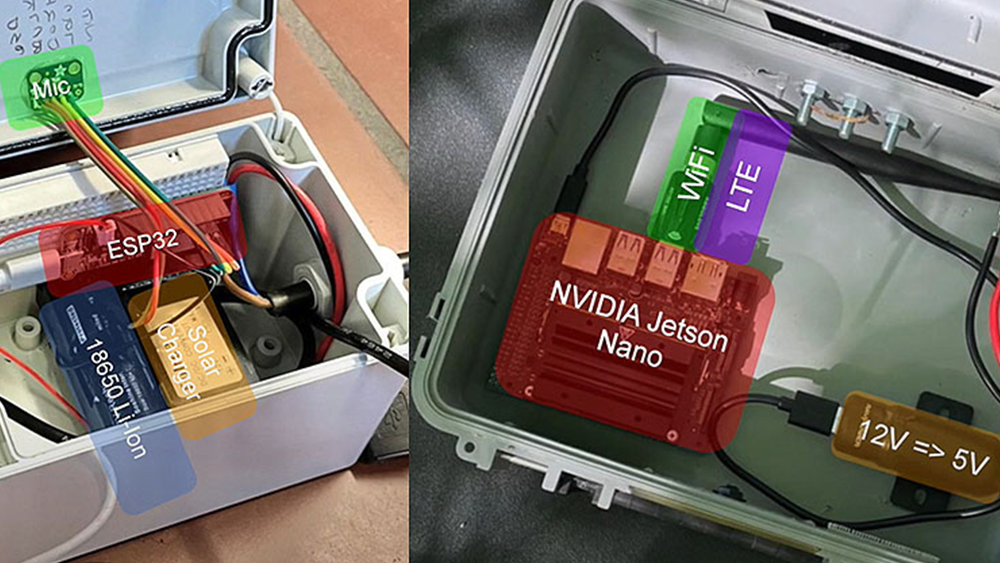

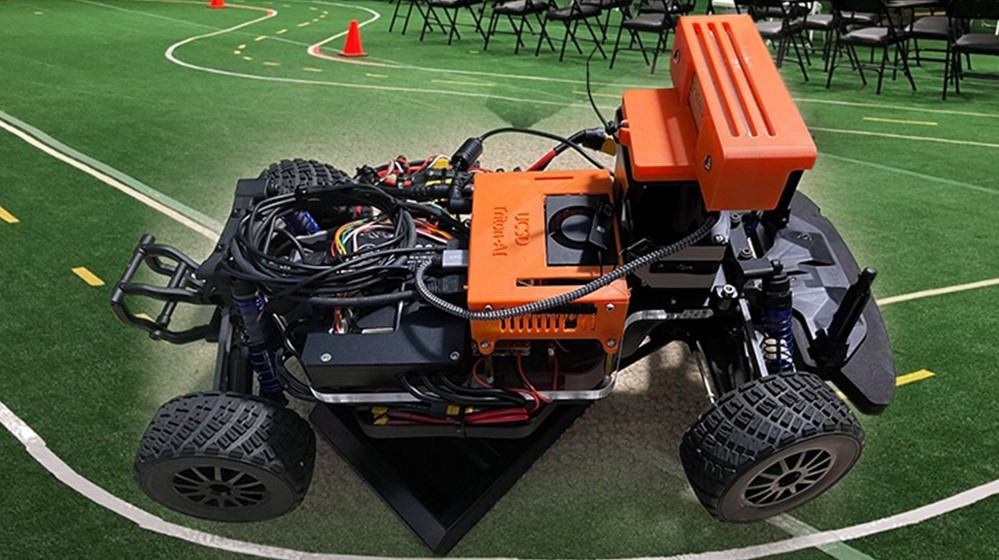

While the privacy implications are still a concern, I think there is much to gain from this technology, and mounting NVIDIA cards in them would be even better since we could do real-time image processing without the need to transmit video over Wi-Fi.

Do you have a success story like Leon’s that involves GPUs? If so, comment below and tell us about your research – we love reading and sharing our developers’ stories!

More About cuDNN and Deep Learning on GPUs

To learn more about deep learning on GPUs, visit the NVIDIA Deep Learning developer portal. Check out the related posts below, especially the intro to cuDNN: Accelerate Machine Learning with the cuDNN Deep Neural Network Library. Be sure to check out the cuDNN Webinar Recording: GPU-Accelerated Deep Learning with cuDNN. If you are interested in embedded applications of deep learning, check out the post Embedded Machine Learning with the cuDNN Deep Neural Network Library and Jetson TK1.