GPUs have quickly become the go-to platform for accelerating machine learning applications for training and classification. Deep Neural Networks (DNNs) have grown in importance for many applications, from image classification and natural language processing to robotics and UAVs. To help researchers focus on solving core problems, NVIDIA introduced a library of primitives for deep neural networks called cuDNN. The cuDNN library makes it easy to obtain state-of-the-art performance with DNNs, but only for workstations and server-based machine learning applications.

GPUs have quickly become the go-to platform for accelerating machine learning applications for training and classification. Deep Neural Networks (DNNs) have grown in importance for many applications, from image classification and natural language processing to robotics and UAVs. To help researchers focus on solving core problems, NVIDIA introduced a library of primitives for deep neural networks called cuDNN. The cuDNN library makes it easy to obtain state-of-the-art performance with DNNs, but only for workstations and server-based machine learning applications.

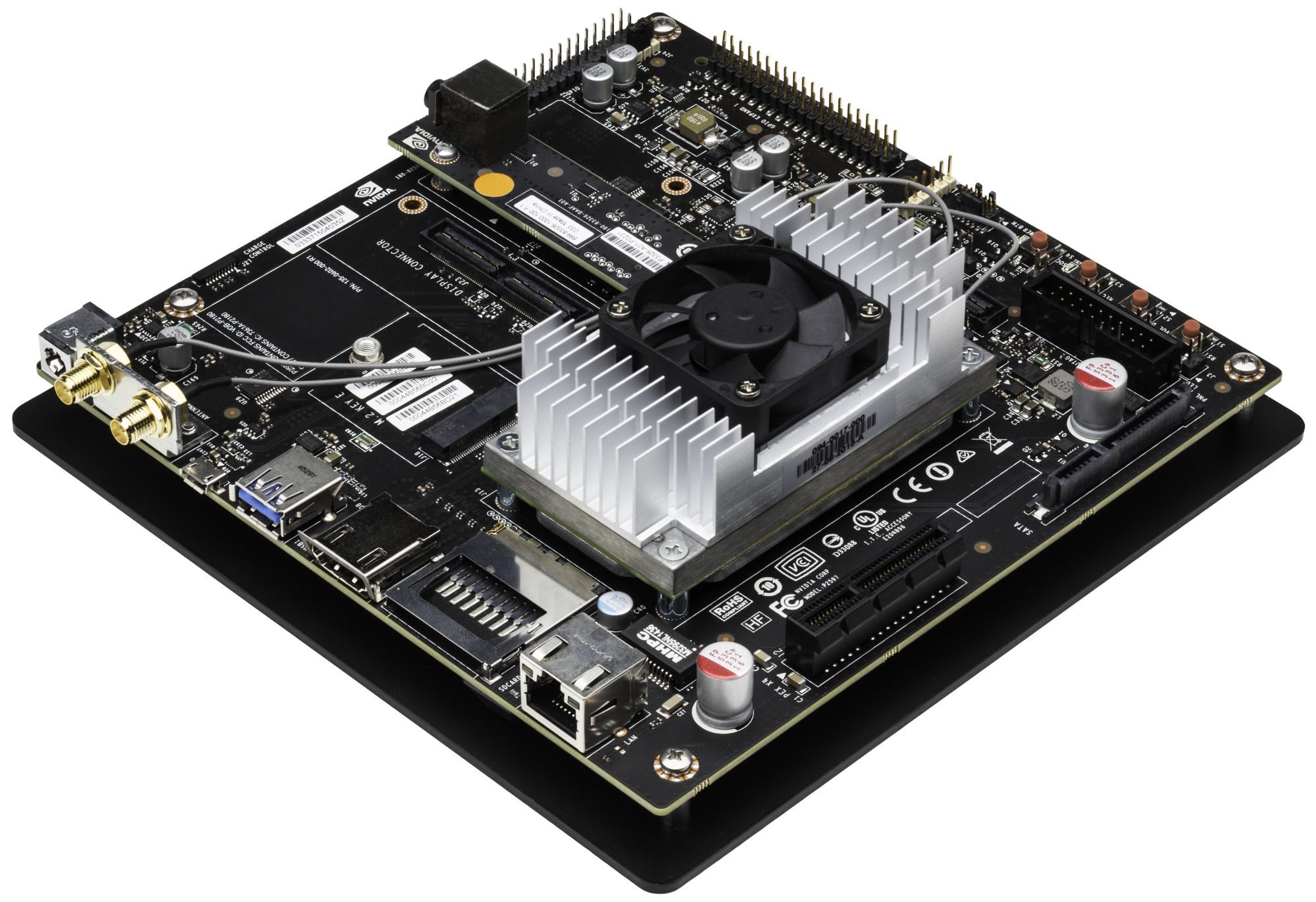

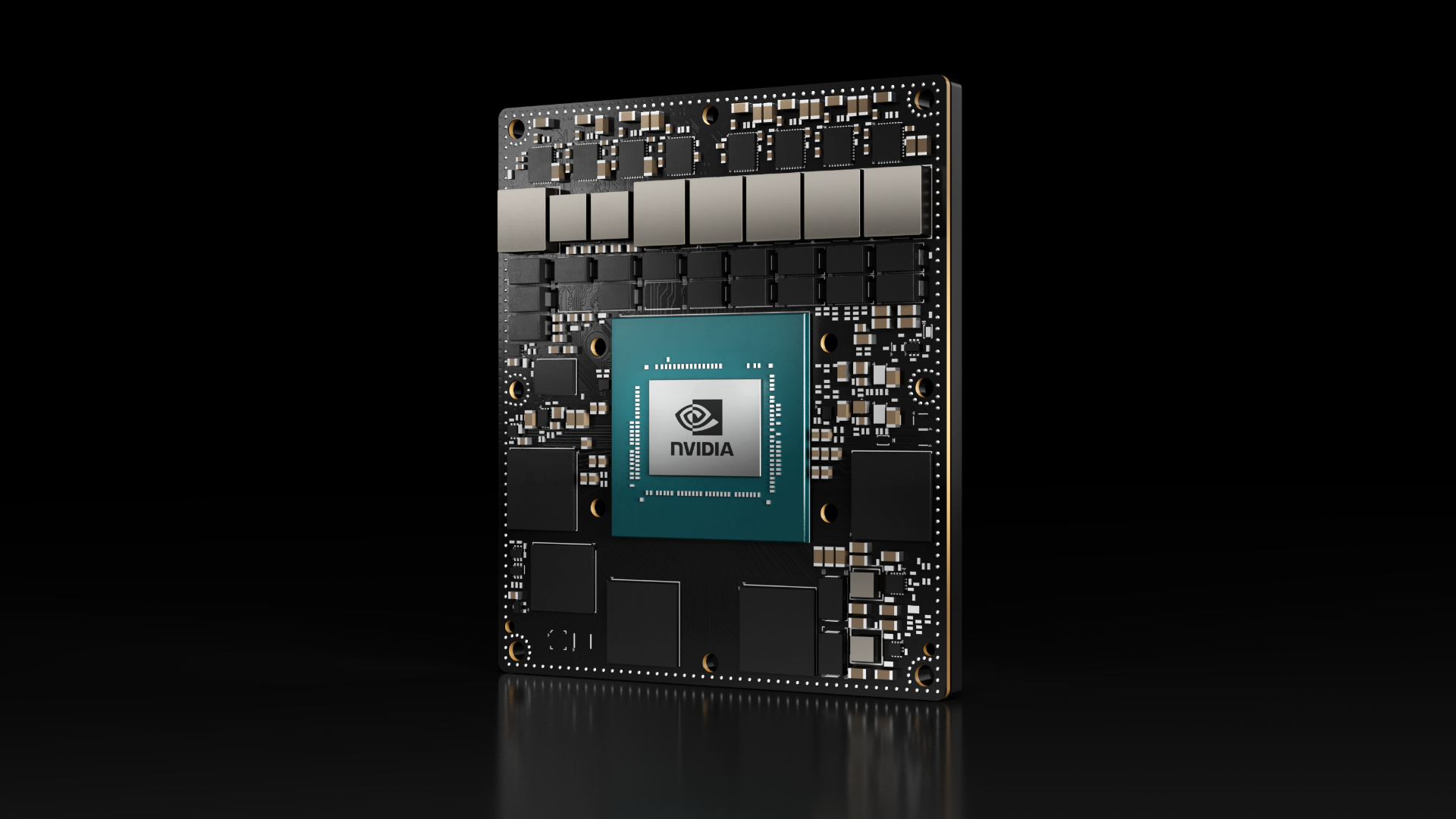

In the meantime, the Jetson TK1 development kit has become a must-have for mobile and embedded parallel computing due to the amazing level of performance packed into such a low-power board. Demand for embedded machine learning has been incredible, so to address this demand, we’ve released cuDNN for Arm (Linux for Tegra—L4T).

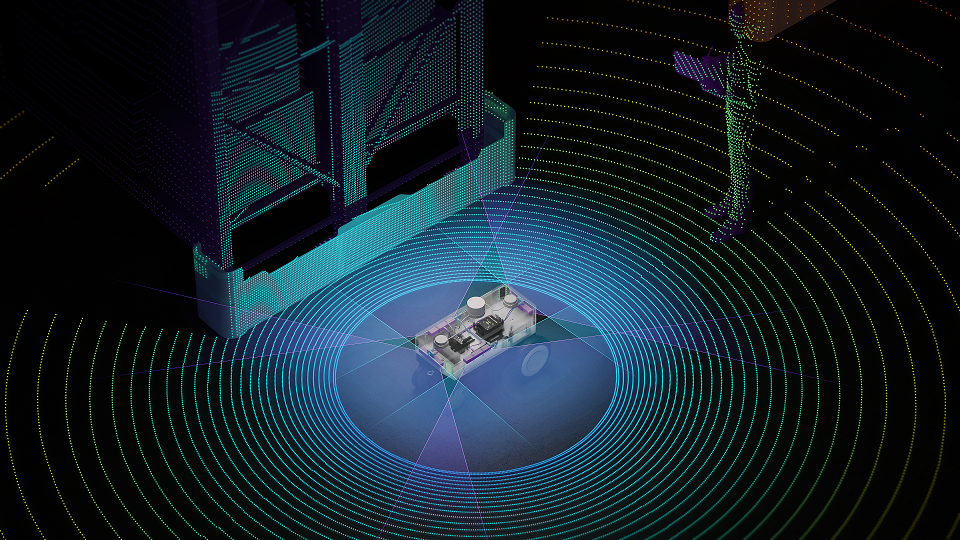

The combination of these two powerful tools enables industry standard machine learning frameworks, such as Berkeley’s Caffe or NYU’s Torch7, to run on a mobile device with excellent performance. Numerous machine learning applications will benefit from this platform, enabling advances in robotics, autonomous vehicles and embedded computer vision.

The combination of these two powerful tools enables industry standard machine learning frameworks, such as Berkeley’s Caffe or NYU’s Torch7, to run on a mobile device with excellent performance. Numerous machine learning applications will benefit from this platform, enabling advances in robotics, autonomous vehicles and embedded computer vision.

This brings the power and flexibility of the complete CUDA platform into a world that previously was stuck using DSP-type tools or other embedded development environments. Neural net researchers can now take the exact same code and infrastructure from their workstation and deploy to mobile inside a 10W power envelope!

Google’s Pete Warden wrote an excellent post detailing how to get Caffe running on the Jetson TK1. Here’s a small except from the post:

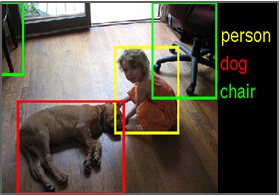

Caffe comes with a pre-built ‘Alexnet’ model, a version of the Imagenet-winning architecture that recognizes 1,000 different kinds of objects. Using this as a benchmark, the Jetson can analyze an image in just 34ms! Based on this table I’m estimating it’s drawing somewhere around 10 or 11 watts, so it’s power-intensive for a mobile device but not too crazy.

The benchmark Warden reported is based on running Caffe’s built-in CUDA implementation. While Caffe for x86 already supports cuDNN, Yangqing Jia, the original creator of Caffe, is working hard to add the Arm version of cuDNN to embedded-Caffe. We can’t wait to see the results of running Caffe with cuDNN on Jetson TK1’s 192 CUDA cores!

Try out cuDNN for Arm (L4T) today! To learn more about the cuDNN GPU-accelerated machine learning library, read Larry Brown’s post about the library. To learn more about Caffe, read Caffe developer Evan Shelhamer’s post. To learn more about Jetson TK1, check out these great posts.