We spoke with Ignacio Llamas, Director of Real Time Ray Tracing Software at NVIDIA, about the introduction of real-time ray tracing in consumer GPUs.

How did you get started on ray tracing at NVIDIA?

I joined NVIDIA about four years ago, on the GPU architecture team.

If somebody had told you that you’d be shipping consumer GPUs in 2018 capable of real-time ray tracing in games, what would you have said?

About 5 years ago that question actually came up, and my thought at the time was: no way. There is no way NVIDIA is going to do that so quickly. I didn’t think it was remotely on the radar for another decade. We knew it was important to work towards, though, because we believed that ray tracing would enable a new wave of graphical innovation. And we knew it was going to be hard.

Building a new technology without a clear sense of when it will be ready for developers must have been a bit scary.

It was scary. It was a leap of faith. We didn’t know if developers would adopt it. And we had to all agree that the R&D costs and the features added to the chip would be worth it. We could have chosen to accelerate something else. But we believed that focusing on ray tracing and deep learning was critically important. We made ray tracing run an order of magnitude faster than we could on prior GPUs. We got roughly a 3X – 4X speed up. We could have sped up something else a little bit… or delivered a 3X – 4X speed up on the frame time of something really new and compelling.

What were the secrets to making ray tracing work on consumer-grade GPUs? Was it just optimized hardware? Did software play a large role?

Real-time ray tracing does require a combination of hardware and software. You can’t just put out a piece of hardware and hope that developers are going to use it. It requires a lot of software investment… And then working closely with developers to understand what they need.

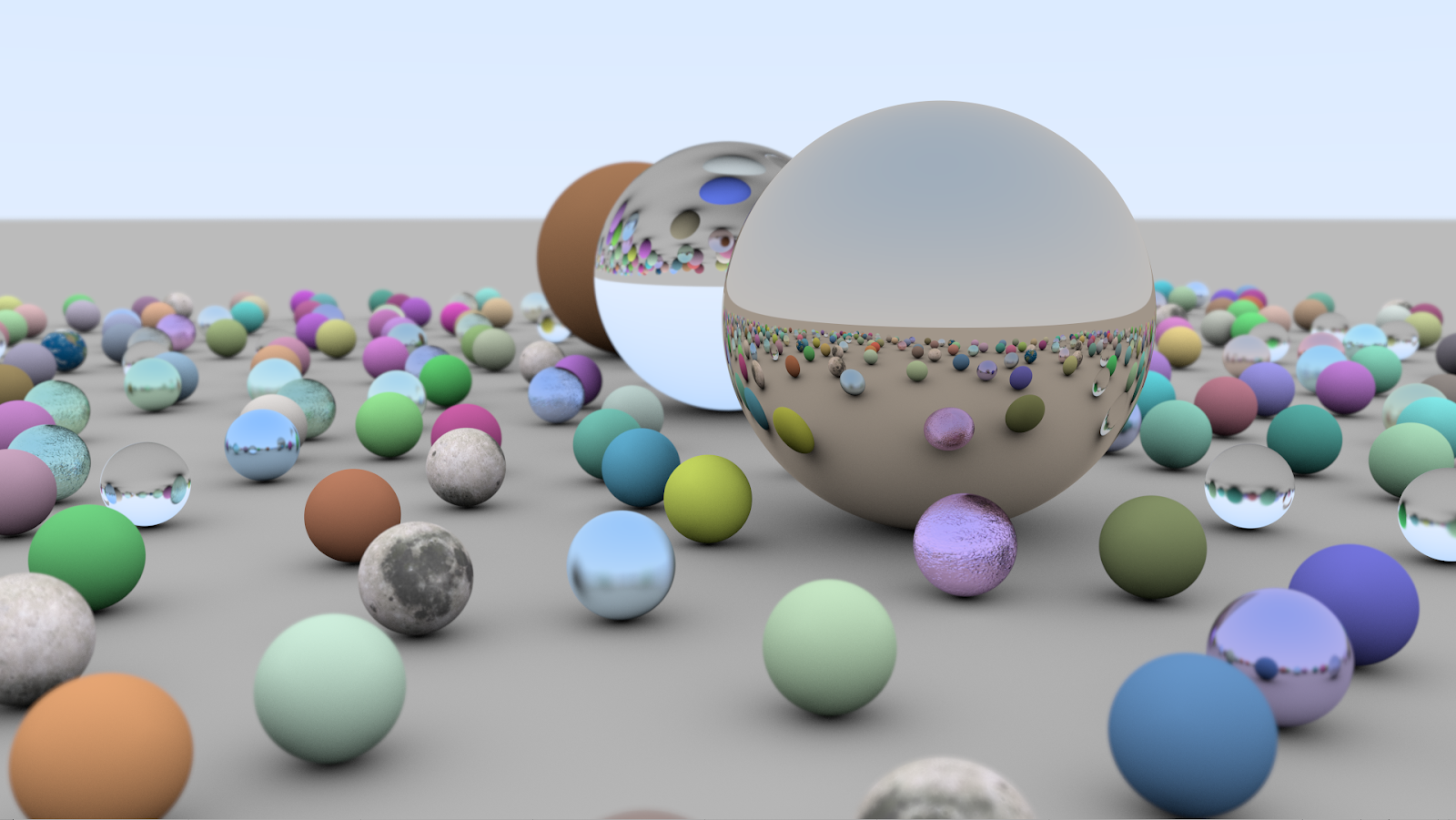

The hardware and software we delivered this year in 2018 was a pipe dream 10 years ago. And we are happy that we could get there sooner rather than later. We had a “moonshot project” – that’s what it was called at the time. We said, “Let’s accelerate ray tracing by orders of magnitude. And let’s give developers denoising tools”. So, it was a confluence of multiple research teams to get us to where we are today.

Since you worked directly with big development teams as this technology came together, what responses did you get when you asked a roomful of developers, “Guess what, guys? Real-time ray tracing is coming in 2018!” What did they say? Did they even believe you?

A number of developers initially had a cool reception. Like, “Ok, really?” Some of them were thinking it was coming, but not so quickly. We saw a lot of skepticism, because if you haven’t seen it running, it’s easy to be doubtful. That’s a healthy dose of skepticism. And over the last year, some developers who initially were skeptical converted to the believer side. And they realize that this is the beginning of something. And then they change completely from, “Um…”, to “WOW! We are seeing the rebirth of real-time graphics”.

How have artists responded to real-time ray tracing?

I’ve been interacting with quite a few artists internally and externally. The artist just expects things to work. The ones who have been working in the film industry have really high expectations. And they’re thrilled that they no longer need to compromise their visions. The ones who have been in the game industry for a long time are happy to see that they no longer need to worry about cheats, like faking reflective surfaces or shadows. We’ve given them the tools to enable these visual elements in real time. Over time, we’ll see art direction targeting ray tracing from the beginning, to the point that everybody will take it for granted. Right now, we are in a transition period. You’re still seeing a lot of lighting hacks. But over time, those hacks will not be necessary.

What development tips could you provide for teams new to the technology?

At a high level, you continue doing a lot of what you’ve been doing, but you replace some of those hacks with more accurate techniques based on real-time ray tracing. For example, many developers are already doing ray tracing with reflections in screen space, which means they can only hit a reflection of something that’s already on the screen. You can take a pass at that algorithm, and replace it completely with another that does fully ray-traced reflections. Conceptually, that’s a really small change in the rendering flow.

As we’ve seen, EA has been able to do that in record time for Battlefield V. We didn’t expect that to be the first game to be able to do that, because the timeline was really short. So I’m really thrilled to see what that team has done. Those guys are amazing.

What other game experience has stood out to you that uses ray tracing?

I’d love to see the final version of Assetto Corsa Competizione. It’s going to look really, really great. There are so many angles with reflections, and the moment we can add the ray-traced reflections to it, it’s going to make a huge difference.

I wanted to ask you about denoisers, because that’s a really important part of making ray tracing work. Can you explain how denoisers work?

Sure. Rendering a frame takes a number of passes, or steps. Some of them are applied at the end, when you’ve already generated a basic image, and then there is post processing. Denoising is basically a post-processing effect that is applied to an image. Examples might be an image of a reflection, or an image of the ambient occlusion data, or an image of shadow visibility information. Like other post effects (such as motion blur or depth of field), they take that information and compute from it a different image that eliminates the noise by averaging in some way. It’s like computing weighted sums of the values in those images. That is a very high level idea of how denoisers work.
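The "weighted sums" idea above can be illustrated with a very small sketch. This is not NVIDIA's denoiser, and production denoisers are far more sophisticated; it is only a fixed 3×3 weighted-average filter applied to a noisy grayscale image stored as nested lists. The names `denoise`, `mean_abs_error`, and `KERNEL`, the kernel weights, and the synthetic test image are all assumptions made for illustration.

```python
import random

# Hypothetical 3x3 kernel: the center pixel counts most, corners least.
KERNEL = [
    [1, 2, 1],
    [2, 4, 2],
    [1, 2, 1],
]

def denoise(image, weights):
    """Replace each pixel with a normalized weighted sum of its neighbors."""
    height, width = len(image), len(image[0])
    radius = len(weights) // 2
    result = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0.0
            weight_sum = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    # Skip neighbors that fall outside the image.
                    if 0 <= ny < height and 0 <= nx < width:
                        w = weights[dy + radius][dx + radius]
                        total += w * image[ny][nx]
                        weight_sum += w
            # Normalize so overall brightness is preserved.
            result[y][x] = total / weight_sum
    return result

def mean_abs_error(image, reference):
    """Average absolute per-pixel difference between two images."""
    diffs = [
        abs(a - b)
        for row_a, row_b in zip(image, reference)
        for a, b in zip(row_a, row_b)
    ]
    return sum(diffs) / len(diffs)

# Synthetic example: a flat gray image with random per-pixel noise added,
# standing in for a noisy shadow or ambient occlusion buffer.
rng = random.Random(0)
clean = [[0.5] * 16 for _ in range(16)]
noisy = [[0.5 + rng.uniform(-0.3, 0.3) for _ in range(16)] for _ in range(16)]
smoothed = denoise(noisy, KERNEL)

print("error before:", mean_abs_error(noisy, clean))
print("error after: ", mean_abs_error(smoothed, clean))
```

On this flat test image, the error after filtering is lower than before, because averaging neighboring pixels cancels out part of the random noise. A fixed kernel like this also blurs real detail, which is one reason a simple average is only a starting point.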

Can you talk to us a little bit more about DLSS and how it relates to real-time ray tracing?

DLSS is a technology that we also announced at the Turing launch. It allows us to take an image that we generate with some number of pixels, and through artificial intelligence techniques, we run that image through a deep learning network, and generate a new image that is higher resolution, and very similar in quality to what you would achieve if you were to render in that resolution. When we combine that with ray tracing, which is more computationally intensive than traditional techniques, it allows us to run 50% to 100% faster. That’s important because it allows us to do more with ray tracing, and to create more interesting visuals without losing the resolution or quality that you would normally achieve without ray tracing. By means of the tensor cores, which accelerate deep learning, we spend only a bit of time on those cores in order to save a lot of time overall, and speed up rendering and get the best of both. You get a great-resolution image, and you get all the visual richness that ray tracing provides.
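To put "50% to 100% faster" in frame-time terms, here is a small arithmetic sketch. It assumes "faster" refers to frame rate, and the 20 ms baseline frame time is a made-up number chosen only for illustration; neither comes from the interview. The function name `frame_time_after_speedup` is likewise hypothetical.

```python
def frame_time_after_speedup(base_ms, speedup_percent):
    """Frame time once the frame rate rises by the given percentage."""
    return base_ms / (1.0 + speedup_percent / 100.0)

# Hypothetical baseline: 20 ms per frame (50 frames per second).
BASE_MS = 20.0

for percent in (50, 100):
    new_ms = frame_time_after_speedup(BASE_MS, percent)
    print(f"{percent}% faster: {new_ms:.2f} ms per frame, {1000.0 / new_ms:.0f} fps")
```

Under these assumptions, a 100% speedup halves the frame time, while a 50% speedup cuts it by a third.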

How can you see an independent developer taking advantage of ray tracing?

I think you can look at an example like Claybook, which was already doing ray tracing before we even launched Turing. Second Order Games did it in a different setting, with a different kind of representation, which worked well for the type of content that they wanted to have. So, that’s an indie game developed by a relatively small team with ray tracing. Indies might be willing to take steps a bigger company might not, and create new games fully focused on the ray tracing technique. They could focus on a specific setting that shows off the technology, like a mirror maze. I was just at a mirror maze this weekend, and it was really cool! Nobody was able to do that in a game before, but now you can.

How did it feel when this technology – which you’d been working on in secret for nearly five years – was finally being announced onstage to a room full of ecstatic developers at GDC 2018?

You’re gonna make me cry! It was really emotional. Leading up to that day, we were hard at work getting the demos ready for launch. You don’t have time to think about it. But when you see your work on stage, and you realize what you’ve done, and you recognize this is the beginning of something totally new… it’s indescribable. And we were there, and we made it happen. So, for me, it’s the biggest thing I could ever hope to contribute to in this field. Everything happened as well as we’d hoped it would. I’m super proud of the entire team.