Arm

Jan 20, 2023

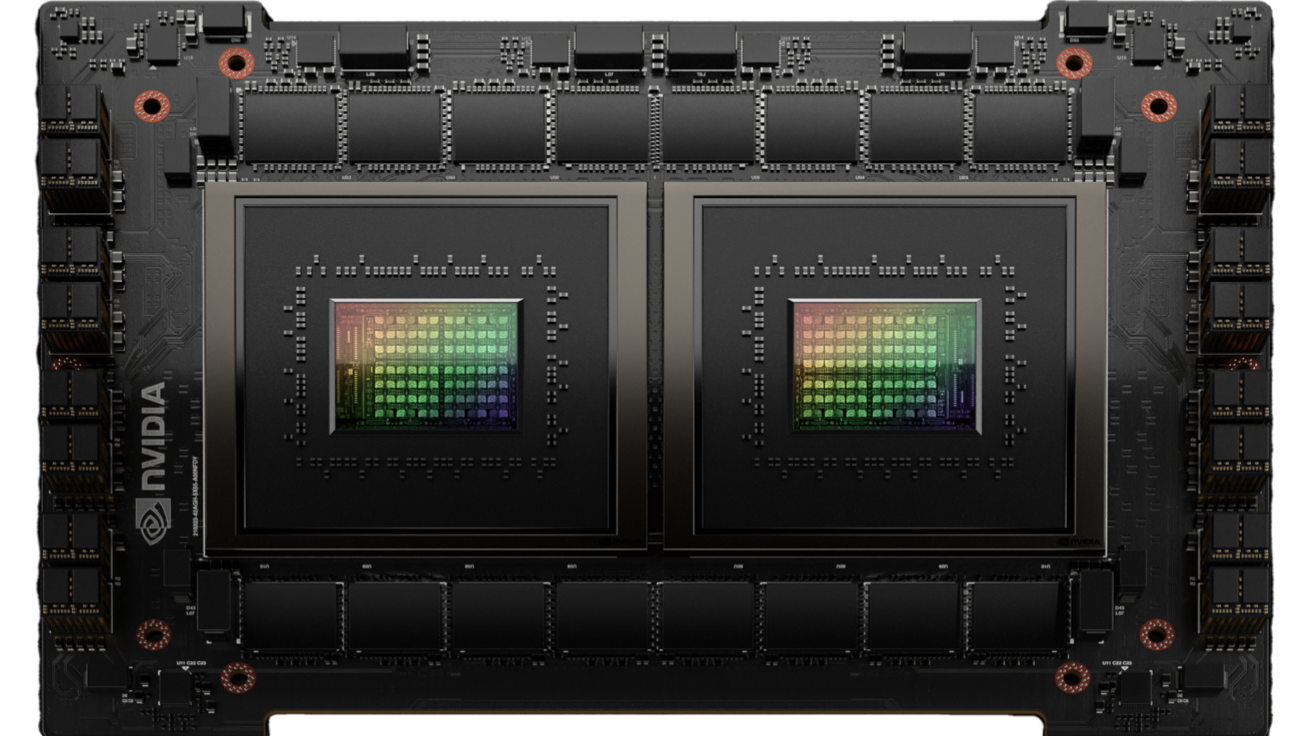

NVIDIA Grace CPU Superchip Architecture In Depth

The NVIDIA Grace CPU is the first data center CPU developed by NVIDIA. Combining NVIDIA expertise with Arm processors, on-chip fabrics, system-on-chip (SoC)...

9 MIN READ

Nov 16, 2022

Evaluating Applications Using the NVIDIA Arm HPC Developer Kit

The NVIDIA Arm HPC Developer Kit is an integrated hardware and software platform for creating, evaluating, and benchmarking HPC, AI, and scientific computing...

8 MIN READ

Oct 12, 2022

Just Released: HPC SDK v22.9

This version 22.9 update to the NVIDIA HPC SDK includes fixes and minor enhancements.

1 MIN READ

Sep 28, 2022

Powering NVIDIA-Certified Enterprise Systems with Arm CPUs

Organizations are rapidly becoming more advanced in the use of AI, and many are looking to leverage the latest technologies to maximize workload performance and...

4 MIN READ

Sep 14, 2022

NVIDIA, Arm, and Intel Publish FP8 Specification for Standardization as an Interchange Format for AI

AI processing requires full-stack innovation across hardware and software platforms to address the growing computational demands of neural networks. A key area...

4 MIN READ

Jul 27, 2022

Just Released: New Arm CPU Support and Advancements in HPC SDK 22.7

This release includes enhancements, fixes, and new support for Arm SVE, Rocky Linux OS, and Amazon EC2 C7g instances, powered by the latest generation AWS...

1 MIN READ

Nov 29, 2021

AWS Launches First NVIDIA GPU-Accelerated Graviton-Based Instance with Amazon EC2 G5g

Today at AWS re:Invent 2021, AWS announced the general availability of Amazon EC2 G5g instances—bringing the first NVIDIA GPU-accelerated Arm-based instance...

3 MIN READ

Nov 15, 2021

Develop the Next Generation of HPC Applications with the NVIDIA Arm HPC Developer Kit

In July of 2021, NVIDIA announced the availability of the NVIDIA Arm HPC Developer Kit for preordering, along with the NVIDIA HPC SDK. Since then, NVIDIA and its...

3 MIN READ

Sep 22, 2021

Furthering NVIDIA Performance Leadership with MLPerf Inference 1.1 Results

AI continues to drive breakthrough innovation across industries, including consumer Internet, healthcare and life sciences, financial services, retail,...

6 MIN READ

Jul 22, 2021

NVIDIA Announces Availability for Arm HPC Developer Kit with New HPC SDK v21.7

Today NVIDIA announced the availability of the NVIDIA Arm HPC Developer Kit with the NVIDIA HPC SDK version 21.7. The DevKit is an integrated...

2 MIN READ

Jun 04, 2021

HPC - Top Resources from GTC 21

Get the latest resources and news about the NVIDIA technologies that are accelerating the latest innovations in HPC from industry leaders and developers....

2 MIN READ

Jun 25, 2020

What’s New to NGC: HPC Containers for A100 and Arm Systems

Researchers are harnessing the power of NVIDIA GPUs more than ever before to find a cure for COVID-19. Leveraging popular molecular dynamics and quantum...

2 MIN READ

Dec 12, 2018

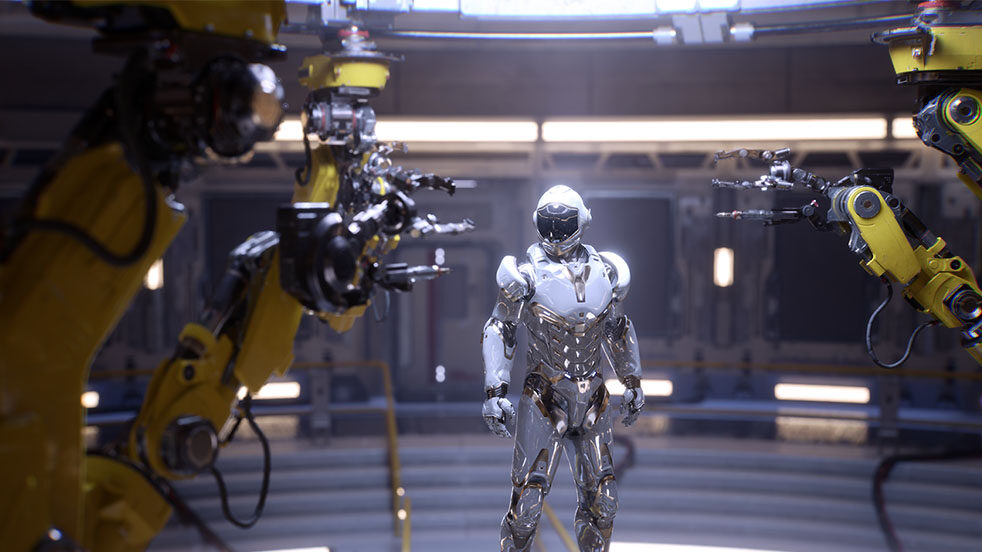

NVIDIA Jetson AGX Xavier Delivers 32 TeraOps for New Era of AI in Robotics

The world’s ultimate embedded solution for AI developers, Jetson AGX Xavier, is now shipping as standalone production modules from NVIDIA. A member of...

22 MIN READ

Mar 19, 2017

CUDA Development for Jetson with NVIDIA Nsight Eclipse Edition

NVIDIA Nsight Eclipse Edition is a...

14 MIN READ

Mar 07, 2017

NVIDIA Jetson TX2 Delivers Twice the Intelligence to the Edge

18 MIN READ

Nov 13, 2014

Embedded Machine Learning with the cuDNN Deep Neural Network Library and Jetson TK1

GPUs have quickly become the go-to platform for accelerating machine learning applications for training and classification. Deep Neural Networks (DNNs) have...

3 MIN READ