cuBLAS

Feb 01, 2024

Just Released: NVIDIA HPC SDK v24.1

This NVIDIA HPC SDK update includes the cuBLASMp preview library, along with minor bug fixes and enhancements.

1 MIN READ

Jan 12, 2024

Just Released: cuBLASDx

cuBLASDx allows you to perform BLAS calculations inside your CUDA kernel, improving the performance of your application. Available to download in Preview...

1 MIN READ

Dec 20, 2023

Just Released: cuBLASMp

cuBLASMp is a high-performance, multi-process, GPU-accelerated library for distributed basic dense linear algebra. It is available to download in Preview now.

1 MIN READ

Sep 28, 2023

NVIDIA H100 System for HPC and Generative AI Sets Record for Financial Risk Calculations

Generative AI is taking the world by storm, from large language models (LLMs) to generative pretrained transformer (GPT) models to diffusion models. NVIDIA is...

7 MIN READ

Feb 01, 2023

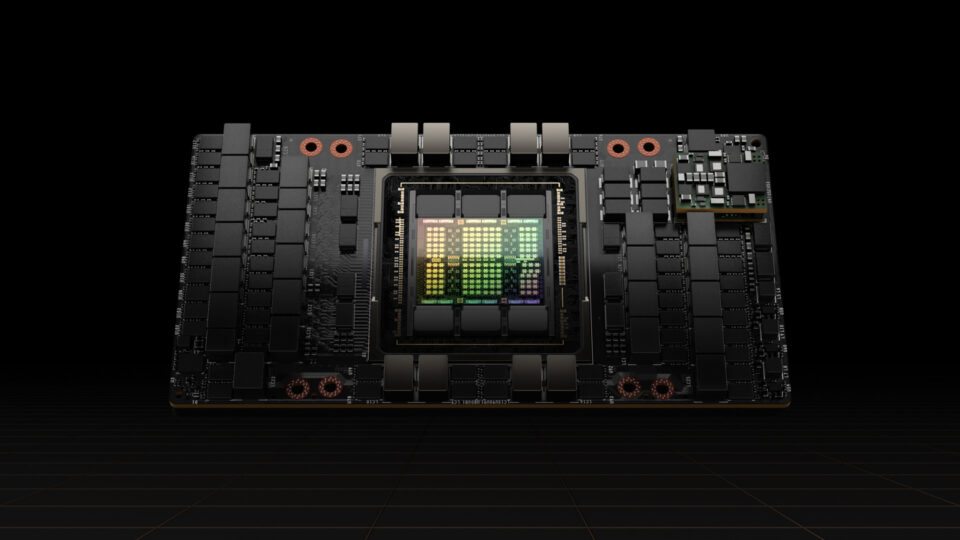

New cuBLAS 12.0 Features and Matrix Multiplication Performance on NVIDIA Hopper GPUs

The NVIDIA H100 Tensor Core GPU, based on the NVIDIA Hopper architecture with the fourth generation of NVIDIA Tensor Cores, recently debuted delivering...

10 MIN READ

Dec 12, 2022

CUDA Toolkit 12.0 Released for General Availability

NVIDIA announces the newest CUDA Toolkit software release, 12.0. This release is the first major release in many years and it focuses on new programming models...

12 MIN READ

Aug 03, 2022

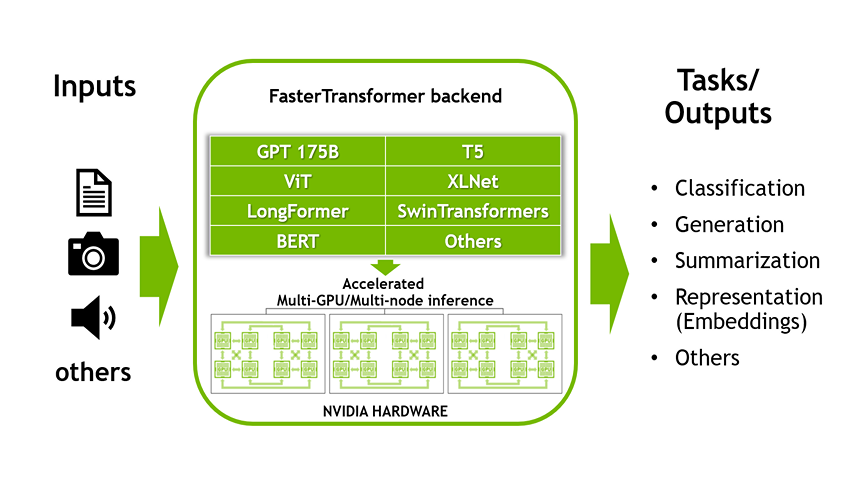

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server

This is the first part of a two-part series discussing the NVIDIA Triton Inference Server’s FasterTransformer (FT) library, one of the fastest libraries for...

10 MIN READ

Jul 26, 2022

Accelerating GPU Applications with NVIDIA Math Libraries

There are three main ways to accelerate GPU applications: compiler directives, programming languages, and preprogrammed libraries. Compiler directives such as...

12 MIN READ

Dec 05, 2017

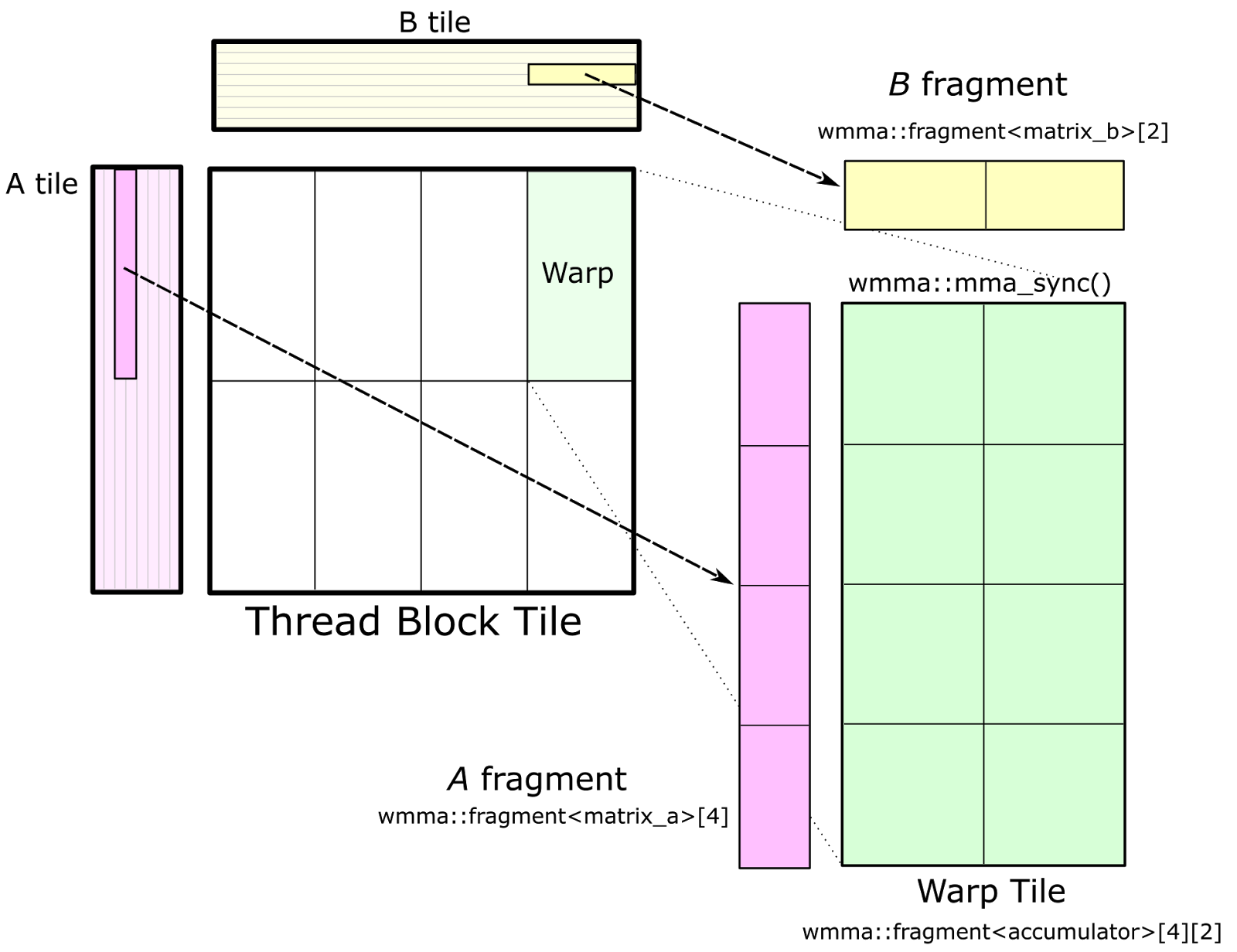

CUTLASS: Fast Linear Algebra in CUDA C++

Update May 21, 2018: CUTLASS 1.0 is now available as Open Source software at the CUTLASS repository. CUTLASS 1.0 has changed substantially from our preview...

25 MIN READ

May 11, 2017

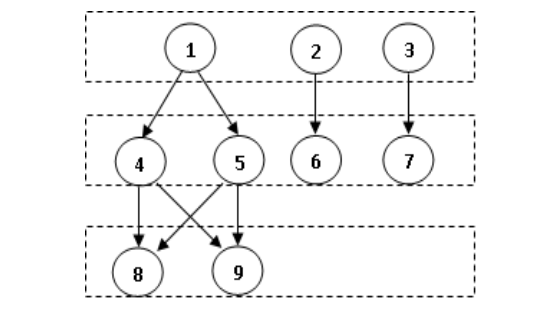

CUDA 9 Features Revealed: Volta, Cooperative Groups and More

Figure 1: CUDA 9 provides a preview API for programming Tesla V100 Tensor Cores, providing a huge...

17 MIN READ

Feb 27, 2017

Pro Tip: cuBLAS Strided Batched Matrix Multiply

There’s a new computational workhorse in town. For decades, general matrix-matrix multiply—known as GEMM in Basic Linear Algebra Subroutines (BLAS)...

10 MIN READ

Feb 25, 2015

Deep Speech: Accurate Speech Recognition with GPU-Accelerated Deep Learning

Speech recognition is an established technology, but it tends to fail when we need it the most, such as in noisy or crowded environments, or when the speaker is...

9 MIN READ

Jun 05, 2014

Drop-in Acceleration of GNU Octave

cuBLAS is an implementation of the BLAS library that leverages the teraflops of performance provided by NVIDIA GPUs. However, cuBLAS cannot be used as a...

7 MIN READ

Mar 05, 2014

CUDA Pro Tip: How to Call Batched cuBLAS routines from CUDA Fortran

CUDA Fortran for Scientists and Engineers shows how high-performance application developers can...

7 MIN READ

Jul 02, 2012

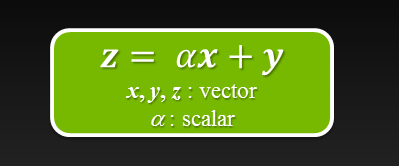

Six Ways to SAXPY

For even more ways to SAXPY using the latest NVIDIA HPC SDK with standard language parallelism, see N Ways to SAXPY: Demonstrating the Breadth of GPU...

8 MIN READ

Jun 22, 2011

Accelerated Solution of Sparse Linear Systems

Fresh from the NVIDIA Numeric Libraries Team, a white paper illustrating the use of the CUSPARSE and CUBLAS libraries to achieve a 2x speedup of incomplete-LU-...

1 MIN READ