Profilers / Debuggers / Code Analysis

Mar 27, 2024

Efficient CUDA Debugging: Using NVIDIA Compute Sanitizer with NVIDIA Tools Extension and Creating Custom Tools

NVIDIA Compute Sanitizer is a powerful tool that can save you time and effort while improving the reliability and performance of your CUDA applications....

14 MIN READ

Mar 14, 2024

Powerful Shader Insights: Using Shader Debug Info with NVIDIA Nsight Graphics

As ray tracing becomes the predominant rendering technique in modern game engines, a single GPU RayGen shader can now perform most of the light simulation of a...

7 MIN READ

Oct 24, 2023

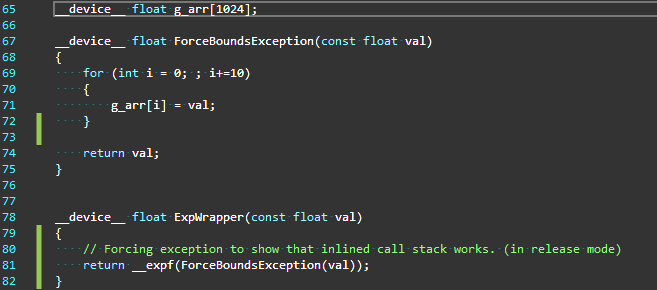

Efficient CUDA Debugging: Memory Initialization and Thread Synchronization with NVIDIA Compute Sanitizer

NVIDIA Compute Sanitizer is a powerful tool that can save you time and effort while improving the reliability and performance of your CUDA applications. In...

13 MIN READ

Aug 09, 2023

Speed Up GPU Crash Debugging with NVIDIA Nsight Aftermath

NVIDIA Nsight Developer Tools provide comprehensive access to NVIDIA GPUs and graphics APIs for performance analysis, optimization, and debugging activities....

5 MIN READ

Jul 06, 2023

CUDA Toolkit 12.2 Unleashes Powerful Features for Boosting Applications

The latest release of CUDA Toolkit 12.2 introduces a range of essential new features, modifications to the programming model, and enhanced support for hardware...

8 MIN READ

Jun 29, 2023

Efficient CUDA Debugging: How to Hunt Bugs with NVIDIA Compute Sanitizer

Debugging code is a crucial aspect of software development but can be both challenging and time-consuming. Parallel programming with thousands of threads can...

14 MIN READ

Jun 28, 2023

Optimizing CUDA Memory Transfers with NVIDIA Nsight Systems

NVIDIA Nsight Systems is a comprehensive tool for tracking application performance across CPU and GPU resources. It helps ensure that hardware is being...

10 MIN READ

May 31, 2022

Improve Guidance and Performance Visualization with the New Nsight Compute

NVIDIA Nsight Compute is an interactive kernel profiler for CUDA applications. It provides detailed performance metrics and API debugging through a user...

3 MIN READ

Mar 24, 2022

Record, Edit, and Rewind in Virtual Reality with NVIDIA VR Capture and Replay

Developers and early access users can now accurately capture and replay VR sessions for performance testing, scene troubleshooting, and more with NVIDIA Virtual...

5 MIN READ

Jan 25, 2022

NVIDIA Nsight Systems 2022.1 Introduces Vulkan 1.3 and Linux Backtrace Sampling and Profiling Improvements

The latest update to NVIDIA Nsight Systems—a performance analysis tool designed to help developers tune and scale software across CPUs and GPUs—is now...

2 MIN READ

Jan 25, 2022

NVIDIA Nsight Graphics 2022.1 Supports Latest Vulkan Ray Tracing Extension

Today, NVIDIA announced the latest Nsight Graphics 2022.1, which supports Direct3D (11, 12, DXR), Vulkan 1.3 ray tracing extension, OpenGL, OpenVR, and the...

3 MIN READ

Feb 12, 2021

Boosting Productivity and Performance with the NVIDIA CUDA 11.2 C++ Compiler

The 11.2 CUDA C++ compiler incorporates features and enhancements aimed at improving developer productivity and the performance of GPU-accelerated applications....

21 MIN READ

Aug 02, 2019

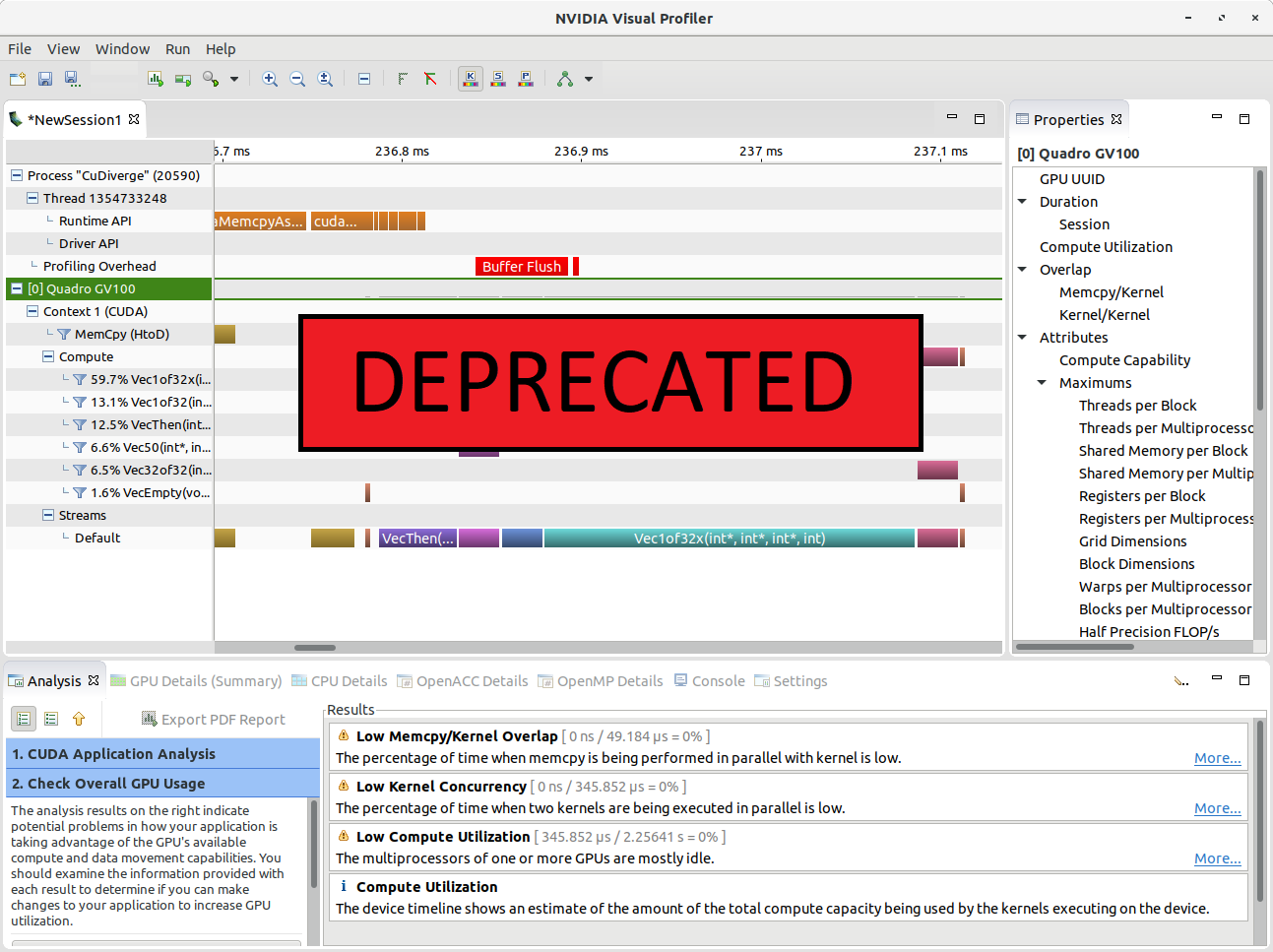

Transitioning to Nsight Systems from NVIDIA Visual Profiler / nvprof

The Nsight suite of profiling tools now supersedes the NVIDIA Visual Profiler (NVVP) and nvprof. Let’s look at what this means for NVIDIA Visual Profiler or...

17 MIN READ

Jul 15, 2019

Migrating to NVIDIA Nsight Tools from NVVP and Nvprof

If you use the NVIDIA Visual Profiler or the nvprof command line tool, it’s time to transition to something newer: NVIDIA Nsight Tools. Don’t worry! The new...

10 MIN READ

Nov 16, 2017

Pro Tip: Pinpointing Runtime Errors in CUDA Fortran

CUDA Fortran for Scientists and Engineers shows how high-performance application developers can...

4 MIN READ

Mar 19, 2017

CUDA Development for Jetson with NVIDIA Nsight Eclipse Edition

NVIDIA Nsight Eclipse Edition is a...

14 MIN READ