Graph Algorithms

Oct 13, 2023

Supercharge Graph Analytics at Scale with GPU-CPU Fusion for 100x Performance

Graphs form the foundation of many modern data and analytics capabilities to find relationships between people, places, things, events, and locations across...

11 MIN READ

Apr 06, 2023

Ask Me Anything: Experts Answer Your NVIDIA cuGraph Questions Live

Join us on April 12 to ask experts about NVIDIA cuGraph, with added support for GNNs, accelerated aggregators, models, and extensions to DGL and PyG.

1 MIN READ

Aug 16, 2022

Running Large-Scale Graph Analytics with Memgraph and NVIDIA cuGraph Algorithms

With the latest Memgraph Advanced Graph Extensions (MAGE) release, you can now run GPU-powered graph analytics from Memgraph in seconds, while working in...

9 MIN READ

Oct 06, 2021

GPU-Accelerated Hierarchical DBSCAN with RAPIDS cuML – Let’s Get Back To The Future

Data scientists across various domains use clustering methods to find naturally ‘similar’ groups of observations in their datasets. Popular clustering...

10 MIN READ

May 12, 2016

Fast Spectral Graph Partitioning on GPUs

Graphs are mathematical structures used to model many types of relationships and processes in physical, biological, social and information systems. They are...

15 MIN READ

Jun 09, 2015

Graph Coloring: More Parallelism for Incomplete-LU Factorization

In this blog post I will briefly discuss the importance and simplicity of graph coloring and its application to one of the most common problems in sparse linear...

12 MIN READ

Mar 10, 2015

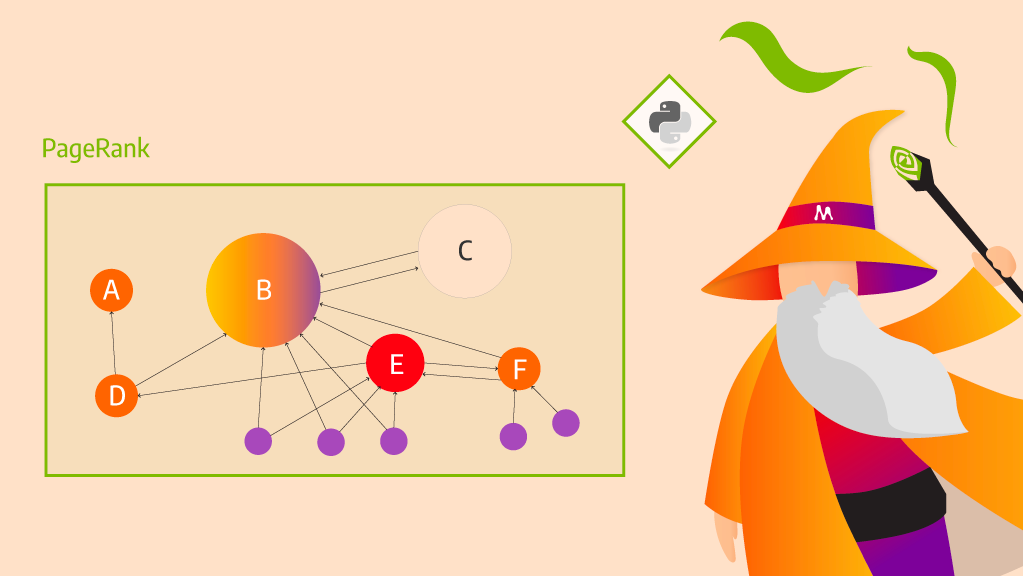

GPU-Accelerated Graph Analytics in Python with Numba

Numba is an open-source just-in-time (JIT) Python compiler that generates native machine code for x86 CPUs and CUDA GPUs from annotated Python code. (Mark Harris...

8 MIN READ

Jul 23, 2014

Accelerating Graph Betweenness Centrality with CUDA

Graph analysis is a fundamental tool for domains as diverse as social networks, computational biology, and machine learning. Real-world applications of graph...

14 MIN READ