Image Processing & Enhancement

Jan 16, 2024

Robust Scene Text Detection and Recognition: Inference Optimization

In this post, we delve deeper into the inference optimization process to improve the performance and efficiency of our machine learning models during the...

9 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Implementation

To make scene text detection and recognition work on irregular text or for specific use cases, you must have full control of your model so that you can do...

6 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Introduction

Identification and recognition of text from natural scenes and images has become important for use cases like video caption text recognition, detecting signboards...

8 MIN READ

Jul 18, 2023

Research Unveils Breakthrough Deep Learning Tool for Understanding Neural Activity and Movement Control

A primary goal in the field of neuroscience is understanding how the brain controls movement. By improving pose estimation, neurobiologists can more precisely...

8 MIN READ

May 15, 2023

How NeRFs Helped Me Re-Imagine the World

Why have images not evolved past two dimensions yet? Why are we satisfied with century-old technology? What if the technology already exists that’s ready to...

10 MIN READ

May 04, 2023

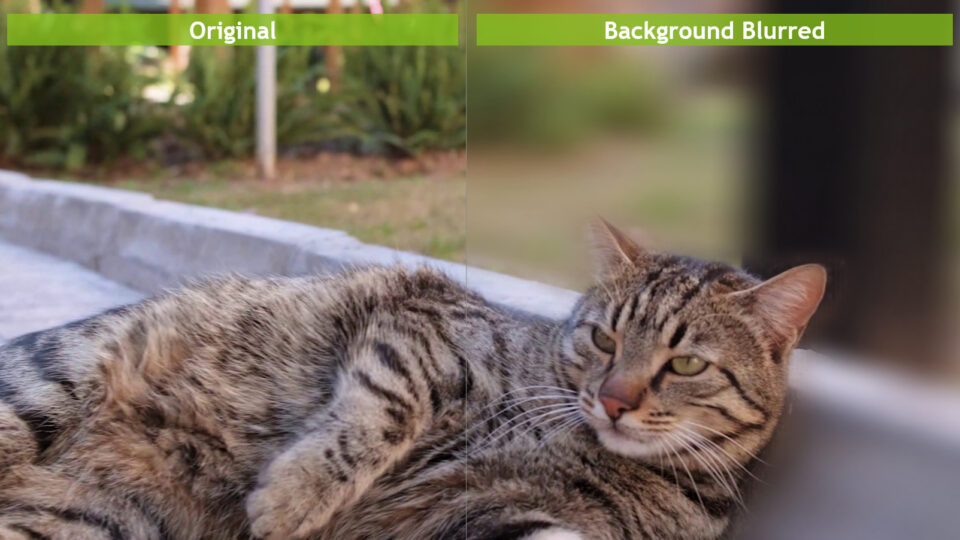

Increasing Throughput and Reducing Costs for AI-Based Computer Vision with CV-CUDA

Real-time cloud-scale applications that involve AI-based computer vision are growing rapidly. The use cases include image understanding, content creation,...

11 MIN READ

May 01, 2023

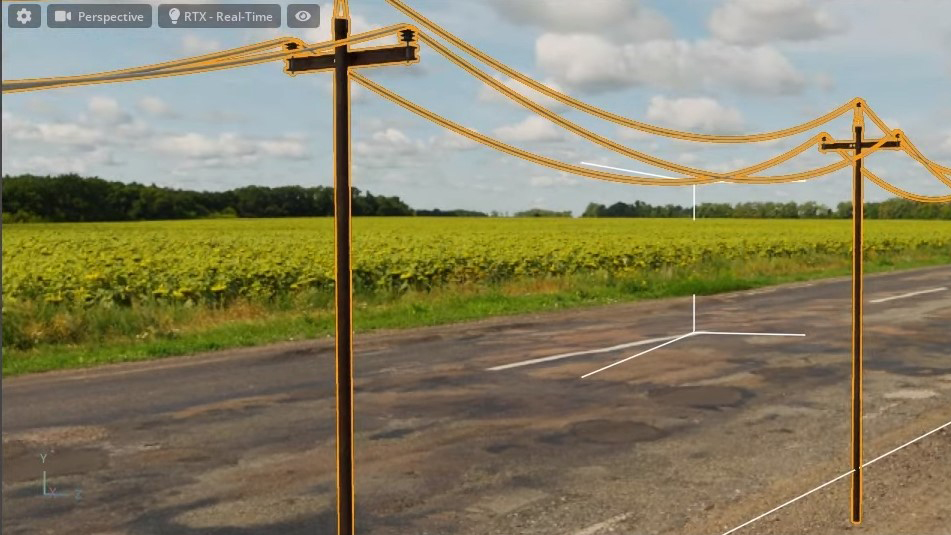

Exelon Uses Synthetic Data Generation of Grid Infrastructure to Automate Drone Inspection

Most drone inspections still require a human to manually inspect the video for defects. Computer vision can help automate and accelerate this inspection...

8 MIN READ

Apr 27, 2023

End-to-End AI for NVIDIA-Based PCs: Optimizing AI by Transitioning from FP32 to FP16

This post is part of a series about optimizing end-to-end AI. The performance of AI models is heavily influenced by the precision of the computational resources...

4 MIN READ

Apr 12, 2023

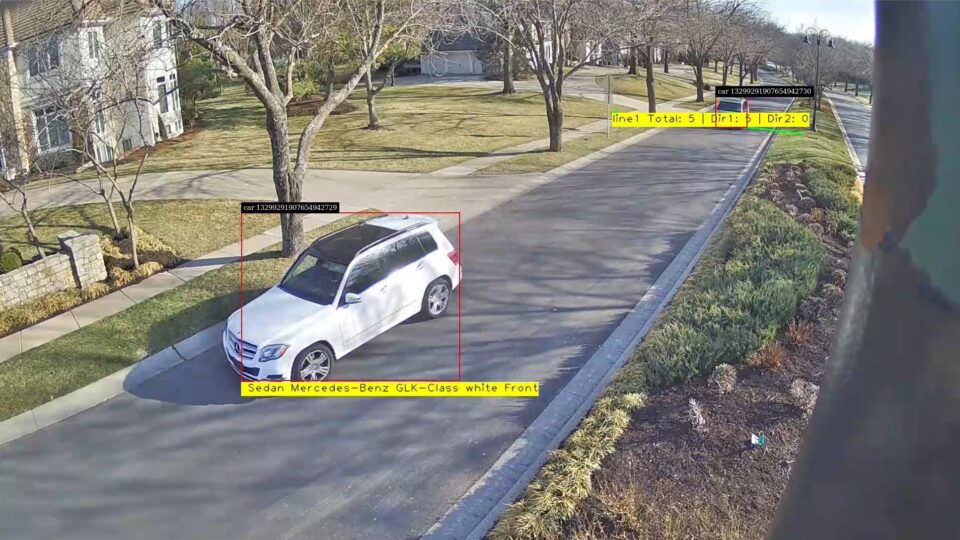

Metropolis Spotlight: Lumeo Simplifies Vision AI Development

Over a billion cameras are deployed in the most important spaces worldwide and these cameras are critical sources of video and data. It is becoming increasingly...

4 MIN READ

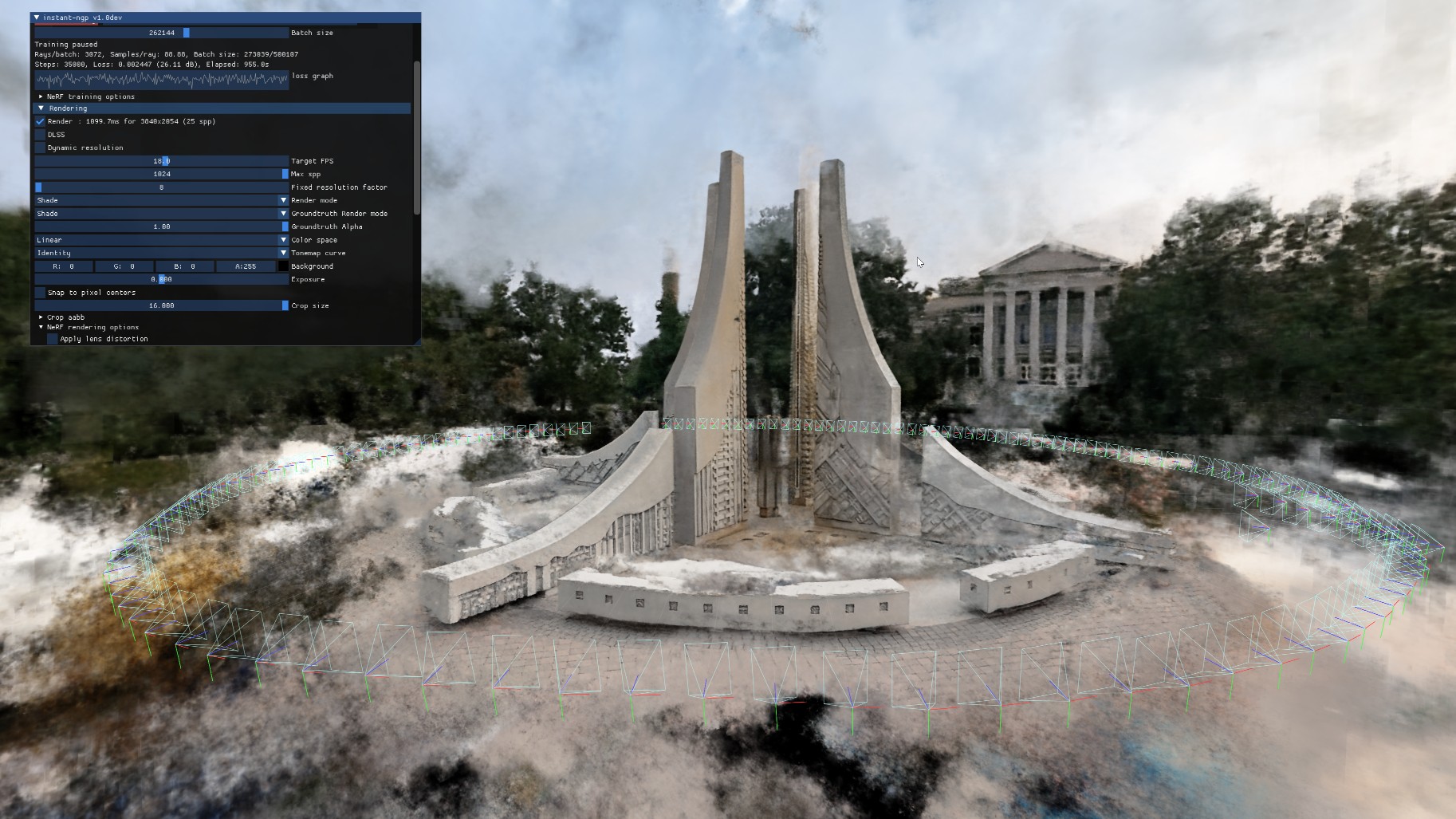

Jan 31, 2023

Turn 2D Images into Immersive 3D Scenes with NVIDIA Instant NeRF in VR

Thousands of developers and content creators have built stunning 3D visuals with NVIDIA Instant NeRF, a rendering tool that turns a set of static images into a...

3 MIN READ

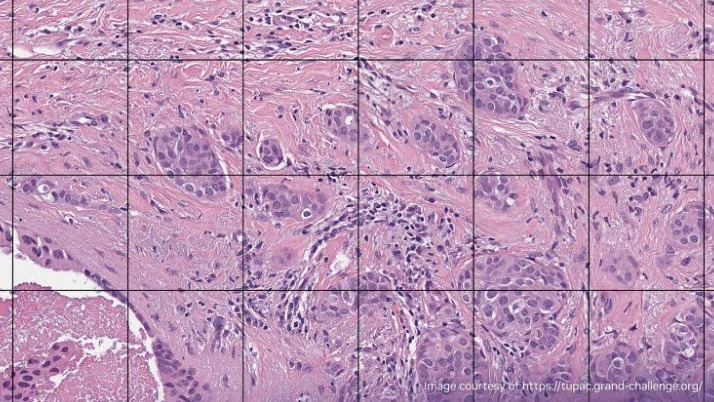

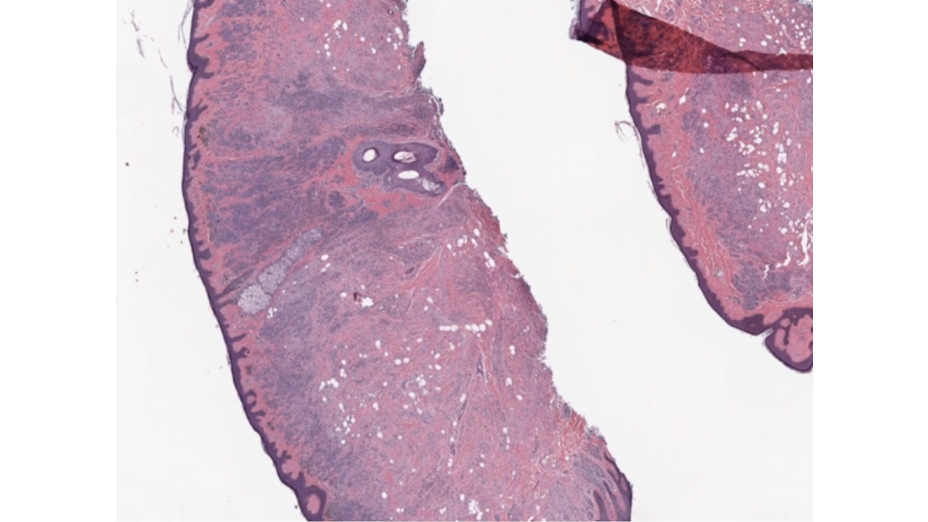

Jan 05, 2023

Accelerating Digital Pathology Workflows Using cuCIM and NVIDIA GPUDirect Storage

Whole slide imaging (WSI), the digitization of tissue on slides using whole slide scanners, is gaining traction in healthcare. WSI enables clinicians in...

10 MIN READ

Nov 30, 2022

Explainer: What Is Denoising?

Denoising is an advanced technique used to decrease grainy spots and discoloration in images while minimizing the loss of quality.

1 MIN READ

Nov 10, 2022

TIME Magazine Names NVIDIA Instant NeRF a Best Invention of 2022

TIME Magazine named NVIDIA Instant NeRF, a technology capable of transforming 2D images into 3D scenes, one of the Best Inventions of 2022. “Before...

3 MIN READ

Sep 28, 2022

AI Model Matches Radiologists’ Accuracy Identifying Breast Cancer in MRIs

Researchers from NYU Langone Health aim to improve breast cancer diagnostics with a new AI model. Recently published in Science Translational Medicine, the...

5 MIN READ

Jul 27, 2022

Enhanced Image Analysis with Multidimensional Image Processing

Image data can generally be described through two dimensions (rows and columns), with a possible additional dimension for the colors red, green, blue (RGB)....

5 MIN READ

Jun 01, 2022

Upcoming Webinar: VPI and PyTorch Interoperability Demo

Join this webinar on June 14 and learn how to program computer vision algorithms using VPI's Python interface.

1 MIN READ