Multi-GPU

Mar 12, 2024

Streamline Live Media Application Development with New Features in NVIDIA Holoscan for Media

NVIDIA Holoscan for Media is a software-defined platform for building and deploying applications for live media. Recent updates introduce a user-friendly...

5 MIN READ

Oct 05, 2023

Just Released: NVIDIA HPC SDK 23.9

This NVIDIA HPC SDK 23.9 update expands platform support and provides minor updates.

1 MIN READ

Sep 14, 2023

Software-Defined Broadcast with NVIDIA Holoscan for Media

The broadcast industry is undergoing a transformation in how content is created, managed, distributed, and consumed. This transformation includes a shift from...

5 MIN READ

Sep 07, 2023

Unlocking Multi-GPU Model Training with Dask XGBoost

As data scientists, we often face the challenging task of training large models on huge datasets. One commonly used tool, XGBoost, is a robust and efficient...

11 MIN READ

Aug 29, 2023

Streamline Generative AI Development with NVIDIA NeMo on GPU-Accelerated Google Cloud

Generative AI has become a transformative force of our era, empowering organizations spanning every industry to achieve unparalleled levels of productivity,...

9 MIN READ

Jun 02, 2023

GPU Integration Propels Data Center Efficiency and Cost Savings for Taboola

When you see a context-relevant advertisement on a web page, it's most likely content served by a Taboola data pipeline. As the leading content recommendation...

13 MIN READ

May 25, 2023

Just Released: NVIDIA HPC SDK v23.5

This update expands platform support and provides minor updates.

1 MIN READ

May 04, 2023

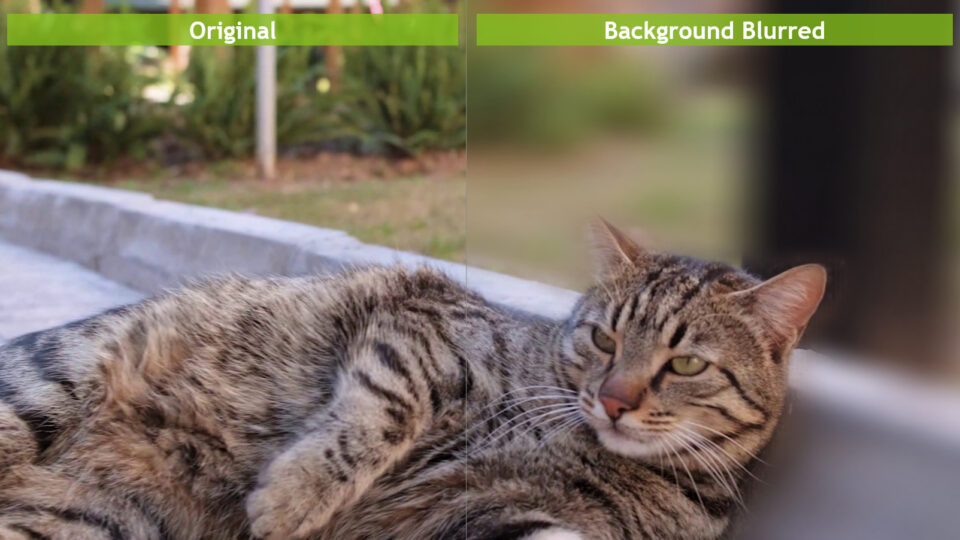

Increasing Throughput and Reducing Costs for AI-Based Computer Vision with CV-CUDA

Real-time cloud-scale applications that involve AI-based computer vision are growing rapidly. The use cases include image understanding, content creation,...

11 MIN READ

Apr 14, 2023

A Guide to CUDA Graphs in GROMACS 2023

GPUs continue to get faster with each new generation, and it is often the case that each activity on the GPU (such as a kernel or memory copy) completes very...

13 MIN READ

Apr 06, 2023

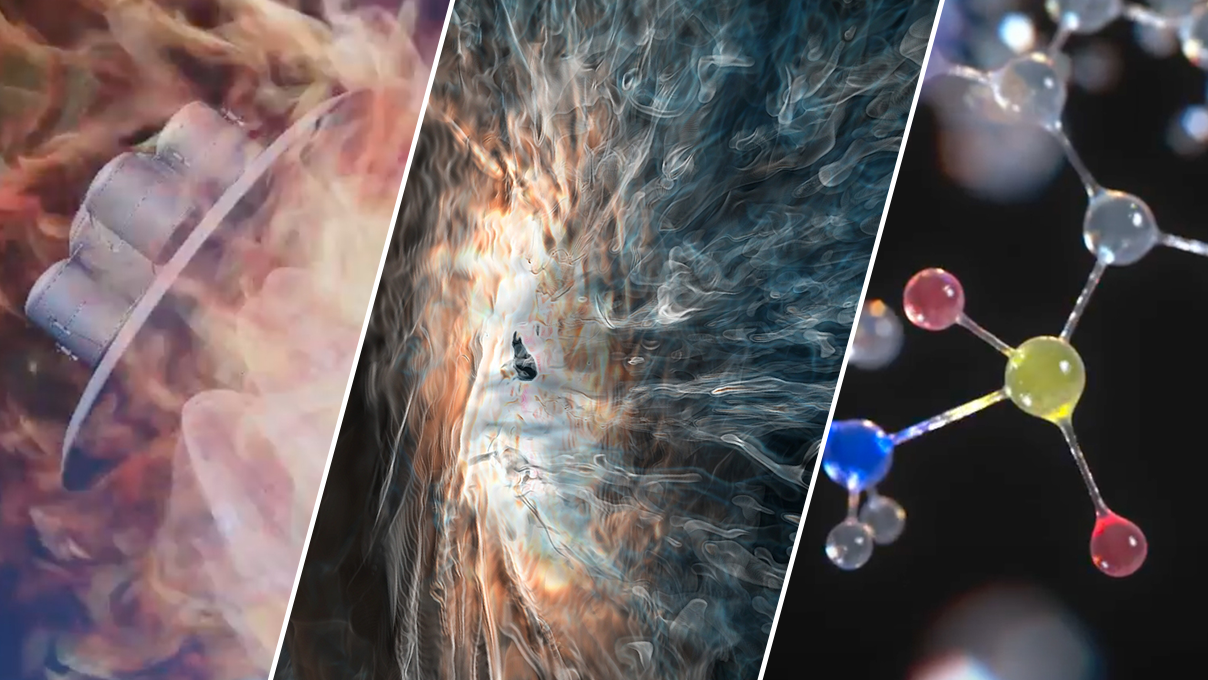

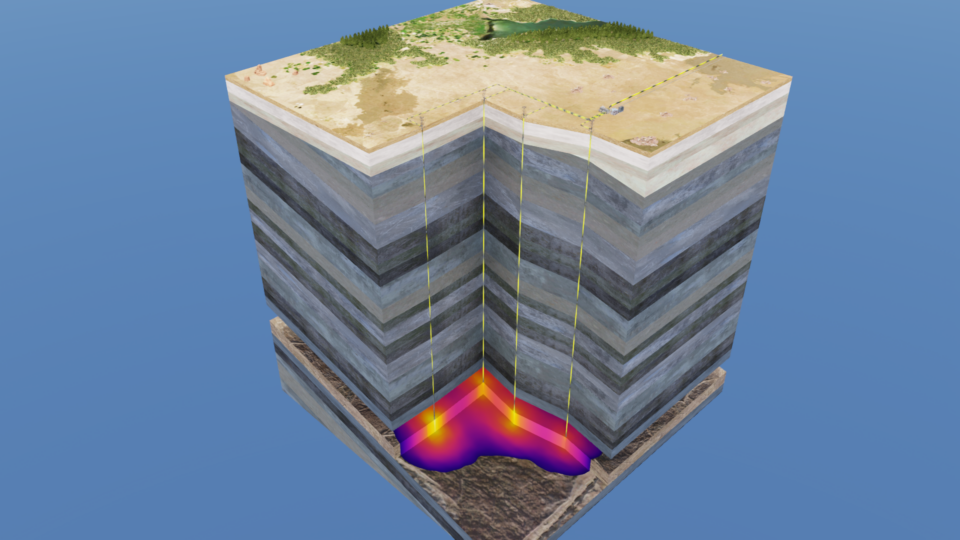

Using Carbon Capture and Storage Digital Twins for Net Zero Strategies

CO2 capture and storage technologies (CCS) catch CO2 from its production source, compress it, transport it through pipelines or by ships, and store it...

13 MIN READ

Apr 03, 2023

Just Released: NVIDIA HPC SDK v23.3

Version 23.3 expands platform support and provides minor updates to the NVIDIA HPC SDK.

1 MIN READ

Jan 25, 2023

Just Released: NVIDIA HPC SDK v23.1

Version 23.1 of the NVIDIA HPC SDK introduces CUDA 12 support, fixes, and minor enhancements.

1 MIN READ

Jan 17, 2023

New Course: Introduction to Graph Neural Networks

Learn the basic concepts, implementations, and applications of graph neural networks (GNNs) in this new self-paced course from NVIDIA Deep Learning Institute.

1 MIN READ

Nov 29, 2022

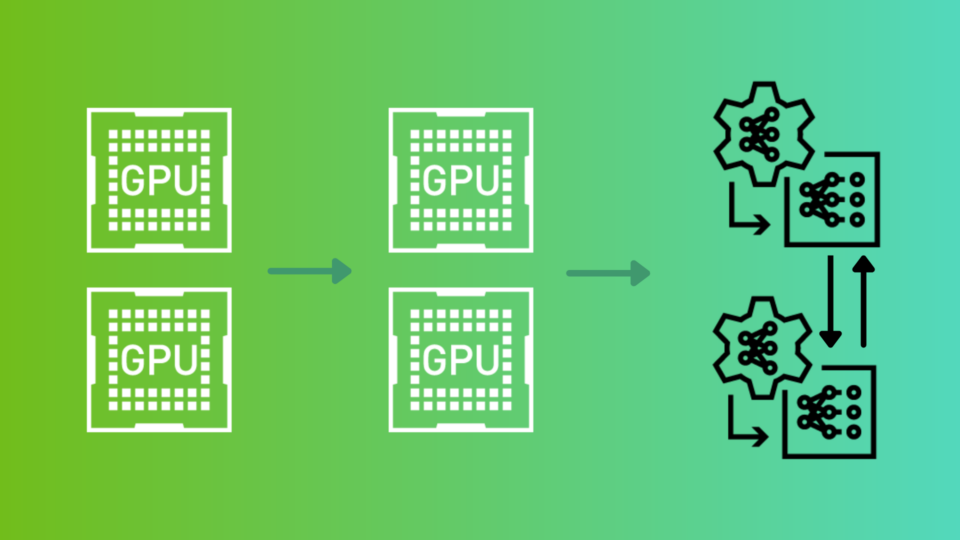

New Workshop: Data Parallelism: How to Train Deep Learning Models on Multiple GPUs

Learn how to decrease model training time by distributing data to multiple GPUs while retaining the accuracy of training on a single GPU, in this new instructor-led workshop.

1 MIN READ

Nov 17, 2022

New Asynchronous Programming Model Library Now Available with NVIDIA HPC SDK v22.11

Celebrating the SuperComputing 2022 international conference, NVIDIA announces the release of the HPC Software Development Kit (SDK) v22.11. Members of the NVIDIA...

4 MIN READ

Oct 13, 2022

Upcoming Workshop: Model Parallelism: Building and Deploying Large Neural Networks (EMEA)

Learn how to train the largest of neural networks and deploy them to production.

1 MIN READ