Performance Optimization

Mar 12, 2024

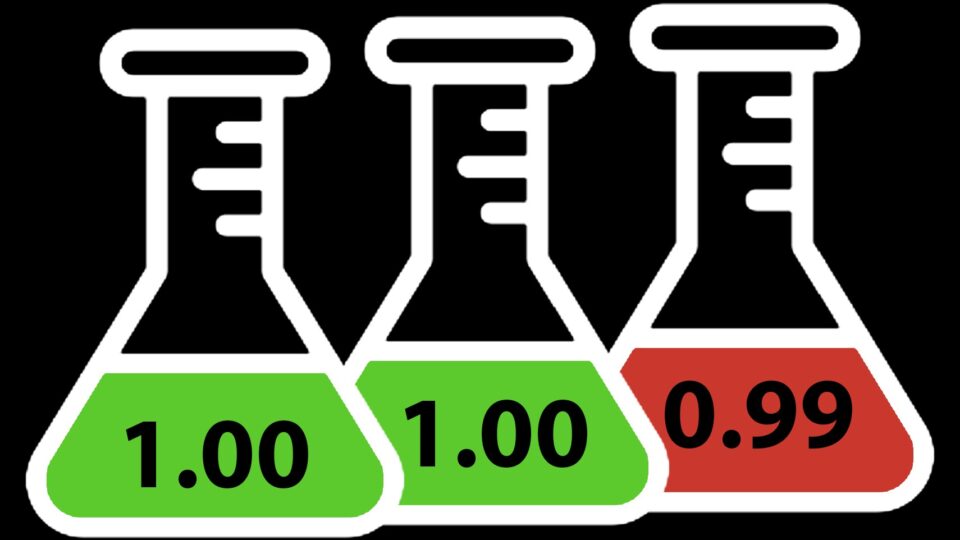

Calculating Video Quality Using NVIDIA GPUs and VMAF-CUDA

Video quality metrics are used to evaluate the fidelity of video content. They provide a consistent quantitative measurement to assess the performance of the...

14 MIN READ

Feb 21, 2024

Limiting CPU Threads for Better Game Performance

Many PC games are designed around an eight-core console with an assumption that their software threading system ‘just works’ on all PCs, especially...

6 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Inference Optimization

In this post, we delve deeper into the inference optimization process to improve the performance and efficiency of our machine learning models during the...

9 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Implementation

To make scene text detection and recognition work on irregular text or for specific use cases, you must have full control of your model so that you can do...

6 MIN READ

Jan 16, 2024

Robust Scene Text Detection and Recognition: Introduction

Identifying and recognizing text in natural scenes and images has become important for use cases like video caption text recognition, detecting signboards...

8 MIN READ

Jan 05, 2024

Improving CUDA Initialization Times Using cgroups in Certain Scenarios

Many CUDA applications running on multi-GPU platforms use a single GPU for their compute needs. In such scenarios, a performance penalty is paid by...

5 MIN READ

Dec 18, 2023

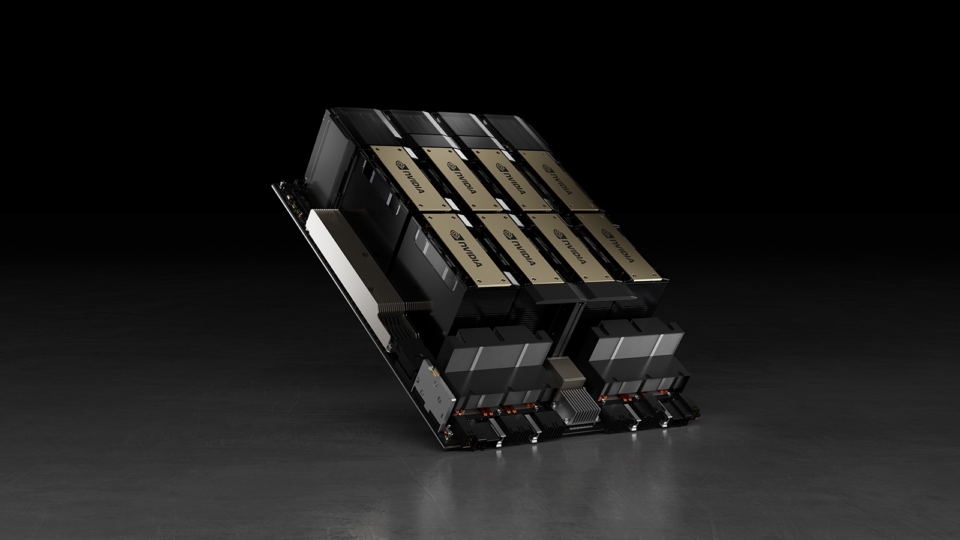

Deploying Retrieval-Augmented Generation Applications on NVIDIA GH200 Delivers Accelerated Performance

Large language model (LLM) applications are essential in enhancing productivity across industries through natural language. However, their effectiveness is...

10 MIN READ

Dec 14, 2023

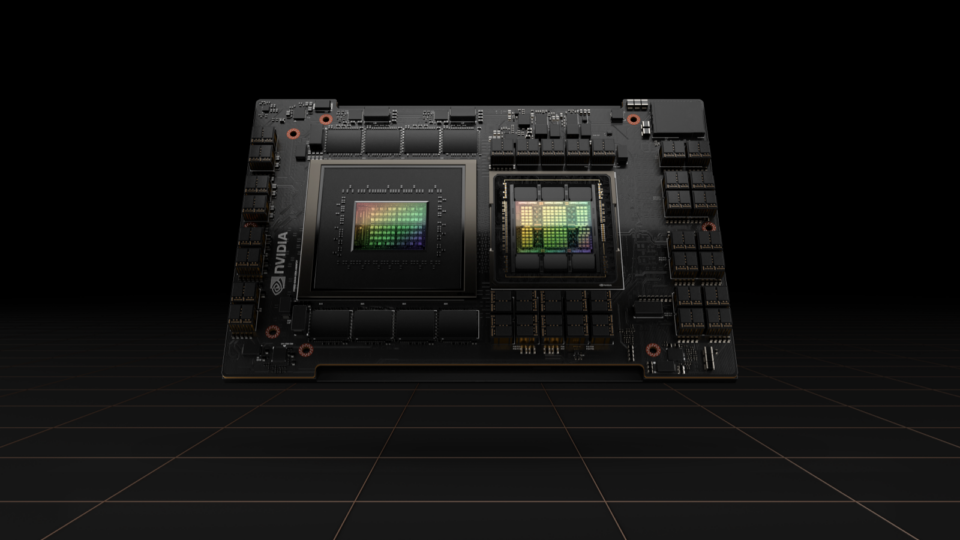

Achieving Top Inference Performance with the NVIDIA H100 Tensor Core GPU and NVIDIA TensorRT-LLM

Best-in-class AI performance requires an efficient parallel computing architecture, a productive tool stack, and deeply optimized algorithms. NVIDIA released...

4 MIN READ

Oct 02, 2023

Accelerated Vector Search: Approximating with RAPIDS RAFT IVF-Flat

Performing an exhaustive exact k-nearest neighbor (kNN) search, also known as brute-force search, is expensive, and it doesn’t scale particularly well to...

15 MIN READ

Sep 11, 2023

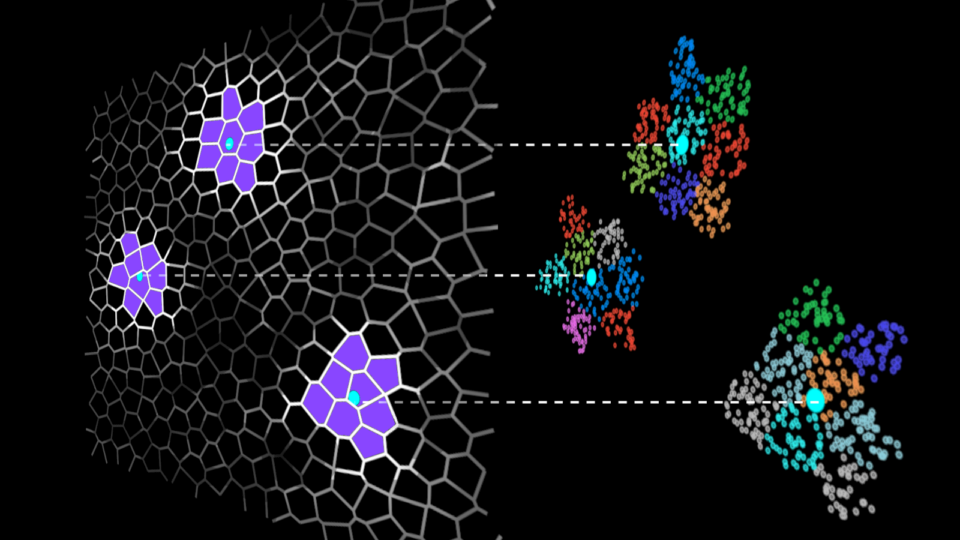

Accelerating Vector Search: Fine-Tuning GPU Index Algorithms

In this post, we dive deeper into each of the GPU-accelerated indexes mentioned in part 1 and give a brief explanation of how the algorithms work, along with a...

12 MIN READ

Sep 11, 2023

Accelerating Vector Search: Using GPU-Powered Indexes with RAPIDS RAFT

In the AI landscape of 2023, vector search is one of the hottest topics due to its applications in large language models (LLMs) and generative AI. Semantic...

11 MIN READ

Sep 06, 2023

GPUs for ETL? Optimizing ETL Architecture for Apache Spark SQL Operations

Extract-transform-load (ETL) operations with GPUs using the NVIDIA RAPIDS Accelerator for Apache Spark running on large-scale data can produce both cost savings...

8 MIN READ

Jul 17, 2023

GPUs for ETL? Run Faster, Less Costly Workloads with NVIDIA RAPIDS Accelerator for Apache Spark and Databricks

We were stuck. Really stuck. With a hard delivery deadline looming, our team needed to figure out how to process a complex extract-transform-load (ETL) job on...

7 MIN READ

Jul 11, 2023

Accelerated Data Analytics: Machine Learning with GPU-Accelerated Pandas and Scikit-learn

If you are looking to take your machine learning (ML) projects to new levels of speed and scalability, GPU-accelerated data analytics can help you deliver...

14 MIN READ

Jul 10, 2023

In-Game GPU Profiling for DirectX 12 Using SetBackgroundProcessingMode

If you are a DirectX 12 (DX12) game developer, you may have noticed that GPU times displayed in real time in your game HUD may change over time for a given...

4 MIN READ

Jun 28, 2023

Improving GPU Performance by Reducing Instruction Cache Misses

GPUs are specially designed to crunch through massive amounts of data at high speed. They have a large number of compute resources, called streaming...

11 MIN READ