parallel programming

Mar 06, 2024

How to Accelerate Quantitative Finance with ISO C++ Standard Parallelism

Quantitative finance libraries are software packages that consist of mathematical, statistical, and, more recently, machine learning models designed for use in...

10 MIN READ

Feb 01, 2024

Just Released: NVIDIA HPC SDK v24.1

This NVIDIA HPC SDK update includes the cuBLASMp preview library, along with minor bug fixes and enhancements.

1 MIN READ

Dec 01, 2023

Webinar: Analysis of OpenACC Validation and Verification Testsuite

On December 7, learn how to verify OpenACC implementations across compilers and system architectures with the validation testsuite.

1 MIN READ

Nov 13, 2023

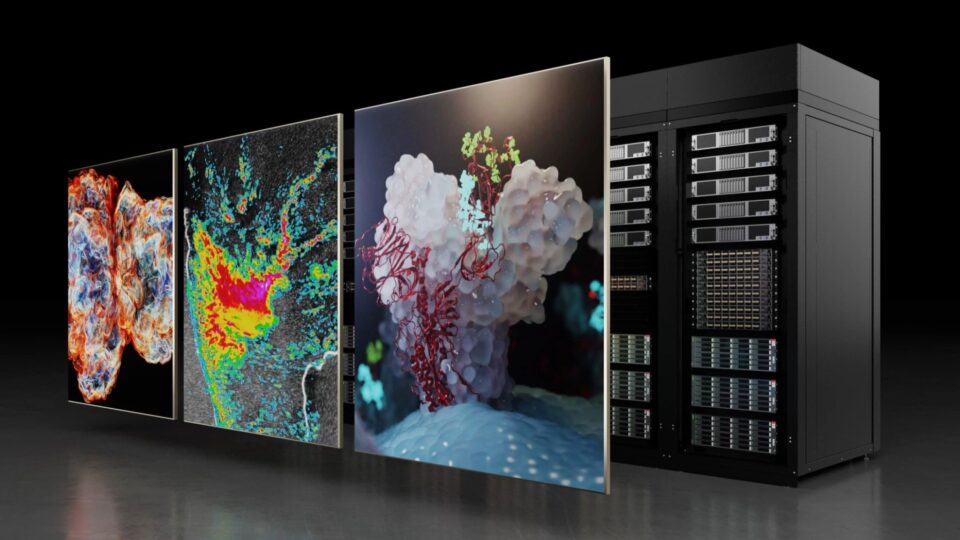

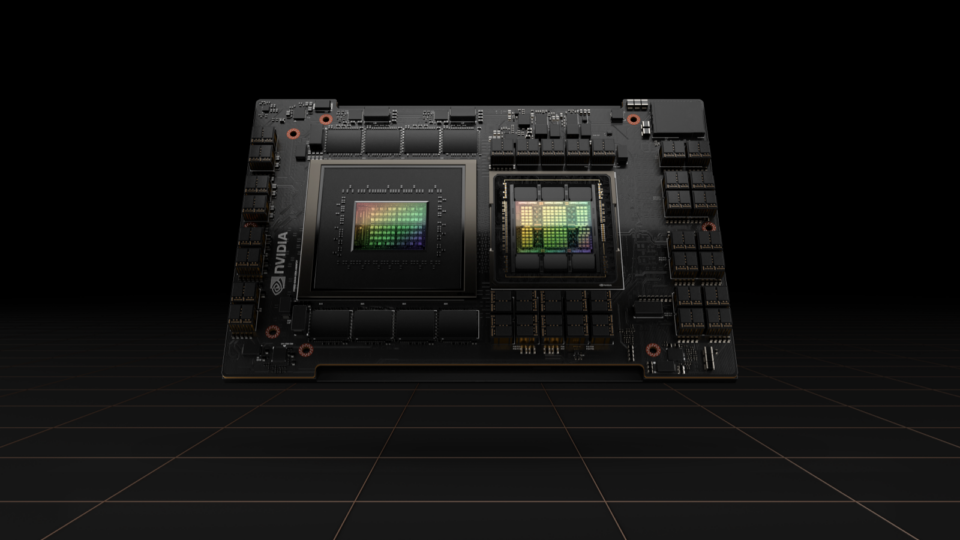

Simplifying GPU Programming for HPC with NVIDIA Grace Hopper Superchip

The new hardware developments in NVIDIA Grace Hopper Superchip systems enable some dramatic changes to the way developers approach GPU programming. Most...

17 MIN READ

Nov 13, 2023

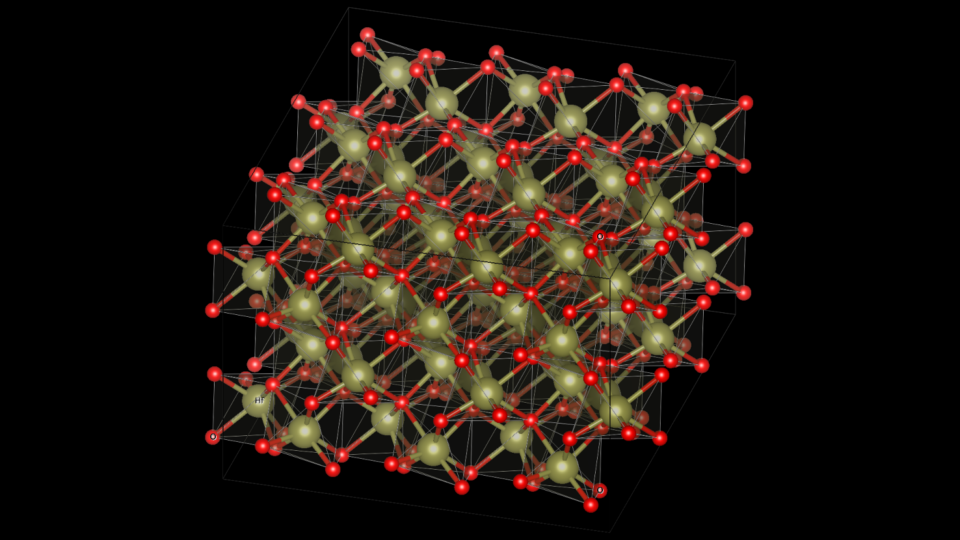

Optimize Energy Efficiency of Multi-Node VASP Simulations with NVIDIA Magnum IO

Computational energy efficiency has become a primary decision criterion for most supercomputing centers. Data centers, once built, are capped in terms of the...

17 MIN READ

Oct 05, 2023

Just Released: NVIDIA HPC SDK 23.9

This NVIDIA HPC SDK 23.9 update expands platform support and provides minor updates.

1 MIN READ

Jul 31, 2023

Just Released: NVIDIA HPC SDK v23.7

NVIDIA HPC SDK version 23.7 is now available and provides minor updates and enhancements.

1 MIN READ

Jun 22, 2023

Model Parallelism and Conversational AI Workshops

Join these upcoming workshops to learn how to train large neural networks, or build a conversational AI pipeline.

1 MIN READ

May 25, 2023

Just Released: NVIDIA HPC SDK v23.5

This update expands platform support and provides minor updates.

1 MIN READ

Apr 03, 2023

Just Released: NVIDIA HPC SDK v23.3

Version 23.3 expands platform support and provides minor updates to the NVIDIA HPC SDK.

1 MIN READ

Jan 25, 2023

Just Released: NVIDIA HPC SDK v23.1

Version 23.1 of the NVIDIA HPC SDK introduces CUDA 12 support, fixes, and minor enhancements.

1 MIN READ

Nov 17, 2022

New Asynchronous Programming Model Library Now Available with NVIDIA HPC SDK v22.11

Celebrating the SuperComputing 2022 international conference, NVIDIA announces the release of HPC Software Development Kit (SDK) v22.11. Members of the NVIDIA...

4 MIN READ

Nov 15, 2022

Scaling VASP with NVIDIA Magnum IO

You could make an argument that the history of civilization and technological advancement is the history of the search and discovery of materials. Ages are...

22 MIN READ

Oct 12, 2022

Just Released: HPC SDK v22.9

This version 22.9 update to the NVIDIA HPC SDK includes fixes and minor enhancements.

1 MIN READ

Sep 15, 2022

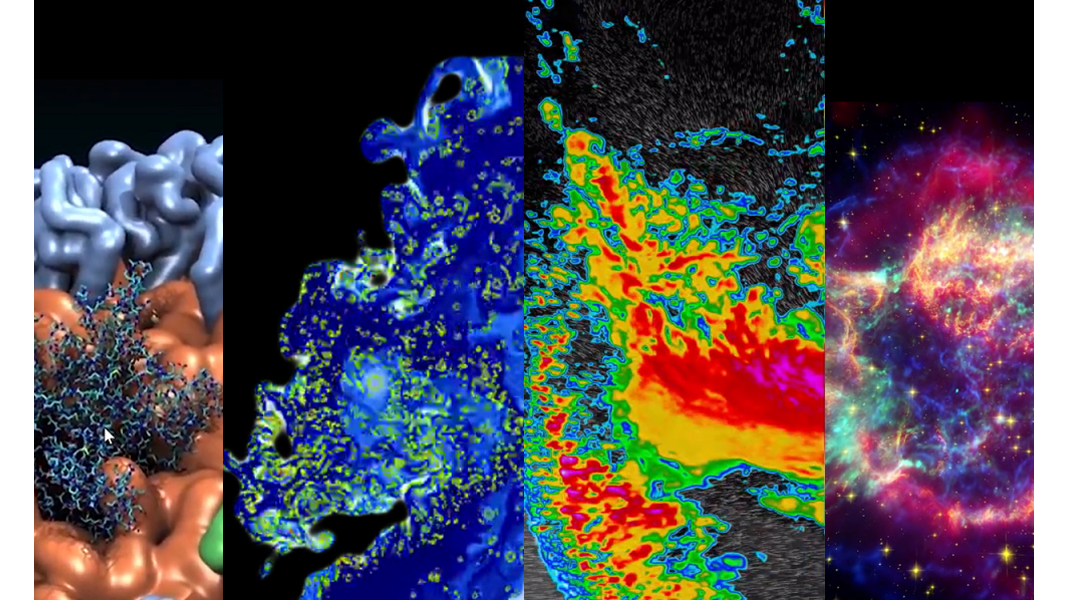

Top HPC Sessions at GTC 2022

Learn about new CUDA features, digital twins for weather and climate, quantum circuit simulations, and much more with these GTC 2022 sessions.

1 MIN READ

Jul 27, 2022

Just Released: New Arm CPU Support and Advancements in HPC SDK 22.7

This release includes enhancements, fixes, and new support for Arm SVE, Rocky Linux OS, and Amazon EC2 C7g instances, powered by the latest generation AWS...

1 MIN READ