The NVIDIA DriveWorks SDK contains a collection of CUDA-based low level point cloud processing modules optimized for NVIDIA DRIVE AGX platforms. The DriveWorks Point Cloud Processing modules include common algorithms that any AV developer working with point cloud representations would need, such as accumulation and registration. Figure 1 shows NVIDIA test vehicles outfitted with lidar.

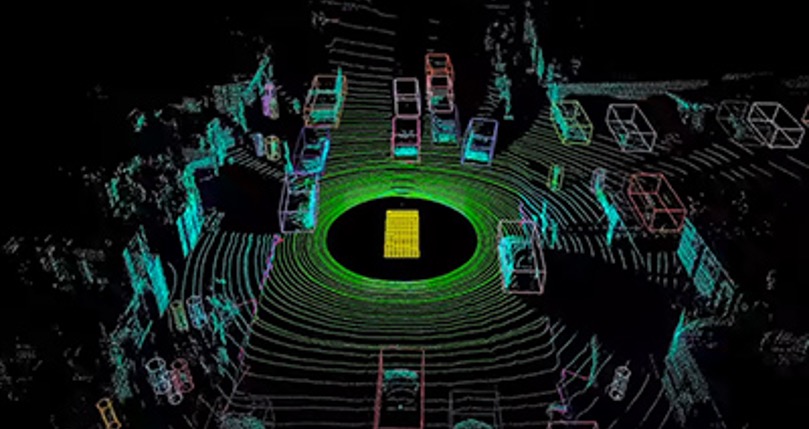

Certain types of automotive sensors, including lidars and radars, represent the spatial environment around the vehicle as a collection of 3-dimensional points. This is known as a point cloud. Point clouds sample the surface of objects around the vehicle in high detail, making them well-suited for use in higher-level obstacle perception, mapping, localization, and planning algorithms.

Point cloud processing onboard the autonomous vehicle (AV) must be fast enough to enable it to sense and react to changing environments in order to meet safety requirements for self-driving cars. It must also be robust enough to handle sparse and noisy data.

The Point Cloud Processing modules are compatible with the DriveWorks Sensor Abstraction Layer (SAL). The modules will therefore work with any supported automotive sensor that outputs a stream of samples, whether natively supported or enabled through the DriveWorks Sensor Plugin Framework. Though the modules can process point clouds from any SAL-compatible sensor, the algorithms inherently give the best performance with sensors that output denser point clouds. Additionally, while we optimize the modules for lidar data, we also assume they work with other supported sensor types such as radar.

As with the rest of the DriveWorks SDK, the Point Cloud Processing modules expose well-defined APIs and data structures. This enables developers to interface modules with their own software stack, reducing custom code and development time.

DriveWorks SDK Point Cloud Processing Modules

DRIVE Software 8.0 introduced the following CUDA-based Point Cloud Processing capabilities. The Point Cloud Processing capabilities will continue to evolve and will be delivered in subsequent releases.

- Accumulation. Collects a specified number of decoded packets per each lidar spin. Organizes the resulting point cloud into a 2D grid for more efficient downstream processing. Accumulation also optimally corrects for motion distortion during packet accumulation.

- Stitching. Supports combining point clouds from different sensors into a common coordinate system. Also handles offset correction between multiple point clouds coming from different sensors to reduce motion artifacts.

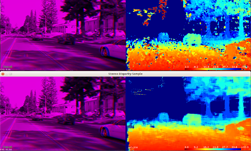

- Range Image Creator. Generates a 2-dimensional image from a point cloud and supports both organized and unorganized point clouds.

- Iterative Closest Point (ICP). Calculates the rigid transformation between two accumulated point clouds using Iterative Closest Points (ICP) algorithm. Provides two different ICP variants: KD Tree and Depth Map. This allows developers to use the variant best suited for their case.

- Plane Extractor. Estimates a 3D plane from point cloud data using random sample consensus (RANSAC) fitting and non-linear optimization; easily configurable parameters for efficiency tuning.

Point Cloud Processing In Action

Let’s look at an example that demonstrates the DriveWorks Point Cloud Processing capabilities.

The demonstration first stitches point clouds from two Velodyne HDL-32E lidars and one Velodyne HDL-64E lidar. Stitching multiple point clouds requires knowledge of the calibration parameters of the individual lidars, precise time synchronization between the sensors, and good estimation of the vehicle’s motion since the last stitching time point. The DriveWorks Self-Calibration module estimates the lidars’ orientation with respect to the vehicle by continuously observing the deviation of the nominal lidar calibration with respect to the car. The DriveWorks Egomotion module, on the other hand, uses IMU and odometry information to estimate the vehicle’s movement between any two timestamps with high confidence and low drift rate.

The sample generates a range image and an organized point cloud using the resultant fused point cloud. This output point cloud is then used to compute the rigid transformation between two temporally-adjacent point clouds via the GPU-based iterative closest points (ICP) module.

Now let’s check out some code which shows how you can implement the described workflow using the DriveWorks SDK.

First, we need to initialize DriveWorks’ PointCloudProcessing components and required buffers to store the results:

void initializePointCloudProcessing(){

// allocate point cloud buffer to store accumulated points

gAccumulatedPoints.type = DW_MEMORY_TYPE_CUDA;

gAccumulatedPoints.capacity = gLidarProperties.pointsPerSpin;

dwPointCloud_createBuffer(&gAccumulatedPoints);

// initialize DriveWorks PointCloudAccumulator module which will accumulate individual lidar packets to a point cloud

dwPointCloudAccumulatorParams accumParams{};

accumParams.egomotion = gEgomotion;

accumParams.memoryType = DW_MEMORY_TYPE_CUDA;

accumParams.enableMotionCompensation = true;

dwPointCloudAccumulator_initialize(&gAccumulator, &accumParams, &gLidarProperties, gContext);

// allocate point cloud buffer to store stitched points

gStitchedPoints.type = DW_MEMORY_TYPE_CUDA;

gStitchedPoints.capacity = getNumberOfPointsPerSpinFromAllLidars();

dwPointCloud_createBuffer(&gStitchedPoints);

// initialize DriveWorks PointCloudStitcher which will combine multiple point clouds into one

dwPointCloudStitcher_initialize(&gStitcher, gContext);

// allocate buffer to generate depthmap representation from lidar points

gDepthMap.type = DW_MEMORY_TYPE_CUDA;

gDepthMap.capacity = getDepthmapWidth() * getDepthmapHeight();

dwPointCloud_createBuffer(&gDepthMap);

// create RangeImageCreator which will generate 2.5D depthmap as well as range image representation from unorganized accumulated points

dwPointCloudRangeImageCreator_initialize(&gRangeImageCreator, &gRangeImageCreatorProperties, gContext);

// initialize DriveWorks ICP module

dwPointCloudICPParams icpParams{};

icpParams.icpType = DW_POINT_CLOUD_ICP_TYPE_DEPTH_MAP; // optimized ICP for 2.5D depthmap representation of a point cloud

dwPointCloudICP_initialize(&gICP, &icpParams, gContext);

}

After initializing all components, we execute the main loop of the application. In the main loop, we grab data from sensors, feed it to the point cloud accumulators, run the point cloud stitcher, create range images from the motion compensated stitcher result, and then execute ICP given the current and previous stitched point clouds:

void runOneIteration(){

// repeat until we have all lidars collected

// one would usually differentiate the accumulator for each lidar and feed it with events coming from the corresponding LIDAR

while(!accumulatedAllRequiredLidars())

{

const dwSensorEvent* ev = nullptr;

dwSensorManager_acquireNextEvent(&ev, QUERY_TIMEOUT, gSensorManager);

// for any new lidar measurement we run them through the pipeline

if (ev->type == DW_SENSOR_LIDAR)

{

// bind new lidar frame as input to the accumulator

// when method return DW_SUCCESS we have enough points collected to represent full spin

if (DW_SUCCESS == dwPointCloudAccumulator_bindInput(ev->lidFrame, gPointCloudAccumulator))

{

// bind output of the accumulator, this can be done once during initialization time if output buffer is fixed

dwPointCloudAccumulator_bindOutput(&gAccumulatedPoints, gAccumulator);

// perform accumulation of the packets of full spin into a point cloud

dwPointCloudAccumulator_process(gPointCloudAccumulator);

registrationTime = ev->hostTimestamp;

}

// for any non-lidar event they shall be consumed by Egomotion

}else

{

consumeNonLidarEvent(ev);

}

dwSensorManager_releaseAcquiredEvent(ev, gSensorManager);

}

// associate input and output of the stitcher

// the call can happen once during initialization, if output/input buffers are not changing

gStitcherInput.pointCloud[0] = &gAccumulatedPoints;

gStitcherInput.transformation[0] = getLidarPose(0); // orientation/position of lidar 0 in a common coordinate system

gStitcherInput.count = 1; // up-to 10 possible accumulated point clouds

dwPointCloudStitcher_bindInput(&gStitcherInput, gStitcher);

// run stitcher to process all accumulated point clouds

dwPointCloudStitcher_bindOutput(&gStitchedPoints, gStitcher);

dwPointCloudStitcher_process(gStitcher);

// use output of the stitcher to generate the depthmaps

dwPointCloudRangeImageCreator_bindInput(gStitchedPoints, gRangeImageCreator);

// we want to have a 3D point cloud representation as well as 2.5D depthmap image

dwPointCloudRangeImageCreator_bindOutput(&gStichedDepthmap3D, gStitchedDepthmapImage, gRangeImageCreator);

// run range image creator to generate range images from the stitched point cloud

dwPointCloudRangeImageCreator_process(gRangeImageCreator);

// query Egomotion module for the motion experienced by the car between now and previous stitched pointcloud

dwTransformation3f icpInitialPose = DW_IDENTITY_TRANSFORMATION3F;

dwTransformation3f icpRefinedPose = DW_IDENTITY_TRANSFORMATION3F;

dwEgomotion_computeRelativeTransformation(&icpInitialPose, nullptr, gStitchedDepthmap3D.timestamp, gStitchedDepthmap3DPrev.timestamp, gEgomotion);

// input to ICP is the current and previous stitched point cloud with a prior for the motion as derived from egomotion

// output is the refined pose

dwPointCloudICP_bindInput(&gStitchedDepthmap3D, &gStitchedDepthmap3DPrevious, &icpInitialPose, gICP);

dwPointCloudICP_bindOutput(&icpRefinedPose, gICP);

dwPointCloudICP_process(gICP);

// ping-pong between previous and current stitched point clouds

swap(gStitchedDepthmap3D, gStitchedDepthmap3DPrev);

...

}

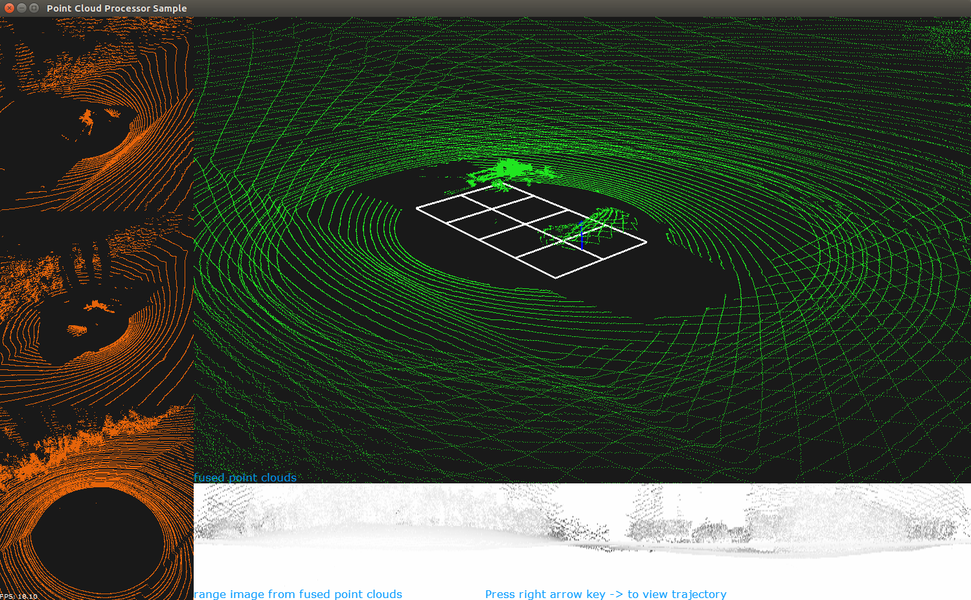

As the animation in Figure 3 shows, the sample opens a window to render three orange colored point clouds in the left column, one for each of the vehicle’s lidars. The fused point cloud renders to the right side of the window, in green. The bottom of the window shows the range image generated from the fused point cloud.

NVIDIA includes the Point Cloud Processing sample plus its source code in each DriveWorks release. It runs right out of the box and can be used as a starting point for developing AV point cloud solutions.

How to get the NVIDIA DriveWorks SDK

Members of the NVIDIA DRIVE Early Access Developer Program currently have exclusive access to the DriveWorks SDK. Your company or university must have current legal agreements on file. Please contact your Account Manager (or complete this form) to ensure necessary agreements have been signed before requesting to join the program. Users may only join with a corporate or university email address; register for the NVIDIA DRIVE Early Access Developer Program.