Pixvana’s cloud-based VR pipeline now incorporates the NVIDIA VRWorks 360 Video SDK. Pixvana strives to solve a number of challenges facing the VR creator by leveraging the power of cloud computing. Highlights of Pixvana’s VR pipeline include:

- A video player with spatialized audio for all the major VR headsets (Oculus Go, Oculus Rift, HTC Vive, Google Daydream and Samsung GearVR) allows for convenient video distribution.

- Post-processing tools and options to provide the best quality 360 video on headsets.

- Stitching tools (using VRWorks) to create 360 videos from various camera systems.

- A web-based platform enables sharing, editing, and resource management from a Chrome browser.

- Kiosk and presenter modes streamline group casting in cinema and training scenarios.

- Customizable interactive videos allow hyperports, graphic overlays, and testing.

- In-headset editing tools to create interactive videos.

This post provides an overview of the fundamentals of 360 video production. We’ll also zoom in on Pixvana’s suite of cloud-based tools as well as some key processes in VRWorks with the goal of helping VR creators leverage these programs to make the most of their 360/VR projects.

Creating 360 Video

Pixvana focuses on making 360 video more accessible for creators without sacrificing in-headset video quality. High quality 360 video requires multiple cameras or multiple lenses and sensors in a specialized camera rig to capture all angles of an environment. Figure 1 shows two examples of multi-lens, multi-sensor 360-degree cameras.

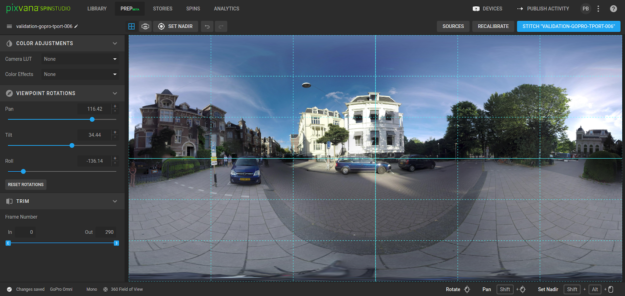

These video views need to be aligned, exposure compensated, and combined in a process called “stitching”, as seen in figure 2. Additional post-production features allow editors to refine, rotate, and color correct the image. Stitching and processing the series of images results in an equirectangular video, typically 8K or larger.

While equirectangular is the standard format for 360 video, it has significant drawbacks, including wasted pixels at the zenith and nadir of the image. So we support multiple original specialty formats in addition to supporting equirectangular video. These include different geometric shapes and packaging, such as diamond plane and FOVAS (Field of View Adaptive Streaming). Diamond plane is a special projection format that reduces data size. FOVAS uses a special tiling format and streaming technology that places high-resolution content in the active field of view, even as the user turns their head. These formats overcome the constraints of standard video in VR headsets with the ultimate goal of producing higher quality video at a lower bandwidth.

Figure 3 shows an equirectangular projection of a beach scene. Notice the extreme distortion at both the top and bottom of the image. These pixels are oversampled and require more bandwidth to stream, which is wasteful.
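
The amount of oversampling can be estimated from the geometry alone. The sketch below is an illustration, not part of Pixvana's pipeline; the function names are hypothetical. It assumes that every row of an equirectangular image stores the same number of pixels while the circle of latitude it covers shrinks with the cosine of the latitude.

```cpp
#include <cassert>
#include <cmath>

// Horizontal oversampling of an equirectangular row relative to the equator.
// Each row has the same pixel count, but the circle it represents has a
// circumference proportional to cos(latitude), so the factor is 1 / cos.
double equirectOversampling(double latitudeDegrees) {
    assert(latitudeDegrees > -90.0 && latitudeDegrees < 90.0);
    const double kPi = 3.14159265358979323846;
    return 1.0 / std::cos(latitudeDegrees * kPi / 180.0);
}

// Fraction of the whole frame that is redundant compared with sampling the
// sphere everywhere at equator density. The image covers 2*pi by pi (area
// 2*pi^2) while the unit sphere has area 4*pi, so the useful share is 2/pi.
double equirectWastedFraction() {
    const double kPi = 3.14159265358979323846;
    return 1.0 - 2.0 / kPi;
}
```

Under these assumptions a row at 60 degrees latitude carries twice the pixels it needs, and a little over a third of the frame is redundant overall.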

Figure 4 shows a diamond plane re-arrangement in which the image is projected onto an icosahedron. The facets of the image are rearranged to fit a plane. This projection addresses the oversampling problem, making the resulting image smaller and therefore more efficient for streaming.

The FOVAS method requires creating multiple views of the same video, each visually tuned to a specific viewing direction. The player switches as needed to the correct video.
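
The switching logic can be pictured with a small sketch. This is not Pixvana's player code: it assumes each view is described only by the yaw angle it was tuned for, and `yawDistance` and `selectView` are hypothetical names. A real player would likely also consider pitch and add hysteresis to avoid rapid switching.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Smallest absolute difference between two yaw angles, in degrees [0, 180].
double yawDistance(double a, double b) {
    const double d = std::fmod(std::fabs(a - b), 360.0);
    return d > 180.0 ? 360.0 - d : d;
}

// Returns the index of the view whose tuned yaw is closest to the gaze yaw.
std::size_t selectView(const std::vector<double>& viewYaws, double gazeYaw) {
    assert(!viewYaws.empty());
    std::size_t best = 0;
    for (std::size_t i = 1; i < viewYaws.size(); ++i) {
        if (yawDistance(viewYaws[i], gazeYaw) <
            yawDistance(viewYaws[best], gazeYaw)) {
            best = i;
        }
    }
    return best;
}
```

With four views tuned to 0, 90, 180, and 270 degrees, a gaze at 350 degrees selects the first view because the distance wraps around the circle.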

Regardless of format, the next step in VR video production is creating multiple versions of the file at different bitrates so they can stream as variable bitrate videos, as well as specialized downloadable exports. All of these processes (stitching, color correction, special formats, and multiple-bitrate versions) can take advantage of both the cloud and GPUs to generate the required outputs quickly and efficiently.
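
To show why several bitrate versions are useful, here is a minimal sketch of how a player might choose among them. The selection rule (highest version that fits the measured bandwidth) and the name `pickRendition` are assumptions for illustration; the source does not describe the rule Pixvana's player actually uses.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// ladderKbps must be sorted in ascending order. Returns the index of the
// highest-bitrate version that does not exceed the measured bandwidth,
// falling back to the lowest version when none fits.
std::size_t pickRendition(const std::vector<int>& ladderKbps,
                          int measuredKbps) {
    assert(!ladderKbps.empty());
    std::size_t choice = 0;
    for (std::size_t i = 0; i < ladderKbps.size(); ++i) {
        if (ladderKbps[i] <= measuredKbps) {
            choice = i;
        }
    }
    return choice;
}
```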

Pixvana’s cloud-powered system: distribution, post-production, and processing

Distribution represents one of the chief challenges for a surround video creator. The current state of the art is side-loading video onto individual devices. This takes considerable time and requires the headset and the video producer to be in the same location. Pixvana’s SPIN Play capability simplifies this workflow, allowing for remote management and the ability to track changes when connected to the internet. It also allows for full content download onto the paired headset and playback when not connected to the network.

There are two SPIN Play modes: Share Mode and Guide Mode. Share Mode is intended for short-term viewing, such as collaborating with a remote colleague. The viewer using Share Mode enters a unique nine-character code into the headset player, as shown in figure 5. This code identifies the playlist, which the user then watches. The headset wearer is in complete control and can easily exit the sharing session.

Guide Mode targets a more managed experience, such as a VR cinema or a corporate training scenario. The headset running Guide Mode tracks the online content, responds to changes, and can be put into a managed playback mode. A single presenter can synchronize dozens of headsets, start and stop playback, and even track the gazes of all users. These special functions make it ideal for VR film festivals and enterprise clients alike. Figure 6 shows the Guide Mode management screen.

We’ve added tools to create interactive videos, as shown in figure 7, making 360 videos more useful and engaging for enterprise creators. Producers edit interactive videos in VR, allowing users to place hyperports, graphics, and text in the final viewing environment from within the headset.

The processing power necessary to handle VR videos remains a significant problem facing VR creators. A typical VR camera rig consists of six or more overlapping, high-resolution video cameras. Whatever the editing decisions, the footage from these cameras must be aligned, combined, and rendered into a final ultra-high-resolution (8K) video, which takes a significant amount of processing power. An NVIDIA GPU is the right tool for the job, but the horsepower and time required tie up the workstation while rendering. Some editors queue up a number of renders at the end of the day to process overnight, resulting in an interrupted workflow not suited to creativity.

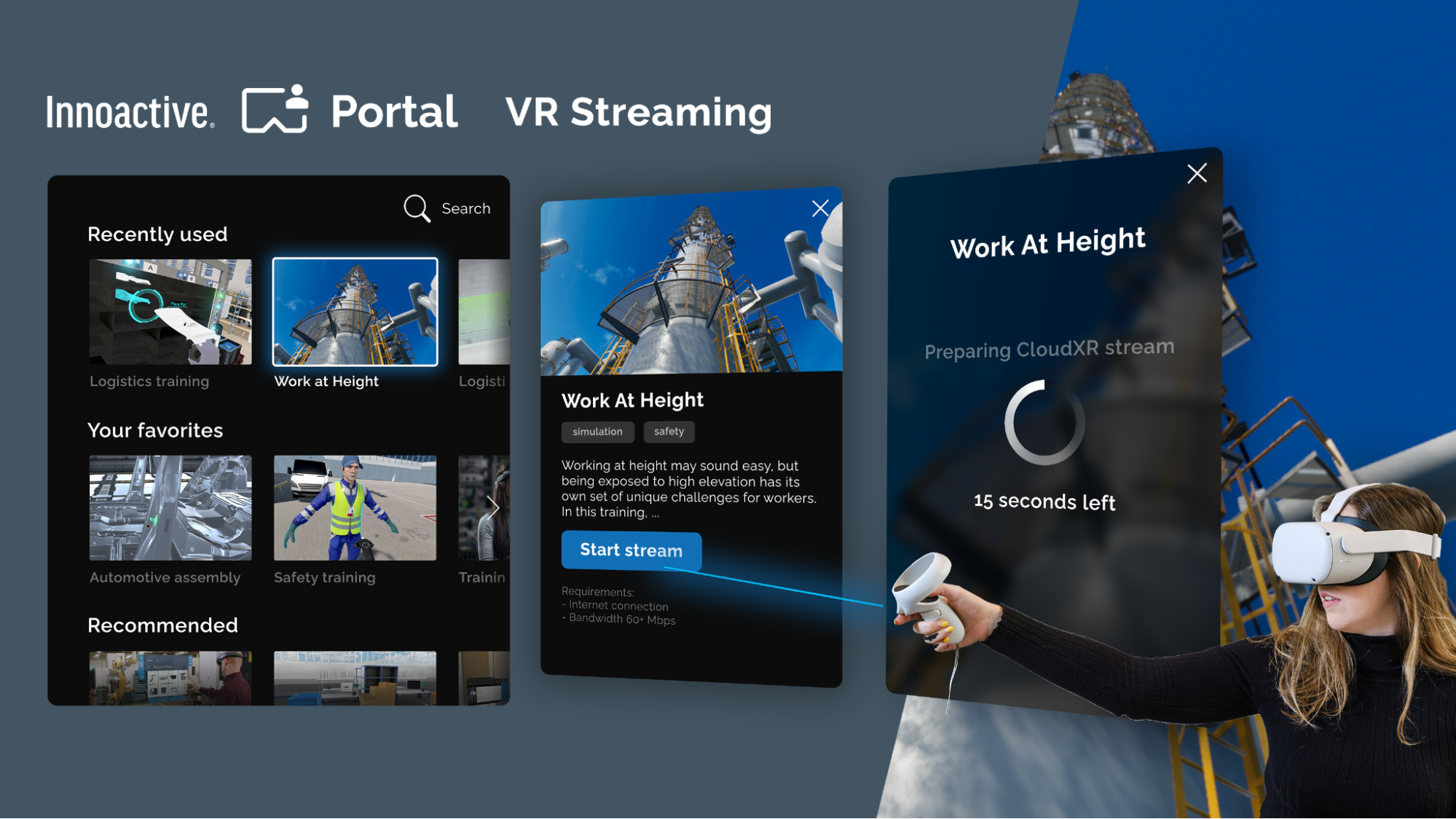

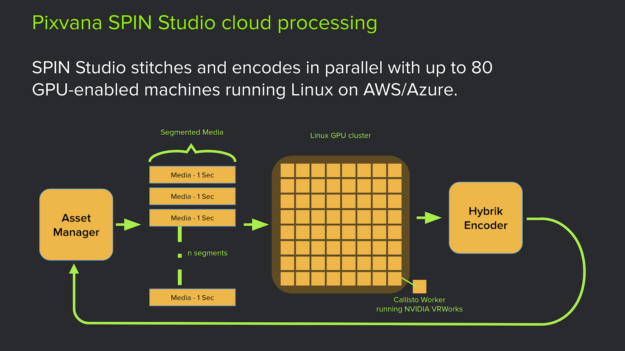

SPIN Studio is Pixvana’s cloud-based platform that uses VRWorks 360 Video for rendering high-quality VR video in a reasonable time. A smart resource management system hosted on Amazon Web Services (AWS) allows users to edit and render multiple videos simultaneously. Once a user requests a render, the system splits the video into multiple segments, which are then distributed to multiple instances. This system can manage work among disparate cloud services platforms for greater throughput. In the current architecture, Pixvana uses both AWS and Microsoft Azure.

Once the jobs complete, one last process collects the results to produce the final video in its entirety. Each GPU instance runs Callisto, our in-house video processing engine, inside a Docker container. Callisto incorporates industry-standard video and computer vision libraries, including FFmpeg and OpenCV, our own C++ and CUDA-based image-processing algorithms, and the VRWorks 360 Video SDK. This system allows video images to be manipulated and formatted in complex ways.
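
The split step described above can be sketched as a simple partition of the frame range. The fixed segment length, the `Segment` type, and the function name are assumptions for illustration; the actual scheduler in SPIN Studio is not described in this post.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// A contiguous run of frames that one GPU instance renders on its own.
struct Segment {
    int firstFrame;
    int frameCount;
};

// Splits [0, totalFrames) into segments of at most framesPerSegment frames.
// The final segment may be shorter than the others.
std::vector<Segment> splitIntoSegments(int totalFrames, int framesPerSegment) {
    assert(totalFrames >= 0 && framesPerSegment > 0);
    std::vector<Segment> segments;
    for (int start = 0; start < totalFrames; start += framesPerSegment) {
        segments.push_back(
            {start, std::min(framesPerSegment, totalFrames - start)});
    }
    return segments;
}
```

Because the segments are returned in frame order, the collecting process can concatenate the rendered results in the same order to rebuild the full video.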

All VR video processing involves the following steps:

- Calibrate, or determine rules for rotating and warping the input images

- Edit, trim, color manipulate, seam adjust, and orient

- Render and encode

Creating 360 videos is easier than ever thanks to cloud-based technology, new specialty formats, and Pixvana’s breakthrough VR pipeline. Next, we’ll discuss working in Graphics, 360 Video, and Spatialized Audio within VRWorks, as well as future plans for Pixvana’s VR pipeline.

VRWorks in the Cloud

Let’s now discuss how to leverage VRWorks and cloud-powered processing to optimize VR video production. We’ll also cover some best practices we’ve developed along the way.

How VRWorks works

VRWorks is a set of APIs, libraries, and engines specific to VR usage. VRWorks consists of three main components: VRWorks Graphics (for application and headset developers), VRWorks 360 Video, and VRWorks Audio (for spatialized, ray traced audio). Pixvana primarily leverages 360 Video features.

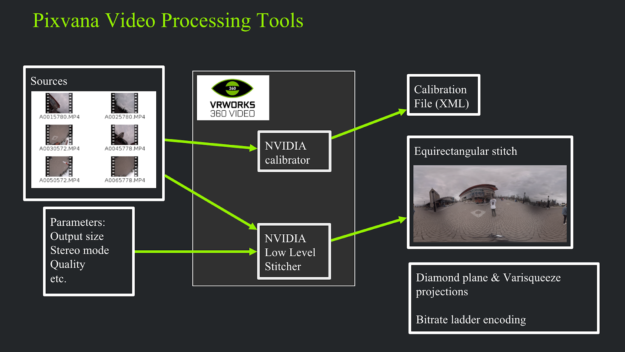

The NVIDIA VRWorks 360 Video SDK supports file-, stream- and frame-based workflows and includes five modules: a high-level stitcher, a calibrator, a low-level stitcher, a warper, and a spatial audio processor. The high-level stitching pipeline includes GPU-accelerated demux, mux, decode and encode to work directly with video files and streams.

While well optimized, the high-level stitcher does not let us interact with it to make editing decisions. Instead, we use a combination of the calibrator and the low-level stitcher to enable user interactions. The low-level stitching pipeline works directly with frames in CUDA device memory and provides more flexibility and application control of threads of execution and data transfers. The highly optimized CUDA-based warper performs image warping and distortion removal by converting images between perspective, fisheye and equirectangular projections. The warper can also transform equirectangular stitched output into projection formats such as cubemaps to eliminate oversampling and reduce the required streaming bandwidth.
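
The geometry behind an equirectangular-to-cubemap conversion can be shown without the SDK. The sketch below is plain C++ math, not the VRWorks warper API, and the axis convention (+Z forward, +X right, +Y up) and face orientations are assumptions. For each output pixel on a cube face it finds the equirectangular coordinate to sample.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { double x, y, z; };
struct UV { double u, v; };  // normalized equirectangular coordinates

enum class Face { Front, Right, Back, Left, Top, Bottom };

// Viewing direction through face coordinates (s, t) in [0, 1], where s grows
// to the right and t grows downward, as in image coordinates.
Vec3 faceDirection(Face face, double s, double t) {
    assert(s >= 0.0 && s <= 1.0 && t >= 0.0 && t <= 1.0);
    const double a = 2.0 * s - 1.0;  // right is positive
    const double b = 1.0 - 2.0 * t;  // up is positive
    switch (face) {
        case Face::Front:  return {a, b, 1.0};
        case Face::Right:  return {1.0, b, -a};
        case Face::Back:   return {-a, b, -1.0};
        case Face::Left:   return {-1.0, b, a};
        case Face::Top:    return {a, 1.0, -b};
        case Face::Bottom: return {a, -1.0, b};
    }
    return {0.0, 0.0, 1.0};  // not reached for valid enum values
}

// Maps a direction to equirectangular (u, v): u = 0.5 is straight ahead,
// v = 0 is the zenith and v = 1 is the nadir.
UV directionToEquirect(const Vec3& d) {
    const double kPi = 3.14159265358979323846;
    const double len = std::sqrt(d.x * d.x + d.y * d.y + d.z * d.z);
    assert(len > 0.0);
    const double lon = std::atan2(d.x, d.z);
    const double lat = std::asin(d.y / len);
    return {lon / (2.0 * kPi) + 0.5, 0.5 - lat / kPi};
}
```

A conversion loop would call `faceDirection` and `directionToEquirect` for every output pixel and sample the stitched image at the resulting coordinate, which is the kind of per-pixel work that maps well to a GPU.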

Figure 8 illustrates a calibration workflow. The box at the left, labeled “Sources”, represents the user input. The NVIDIA calibrator determines a complete set of intrinsic parameters (focal length, distortion coefficients, field of view) and extrinsic parameters (orientation and position) for each of the cameras from as little as a single frame of input data.

Some caveats exist, such as feature distance, sufficient features, and appropriate lighting. A scene should not have features within one or two meters of the rig, as significant parallax confuses the algorithm. A scene with large, featureless regions, such as a wall, may cause alignment problems. Finally, the scene should be well illuminated, neither so dark that you cannot visually distinguish features in all parts of the input frame, nor so bright that significant portions of the input frame are saturated.
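
The lighting caveat can be checked before calibration is attempted. The sketch below is a hypothetical pre-check, not part of VRWorks: it counts how much of an 8-bit luminance frame is very dark or saturated. The thresholds (16, 250, and a 25% limit) are illustrative assumptions, not values from the SDK.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

struct ExposureStats {
    double darkFraction;       // share of pixels below the dark threshold
    double saturatedFraction;  // share of pixels above the bright threshold
};

// Measures how much of an 8-bit luminance frame is too dark or saturated.
ExposureStats measureExposure(const std::vector<std::uint8_t>& luma,
                              std::uint8_t darkBelow = 16,
                              std::uint8_t saturatedAbove = 250) {
    assert(!luma.empty());
    std::size_t dark = 0;
    std::size_t saturated = 0;
    for (std::uint8_t value : luma) {
        if (value < darkBelow) {
            ++dark;
        } else if (value > saturatedAbove) {
            ++saturated;
        }
    }
    const double n = static_cast<double>(luma.size());
    return {dark / n, saturated / n};
}

// Flags frames where either extreme covers more than maxFraction of pixels.
bool exposureLooksUsable(const ExposureStats& stats, double maxFraction = 0.25) {
    return stats.darkFraction <= maxFraction &&
           stats.saturatedFraction <= maxFraction;
}
```

A frame that fails this check is a candidate for choosing a different calibration frame or for supplying a focal length estimate.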

The image in figure 9 shows the result from a very easy calibration case.

Figure 10 below shows a result from a challenging case. You can see significant saturation in three of the sensors. In this case, providing a focal length estimate to the calibration system allowed the calibration process to converge. However, easily distinguishable alignment flaws are visible.

The code snippet below provides an example of how easy it is to call NVcalib, the VRWorks calibrator function. There are seven essential steps:

- Create calibration instance

- Set camera rig properties

- Set calibration options (such as a focal length guess)

- Pass the input image pointers to the calibration instance

- Instruct the calibrator to perform the calibration

- Retrieve the results

- Destroy the calibration instance

```cpp
// 1. Create calibration instance
verifyNvidiaErrorCode(
    nvcalibCreateInstance(framesCount, cameraCount, &hInstance),
    hInstance,
    "create calibration instance");

// 2. Set rig properties
verifyNvidiaErrorCode(
    nvcalibSetRigProperties(hInstance, &videoRig_),
    hInstance,
    "set rig properties");

// 3. Set calibration options.
setCalibrationOptions(hInstance, calOptions);

// 4. Assign the input images to this calibration instance.
setCalibrationImages(hInstance, calImages);

// 5. Perform the calibration
verifyNvidiaErrorCode(
    nvcalibCalibrate(hInstance),
    hInstance,
    "VRWorks calibration");

// 6. Retrieve the calibration results.
verifyNvidiaErrorCode(
    nvcalibGetRigProperties(hInstance, &videoRig_),
    hInstance,
    "retrieve rig calibration properties");

// 6a. Evaluate quality
float q;
nvcalibResult qualResult(nvcalibGetResultParameter(
    hInstance, NVCALIB_RESULTS_ACCURACY, NVCALIB_DATATYPE_FLOAT32, 1, &q));

// 7. Destroy instance
verifyNvidiaErrorCode(
    nvcalibDestroyInstance(hInstance),
    hInstance,
    "destroy VRWorks calibration instance");
```

The nvssVideoStitch low-level stitcher function is equally simple to call. This set of steps is a rough schematic example and does not represent an optimal workflow, but it does allow the steps to be shown in one code block. In this case, the steps are:

- Set the stitcher properties

- Initialize stitcher instance (required once per video).

- Load the input data onto the nvstitch instance

- Perform the stitch

- Retrieve the stitched image from the stitcher

- Destroy the stitcher instance (required once per video)

```cpp
// 1. Set the stitcher properties
nvssVideoStitcherProperties_t stitcher_props(getStitcherProperties(
    top_left_x,
    top_left_y,
    outputImageRGBA,
    pipeline,
    stitchQuality,
    projection_type,
    pano_width,
    stereo_ipd,
    feather_width,
    gpus,
    min_dist,
    mono_flags));

// 2. Initialize stitcher instance (required once per video)
nvssVideoHandle stitcher;
verifyNvidiaErrorCode(
    nvssVideoCreateInstance(&stitcher_props, calParams->getPvideoRig(), &stitcher));

// 3. Load the input data onto the nvstitch instance.
copyImageCUDAVector2nvstitch(&stitcher, calParams, inputImageCUDAVector);

// Synchronize CUDA before snapping start time
cudaStreamSynchronize(cudaStreamDefault);
const auto stitch_start = high_resolution_clock::now();

// 4. Perform the stitch
verifyNvidiaErrorCode(nvssVideoStitch(stitcher));

// Synchronize CUDA before snapping end time
cudaStreamSynchronize(cudaStreamDefault);

// Report stitch time
auto stitching_time_ms =
    std::chrono::duration_cast<std::chrono::milliseconds>(
        high_resolution_clock::now() - stitch_start)
        .count();
DEV_LOG("Stitching done. Stitching time in milliseconds is: ");
DEV_INSPECT(stitching_time_ms);

// 5. Copy the stitched image from the stitcher to outputRGBA
size_t out_offset = 0;
unsigned char* out_stacked(outputImageRGBA->getPDataUint8());
nvstitchImageBuffer_t output_image;
int num_eyes = stereo_flag ? 2 : 1;
DEV_INSPECT(num_eyes);

for(int eye = 0; eye < num_eyes; eye++)
{
    if(stereo_flag)
    {
        DEV_LOG("writing stereo stitched output");
        verifyNvidiaErrorCode(
            nvssVideoGetOutputBuffer(stitcher, nvstitchEye(eye), &output_image));
    }
    else
    {
        verifyNvidiaErrorCode(
            nvssVideoGetOutputBuffer(stitcher, NVSTITCH_EYE_MONO, &output_image));
    }

    DEV_LOG("copying output panorama from CUDA buffer.");
    if(cudaMemcpy2D(
           out_stacked + out_offset,
           output_image.row_bytes,
           output_image.dev_ptr,
           output_image.pitch,
           output_image.row_bytes,
           output_image.height,
           cudaMemcpyDeviceToHost) != cudaSuccess)
    {
        throwVRWorksError("Error copying output stacked panorama from CUDA buffer");
    }

    // Stack each eye's panorama below the previous one in the output buffer
    out_offset += output_image.height * output_image.row_bytes;
}

// 6. Destroy the stitcher instance (required once per video)
verifyNvidiaErrorCode(nvssVideoDestroyInstance(stitcher));
```

Exposure compensation is an important concern for VR videos. Since an outdoor scene shot in 360 will include both the sun and shadow, the dynamic range varies substantially. Camera sensors have limited dynamic range, so each camera in a rig will often set its exposure automatically. This leads to luminance variance between images in the regions where they overlap in the final stitch. We measure the luminance differences and compensate for them before passing the images to the VRWorks stitcher. Pixvana is working with the NVIDIA team to improve the stitcher to handle this and provide more control and features to produce even better stitches.
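
One simple way to express this kind of compensation is a per-camera gain derived from the overlap region. The sketch below is an assumption for illustration, not Pixvana's actual algorithm: it compares the mean luminance two cameras record for the same overlapping area and returns the factor that would bring the second camera in line with the first. The function names are hypothetical.

```cpp
#include <cassert>
#include <numeric>
#include <vector>

// Mean of a set of luminance samples taken from an overlap region.
double meanLuminance(const std::vector<double>& samples) {
    assert(!samples.empty());
    return std::accumulate(samples.begin(), samples.end(), 0.0) /
           static_cast<double>(samples.size());
}

// Gain to apply to the other camera so that its overlap region matches the
// reference camera's mean luminance for the same part of the scene.
double overlapGain(const std::vector<double>& referenceOverlap,
                   const std::vector<double>& otherOverlap) {
    const double otherMean = meanLuminance(otherOverlap);
    assert(otherMean > 0.0);
    return meanLuminance(referenceOverlap) / otherMean;
}
```

With more than two cameras, each pairwise gain constrains its neighbors, so a practical system would likely solve for all gains together rather than chaining them one pair at a time.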

Cloud Processing Benefits, Drawbacks and Workarounds

Figure 11 illustrates one of the chief benefits of cloud computing. Each input in this example video is split into short segments, which are then distributed among a number of computers for stitching. These stitched segments are gathered and encoded into a single stitched video. The final video is then placed in the user’s media asset library, ready to watch.

Four significant limitations exist with our cloud-based approach. First, we have a limited amount of control over the GPUs that we use. Second, we must maintain a web-based VR editor. Third, we need to manage coordination among many services. Finally, users must upload their source video, a potentially time-consuming process.

Pixvana has developed a cloud-ingest capability and a desktop uploader for Windows and macOS to solve the problem of transferring files to our cloud-based system. The desktop uploader gives the user maximum flexibility by resuming after an interruption, such as network loss or system power-down. The uploader also preserves folder hierarchies, reproducing the user-defined file structure in the cloud. The upload status is reported simultaneously in the web UI and in the uploader, allowing a remote editor to track progress.
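
Resuming after an interruption usually comes down to knowing how much of a file the server already holds. The sketch below is a generic illustration of that idea, not the protocol Pixvana's uploader uses. It assumes fixed-size chunks and a confirmed byte count from the server; `Chunk` and `remainingChunks` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// A byte range of the source file that still has to be sent.
struct Chunk {
    std::uint64_t offset;
    std::uint64_t size;
};

// Lists the chunks left to upload. A partially confirmed chunk is sent again
// from its start, so the resume point is rounded down to a chunk boundary.
std::vector<Chunk> remainingChunks(std::uint64_t fileSize,
                                   std::uint64_t bytesConfirmed,
                                   std::uint64_t chunkSize) {
    assert(chunkSize > 0 && bytesConfirmed <= fileSize);
    std::vector<Chunk> chunks;
    if (bytesConfirmed == fileSize) {
        return chunks;  // nothing left to send
    }
    std::uint64_t offset = (bytesConfirmed / chunkSize) * chunkSize;
    for (; offset < fileSize; offset += chunkSize) {
        chunks.push_back({offset, std::min(chunkSize, fileSize - offset)});
    }
    return chunks;
}
```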

Pixvana also created a suite of tools to solve the major problems facing VR creators. Highlights include a universal player, in-headset post-processing tools, and a web-based editing and management system. Cloud computing makes all of these innovations possible. This cloud-based VR video editing platform, with NVIDIA’s VRWorks at the core, is how Pixvana will realize the potential of XR storytelling.

Check out Pixvana’s GTC session video to find out more about Pixvana’s approach to VR in the cloud.

Future Plans

Pixvana is dedicated to improving our cloud-based VR pipeline so that the experience and quality for enterprise filmmakers keep getting better.

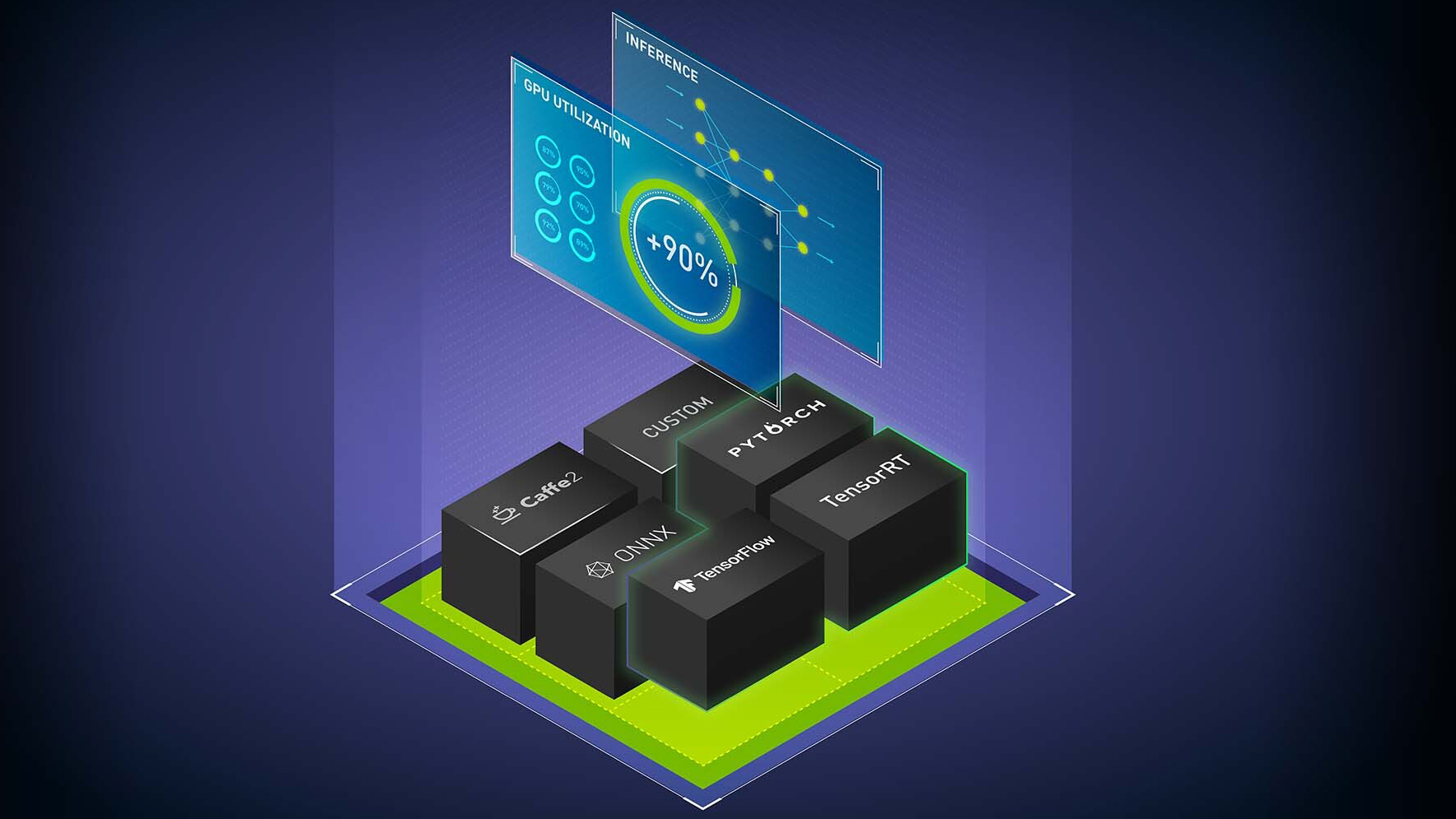

- With the amount of video being processed, we are looking at leveraging Machine Learning and AI to increase what’s possible for 360 filmmaking.

- We’re also investigating how to improve the stitching process by using image recognition, allowing for content-aware filling, and optimizing the metadata of the video content.

- While we are currently focused on 360 video for VR headsets, we expect to cover specialized uses in AR and MR as well — the full XR range.

- Volumetric capture continues to be developed, and can provide full 6DOF captured experiences. Support for this feature will fold into the pipeline as it becomes more practical.

- Light fields are another VR video technology that could provide fully reproduced live capture in a 6DOF environment.

Of course, all of these will require even more GPU power on the cloud.