Video Series: Shiny Pixels and Beyond – Real-Time Raytracing at SEED

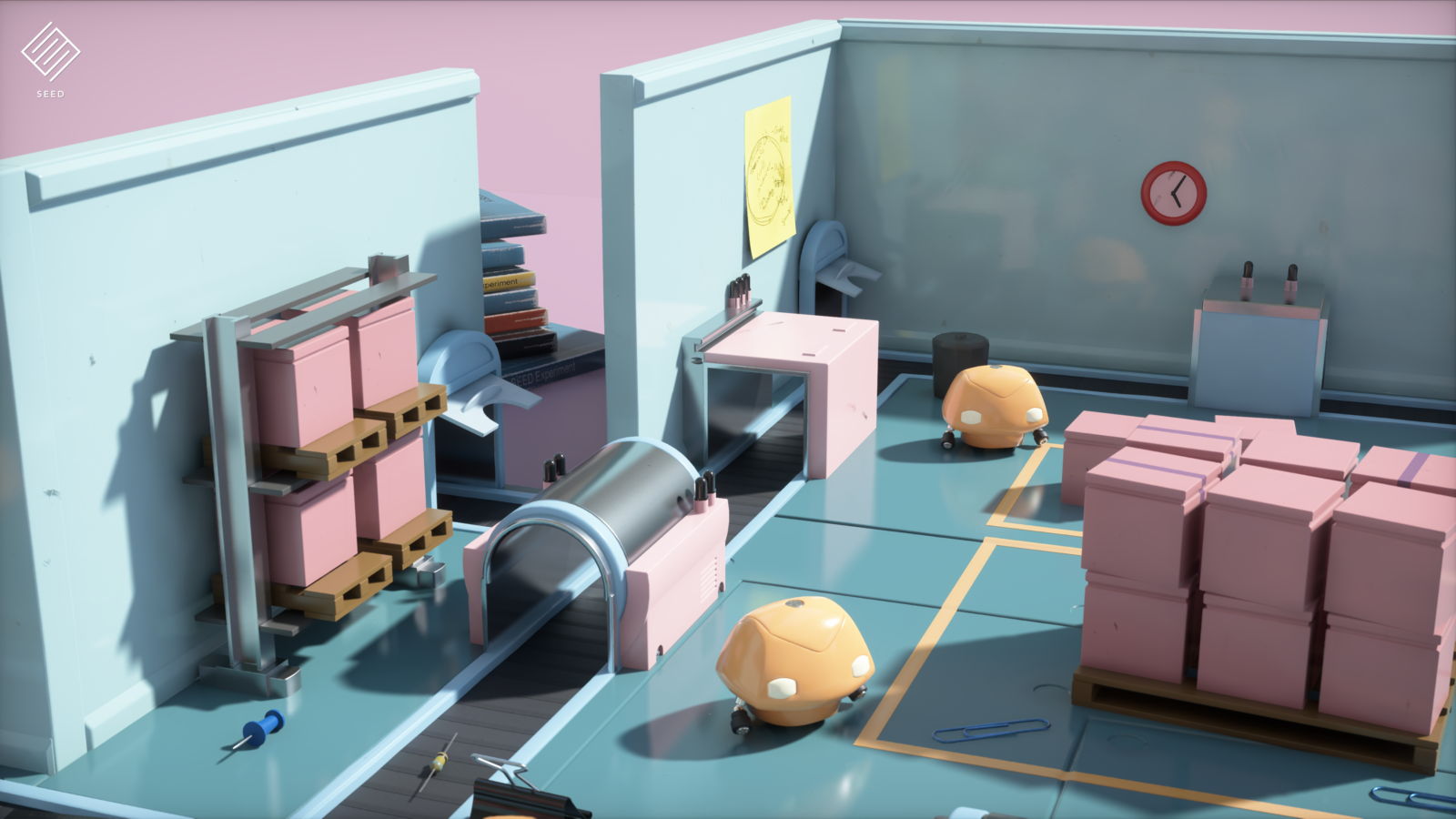

SEED, Electronic Arts’ “Search for Extraordinary Experiences Division”, walks through what they learned about real-time ray tracing while building the impressive “PICA PICA” demo. The lessons are spread over the following four videos.

- Exploring Real-Time Ray Tracing and Self-Learning AI with the “PICA PICA” Demo (8:23 min)

- Hybrid Rendering Pipeline and Reflection Rays (9:24 min)

- Materials, Transparency, Translucency, Global Illumination, Sampling, and Shadows (9:29 min)

- Texture Level of Detail and Summary (11:47 min)

Part 1: Exploring Real-Time Ray Tracing and Self-Learning AI with the “PICA PICA” Demo (8:23 min)

In just a few months with DXR, SEED designed a demo showing what could be done with depth of field, ray traced reflections, ray traced ambient occlusion, soft shadows, translucency, self-learning agents, and dynamic global illumination.

Seven Key Things from Part 1:

- The characters in the “PICA PICA” demo are self-learning agents – the “game” is made for them to play!

- SEED got clear and consistent visuals using hybrid ray tracing (via DXR) to create procedurally-assembled worlds with no pre-computation.

- Just three artists in total created the “PICA PICA” demo. Ray tracing sped up the art pipeline, reducing the need for manual crafting of art assets.

- Ray tracing helps solve sparse and incoherent problems.

- Having a unified API (DX12) is super helpful, and makes a big difference, as does access to next generation GPUs.

- Self-learning AI was implemented using TensorFlow for the runtime. This allows the agents to learn new types of environments on their own.

- The AI gets more than just head-on information – they see all angles at once.

Part 2: Hybrid Rendering Pipeline and Reflection Rays (9:24 min)

While benefits exist for using a pure ray tracing pipeline, SEED found that working with a hybrid rendering pipeline is currently most efficient. In this video, SEED explains why this approach is sensible, and we see how different rendering techniques can work together. After providing this topline view, SEED segues into a deep dive on reflection rays.

Five Key Things from Part 2:

- The hybrid rendering pipeline begins with deferred shading (rasterization). Afterwards, you can produce direct shadows (raytrace or raster), determine direct lighting (compute), output reflections (raytrace), calculate global illumination (raytrace), process ambient occlusion (raytrace or compute), and render transparencies (raytrace).

- Before spawning a mesh, you build a bottom-level acceleration structure for it.

- Mesh instances are specified in the top-level acceleration structure.

- If you move a mesh, you must update the instance’s position/orientation in the top-level acceleration structure.

- The team traced reflection rays at half resolution, then reconstructed the result at full resolution using spatiotemporal filtering.
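The split between per-mesh and per-instance data in the acceleration structure bullets can be illustrated with a toy model. This is only a sketch, not DXR code: the class names `BottomLevelAS`, `Instance`, and `TopLevelAS` are hypothetical, a bounding box stands in for the real per-mesh structure, and orientation is left out so that an instance carries just a translation.

```python
from dataclasses import dataclass, field


@dataclass
class BottomLevelAS:
    """Stand-in for a per-mesh acceleration structure (here: a local-space box)."""
    mesh_name: str
    local_min: tuple
    local_max: tuple


@dataclass
class Instance:
    """One placement of a mesh: a reference to its structure plus a world offset."""
    blas: BottomLevelAS
    translation: tuple = (0.0, 0.0, 0.0)


@dataclass
class TopLevelAS:
    """Stand-in for the scene-level structure: a table of instances."""
    instances: dict = field(default_factory=dict)

    def add_instance(self, instance_id, blas, translation=(0.0, 0.0, 0.0)):
        self.instances[instance_id] = Instance(blas, translation)

    def move_instance(self, instance_id, translation):
        # Only the instance record changes; the per-mesh structure is not rebuilt.
        self.instances[instance_id].translation = translation

    def world_bounds(self, instance_id):
        inst = self.instances[instance_id]
        lo = tuple(a + t for a, t in zip(inst.blas.local_min, inst.translation))
        hi = tuple(a + t for a, t in zip(inst.blas.local_max, inst.translation))
        return lo, hi


# Build the per-mesh structure once, before spawning any instance of the mesh.
cube = BottomLevelAS("cube", (-1.0, -1.0, -1.0), (1.0, 1.0, 1.0))
scene = TopLevelAS()
scene.add_instance("cube_a", cube)
scene.add_instance("cube_b", cube, translation=(5.0, 0.0, 0.0))

# Moving a mesh touches only its entry in the top-level structure.
scene.move_instance("cube_a", (0.0, 2.0, 0.0))
```

Both instances share one per-mesh structure, which is why moving a mesh is cheap compared with rebuilding its geometry data.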

Part 3: Materials, Transparency, Translucency, Global Illumination, Sampling, and Shadows (9:29 min)

Next, SEED describes acquiring lightmapping samples, why a process might become invalid, and how to manage direct light.

Seven Key Things from Part 3:

- Combine multiple microfacet surface layers into a single, unified and expressive BRDF.

- Rapidly experiment with different looks; bake down number of layers for production.

- BRDF Sampling: launch one ray for the whole stack then stochastically select a layer and sample. You need to estimate visibility versus other layers. Clever filtering techniques are required when generating a single value for multiple layers.

- Translucency: Inner-structure scattering is based on light traveling inside the medium; results converge over a couple of frames and are denoised or temporally accumulated.

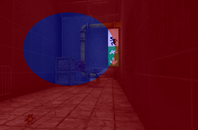

- Transparency: Launch a ray using the view’s origin and direction, then refract it based on the medium’s index of refraction (IOR). Works for clear and rough glass.

- Sampling & Integration: Compute surfel irradiance by path tracing (PT). When shooting rays each frame, limit the depth and number of paths. Limiting depth means fewer bounces; reuse results from previous frames.

- Shadows: Accumulated and filtered in screen space.
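The refraction step in the transparency bullet follows Snell's law. Here is a minimal sketch of that step alone, assuming unit-length vectors stored as plain tuples; the function name `refract` and the parameter `eta` (the ratio of the IOR on the incoming side to the IOR on the outgoing side) are illustrative choices, not names from the talk. It covers the clear-glass case only; rough glass would additionally perturb the normal.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def refract(incident, normal, eta):
    """Refract a unit `incident` direction at a surface with unit `normal`.

    `normal` must point against `incident`. `eta` is n_from / n_to.
    Returns the refracted direction, or None on total internal reflection.
    """
    cos_i = -dot(normal, incident)
    k = 1.0 - eta * eta * (1.0 - cos_i * cos_i)
    if k < 0.0:
        return None  # Total internal reflection: no transmitted ray.
    scale = eta * cos_i - math.sqrt(k)
    return tuple(eta * i + scale * n for i, n in zip(incident, normal))


# A view ray hitting glass (IOR 1.5) head-on from air passes straight through.
straight = refract((0.0, 0.0, -1.0), (0.0, 0.0, 1.0), 1.0 / 1.5)

# A shallow ray leaving glass into air is totally internally reflected.
trapped = refract((math.sin(math.radians(60)), 0.0, -0.5), (0.0, 0.0, 1.0), 1.5)
```

In a ray tracer, a `None` result would typically be handled by tracing a reflection ray instead.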

Part 4: Texture Level-of-Detail and Summary (11:47 min)

What about texture level of detail? Mipmapping is the standard method to avoid texture aliasing. But what about when you’re using ray tracing? In this video, SEED explains how this works, noting that mipmapping is supported by all GPUs for rasterization via shading quads and derivatives.
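For context, here is roughly how the rasterization path picks a mip level. Pixels are shaded in 2x2 quads, so the hardware can difference texture coordinates between neighboring pixels and turn that footprint into a level of detail. The sketch below uses the common textbook formula; the function name `mip_level` and its parameters are assumptions for illustration, and real hardware may differ in the details.

```python
import math


def mip_level(duv_dx, duv_dy, tex_width, tex_height, num_mips):
    """Pick a mip level from screen-space UV derivatives.

    `duv_dx` and `duv_dy` are the change in (u, v) between horizontally and
    vertically adjacent pixels, which a rasterizer gets from its shading quad.
    """
    # Scale UV derivatives into texel units.
    dx = (duv_dx[0] * tex_width, duv_dx[1] * tex_height)
    dy = (duv_dy[0] * tex_width, duv_dy[1] * tex_height)
    # Footprint size: the longer of the two screen-space steps, in texels.
    rho = max(math.hypot(*dx), math.hypot(*dy))
    if rho <= 1.0:
        return 0.0  # At most one texel per pixel: use the full-resolution level.
    return min(math.log2(rho), float(num_mips - 1))


# One texel per pixel samples mip 0; four texels per pixel samples mip 2.
near = mip_level((1 / 256, 0.0), (0.0, 1 / 256), 256, 256, 9)
far = mip_level((4 / 256, 0.0), (0.0, 4 / 256), 256, 256, 9)
```

A ray hit has no neighboring pixels in a quad to difference against, which is why ray tracing needs another way to estimate the footprint.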

Five Key Things from Part 4:

- The heuristic is based on triangle properties, a curvature estimate, distance, and the incident angle. It gives similar quality to ray differentials with a single trilinear lookup. A single value is stored in the payload for subsequent rays, as described in a technical paper here.

- A unified API makes things easy to experiment with and integrate.

- Flexible but complex tradeoffs must be weighed – noise vs. ghosting vs. perf.

- Ray tracing techniques can enable very high quality cinematic visuals.

- There’s much left to explore: performance, raster vs. trace, sparse rendering, denoising, and new techniques.
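The noise vs. ghosting tradeoff can be seen in even the simplest form of temporal accumulation, an exponential moving average over frames. This is a generic sketch rather than SEED's filter; the function names and the blend factor `alpha` are assumptions for illustration.

```python
def accumulate(history, current, alpha):
    """Blend the new frame's value into the running history."""
    return history + alpha * (current - history)


def run(samples, alpha, start=0.0):
    """Feed a sequence of per-frame values through the accumulator."""
    value = start
    for sample in samples:
        value = accumulate(value, sample, alpha)
    return value


# The scene changes from 0.0 to 1.0 and then stays put for ten frames.
slow = run([1.0] * 10, alpha=0.1)  # Smooths noise well, but lags (ghosting).
fast = run([1.0] * 10, alpha=0.5)  # Tracks changes quickly, but averages less noise away.
```

A small `alpha` keeps more history, so stale values linger after the scene changes; a large `alpha` follows the scene closely but lets more per-frame noise through.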

We hope you’ve gotten value from this installment in NVIDIA’s Coffee Break series!

If this intrigues you, you can find the full presentation on the GDC Vault. You can also download the presentation.